MuleSoft Agent Fabric adds new ways to keep AI agents in line

The Challenge of Agent Sprawl in the Modern Enterprise

As organizations move beyond experimental generative AI toward functional agentic workflows, they often find themselves managing a fragmented landscape of disconnected tools. Different departments—ranging from customer service and sales to human resources and finance—may deploy specialized AI agents from various vendors. Without a centralized management layer, these agents often operate in silos, leading to redundant workflows, inconsistent data usage, and a lack of transparency regarding operational costs.

This "agent sprawl" mirrors the "shadow IT" challenges of previous decades but with higher stakes. Because agentic AI systems possess the ability to perform actions—such as updating records, communicating with customers, or triggering financial transactions—the lack of centralized governance introduces significant risks. Salesforce’s latest initiatives are designed to provide the "choke point" necessary for enterprises to scale AI safely, ensuring that every agent, whether built on Salesforce or a third-party platform, adheres to corporate policies and remains cost-effective.

A Chronology of Salesforce’s Agentic Evolution

The journey toward centralized agent governance began in earnest in September 2025, when Salesforce first introduced Agent Fabric. At its inception, the tool served as a registry where enterprises could view and interconnect agents. The roadmap has since accelerated to meet market demands for tighter controls:

- September 2025: Launch of Agent Fabric within the MuleSoft Anypoint Platform, establishing a unified registry for agent discovery and interconnection.

- January 2026: Introduction of deterministic scripting tools and automated "agent scanners" designed to detect and register new AI agents appearing on the network.

- Current Phase (Mid-2026): Expansion of deterministic controls via "Agent Script for Agent Broker" and the general availability of centralized LLM governance through the AI Gateway.

- Future Roadmap: The deterministic orchestration features, currently in beta, are scheduled for general availability in June 2026, providing developers with a long-term framework for multi-agent coordination.

Deterministic Controls: Balancing Reasoning with Reliability

One of the most critical updates to the Salesforce ecosystem is the introduction of Agent Script for Agent Broker. Large language models are inherently "probabilistic": they generate outputs based on statistical likelihood, so the same request can produce different results on different runs. While this allows for human-like reasoning, it can lead to unpredictability in production environments where specific, repeatable outcomes are required.

Agent Script introduces a "deterministic" layer to this process. By allowing developers to codify specific workflows and "if-then" rules, the system can steer an AI agent’s decision-making process according to predetermined business logic. This approach reduces the reliance on large language models (LLMs) for every step of a task, which not only increases reliability but also lowers computational costs.
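The idea of placing fixed rules in front of a model can be illustrated with a minimal Python sketch. This is not Agent Script syntax, which the article does not show; the `Rule` class, the `route` function, the refund rules, and the `call_llm` stand-in are all hypothetical names chosen for illustration. The sketch only shows the general pattern: ordered if-then rules are checked first, and the model is consulted only when no rule matches.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Rule:
    """One hypothetical if-then rule: a predicate plus a fixed action name."""
    name: str
    condition: Callable[[dict], bool]
    action: str


def call_llm(request: dict) -> str:
    """Stand-in for a probabilistic model call (an assumption, not a real API)."""
    return "llm_reasoned_response"


def route(request: dict, rules: list[Rule],
          llm: Callable[[dict], str] = call_llm) -> tuple[str, str]:
    """Return (path, action). Rules are checked in order; the first match wins."""
    for rule in rules:
        if rule.condition(request):
            return ("deterministic", rule.action)
    # No rule matched, so hand off to the model for open-ended reasoning.
    return ("probabilistic", llm(request))


# Illustrative business rules; the 500 threshold is invented for this example.
RULES = [
    Rule("refund_over_limit",
         lambda r: r.get("intent") == "refund" and r.get("amount", 0) > 500,
         "escalate_to_human"),
    Rule("refund_within_limit",
         lambda r: r.get("intent") == "refund",
         "issue_refund"),
]

if __name__ == "__main__":
    print(route({"intent": "refund", "amount": 900}, RULES))
    print(route({"intent": "refund", "amount": 40}, RULES))
    print(route({"intent": "product_question"}, RULES))
```

In this sketch the two refund requests never reach the model, which is the source of both the repeatability and the cost savings described above; only the open-ended product question takes the probabilistic path.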

Robert Kramer, managing partner at KramerERP, emphasizes that pure autonomy is often a liability in high-stakes enterprise environments. "Pure autonomous agents don’t necessarily work in production as enterprises need to ensure predictable outcomes," Kramer noted. He suggests that these new deterministic controls allow for a "secure handoff" where the system follows strict rules for compliance-heavy tasks while still utilizing the model’s reasoning capabilities for nuanced interactions.

Rebecca Wettemann, principal analyst at Valoir, views this as a cost-saving measure as much as a performance enhancer. By providing both deterministic and probabilistic options, Salesforce allows developers to choose the "lower-cost route" for tasks that do not require the full creative power of an LLM, thereby optimizing the return on investment for AI deployments.

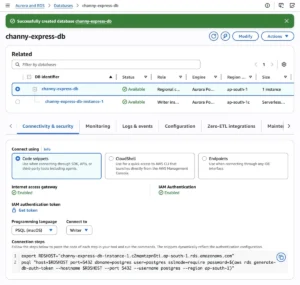

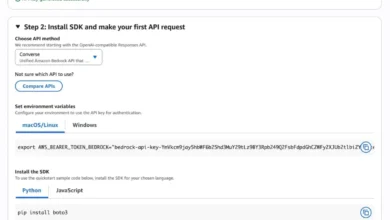

Centralized LLM Governance and the AI Gateway

As AI usage scales, so do the associated costs. Many organizations have struggled with "bill shock" resulting from unmonitored API calls to third-party LLM providers like OpenAI, Anthropic, or Google. Salesforce’s response is the AI Gateway, a control layer within Agent Fabric that has now been bolstered with new LLM Governance capabilities.

Generally available as of this update, the LLM Governance feature provides centralized visibility into token usage, data flows, and real-time costs across all third-party models used within the enterprise. This allows CIOs to move away from a fragmented model where individual teams manage their own API contracts and budgets.

Scott Bickley, an advisory fellow at Info-Tech Research Group, warns that without this centralized "choke point," companies face inconsistent security postures and no way to enforce enterprise-wide policy. "By positioning AI Gateway as the point through which all LLM traffic flows, enterprises gain visibility into AI usage patterns, the models in use, the purpose of the usage, and cost data," Bickley explained. This transparency is vital for justifying the spiraling costs often associated with large-scale AI integration.
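To make the "single point of visibility" idea concrete, here is a small Python sketch of the kind of bookkeeping a central gateway could perform. It does not reflect the AI Gateway's actual interface, which the article does not describe; the `UsageLedger` class, the `LLMCall` record, the model names, and the per-token prices are all assumptions invented for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical prices in USD per 1,000 tokens, for illustration only.
PRICE_PER_1K_TOKENS = {"model-a": 0.50, "model-b": 2.00}


@dataclass(frozen=True)
class LLMCall:
    """One model call as seen by a central gateway (hypothetical record shape)."""
    team: str
    model: str
    prompt_tokens: int
    completion_tokens: int


class UsageLedger:
    """Aggregates token usage and estimated cost across every call it records."""

    def __init__(self, prices: dict[str, float]) -> None:
        self.prices = prices
        self.tokens: dict[tuple[str, str], int] = defaultdict(int)

    def record(self, call: LLMCall) -> None:
        # Key usage by (team, model) so it can be sliced either way later.
        self.tokens[(call.team, call.model)] += (
            call.prompt_tokens + call.completion_tokens
        )

    def cost_by_team(self) -> dict[str, float]:
        totals: dict[str, float] = defaultdict(float)
        for (team, model), tokens in self.tokens.items():
            totals[team] += tokens / 1000 * self.prices[model]
        return dict(totals)

    def teams_over_budget(self, budget_usd: float) -> list[str]:
        return sorted(t for t, c in self.cost_by_team().items() if c > budget_usd)


ledger = UsageLedger(PRICE_PER_1K_TOKENS)
ledger.record(LLMCall("support", "model-a", 1500, 500))
ledger.record(LLMCall("sales", "model-b", 2000, 1000))
ledger.record(LLMCall("sales", "model-a", 800, 200))

if __name__ == "__main__":
    print(ledger.cost_by_team())
    print(ledger.teams_over_budget(5.00))
```

Because every call passes through one ledger, per-team cost and budget checks come from a single data source rather than from separate API contracts, which is the property Bickley describes.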

Bridging the Gap Between Legacy Systems and AI

A significant hurdle in the adoption of AI agents is their inability to communicate with older, "legacy" technology. Most existing enterprise infrastructure relies on REST, SOAP, or GraphQL APIs built long before the advent of modern AI standards. To solve this, Salesforce has introduced features based on the Model Context Protocol (MCP), an open standard designed to simplify how AI agents interact with external data and services.

The new "MCP Bridge" acts as a translator, allowing agents that speak MCP to access legacy APIs without requiring developers to rewrite the underlying code. This "wrapper" approach can save substantial engineering time, enabling AI agents to pull data from decades-old databases or legacy ERP systems as if they were modern, AI-native tools.
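The translation step can be pictured with a short Python sketch. It is not the MCP Bridge implementation, which the article does not detail. It assumes a simplified MCP `tools/call` request (a JSON-RPC message whose `params` carry a tool `name` and `arguments`) and maps it onto a REST request description; the `TOOL_TO_ENDPOINT` table, the `translate` function, the `get_customer` tool, and the `legacy.example.com` host are hypothetical.

```python
from urllib.parse import quote, urlencode

# Hypothetical base URL of a legacy REST service.
BASE_URL = "https://legacy.example.com"

# Hypothetical mapping from an MCP tool name to a legacy REST endpoint.
# "query" lists which tool arguments become query-string parameters;
# the remaining arguments fill placeholders in the path template.
TOOL_TO_ENDPOINT = {
    "get_customer": {
        "method": "GET",
        "path": "/api/v1/customers/{customer_id}",
        "query": ["include"],
    },
}


def translate(tool_call: dict) -> dict:
    """Turn a simplified MCP tools/call message into a REST request description."""
    if tool_call.get("method") != "tools/call":
        raise ValueError("only tools/call messages are handled in this sketch")
    params = tool_call["params"]
    spec = TOOL_TO_ENDPOINT[params["name"]]
    args = dict(params.get("arguments", {}))

    # Split arguments into query-string parameters and path parameters.
    query = {k: args.pop(k) for k in spec["query"] if k in args}
    path = spec["path"].format(
        **{k: quote(str(v), safe="") for k, v in args.items()}
    )

    url = BASE_URL + path
    if query:
        url += "?" + urlencode(query)
    return {"method": spec["method"], "url": url}


example_call = {
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {
        "name": "get_customer",
        "arguments": {"customer_id": "C-1042", "include": "orders"},
    },
}

if __name__ == "__main__":
    print(translate(example_call))
```

The legacy service is untouched in this pattern: only the mapping table changes when a new endpoint is exposed as a tool, which is why a wrapper avoids rewriting the underlying code.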

Furthermore, Salesforce announced a partnership for Informatica-hosted MCPs. This integration brings built-in data quality and governance directly into the agentic workflow. For industries with heavy regulatory requirements, such as healthcare or finance, ensuring that an AI agent is pulling high-quality, "clean" data is paramount. However, experts like Bickley advise caution, noting that data quality checks are not instantaneous. Deduplication and cross-system matching can introduce latency, potentially slowing the real-time responsiveness of the AI agent.

Strategic Implications: The Evolution of MuleSoft

The expansion of Agent Fabric signals a broader strategic move for Salesforce. By layering orchestration, governance, and connectivity into a single platform, the company is positioning MuleSoft as the "system of record" for the AI era. This moves MuleSoft beyond simple API management and into the realm of core AI infrastructure.

However, this shift raises questions about vendor lock-in. As enterprises define their orchestration rules and governance policies within Salesforce’s Agent Fabric, the cost and complexity of switching to a different provider increase. Scott Bickley suggests that CIOs must remain vigilant about their "exit path."

"If your agent control plane runs on Agent Fabric, switching costs rise materially," Bickley warned. He advises IT leaders to evaluate what components of the system are truly portable and what the long-term pricing model looks like, especially when integrating non-Salesforce agents and data sources.

Conclusion: The Path Toward a Governed AI Workforce

The updates to Salesforce’s Agent Fabric represent a maturing of the AI market. The initial excitement surrounding the "magic" of generative AI is being replaced by a pragmatic need for control, predictability, and fiscal responsibility. With a suite of tools that address agent sprawl, add deterministic safeguards, and centralize cost management, Salesforce is attempting to supply the "guardrails" necessary for the next phase of digital transformation.

As enterprises wait for the full suite of features to become generally available in mid-2026, the focus remains on building a foundation that can support an increasingly autonomous workforce. The success of these tools will likely be measured by their ability to turn the chaotic "wild west" of early AI adoption into a disciplined, governed, and ultimately profitable enterprise asset.