The Compute Explosion and the Path to Cognitive Abundance: How Exponential Trends are Redefining Artificial Intelligence

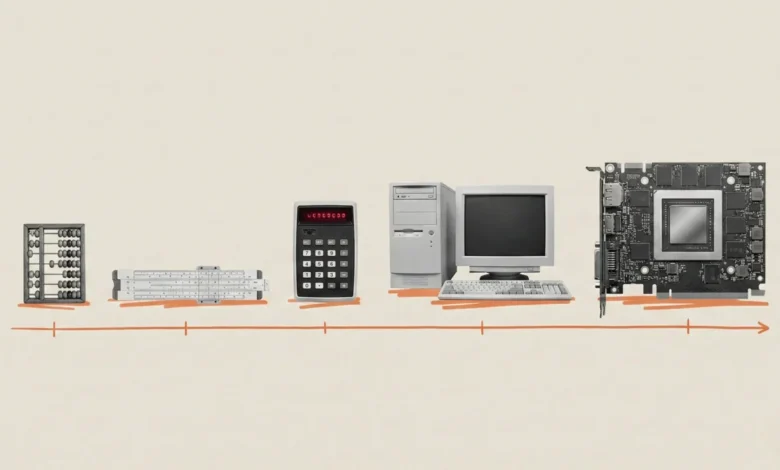

The human brain is biologically predisposed to perceive the world through a linear lens, an evolutionary trait honed on the savannah, where distance and effort correlated directly with time. In a linear reality, walking for two hours covers exactly twice the distance of walking for one. This ingrained intuition becomes a liability when trying to comprehend the trajectory of artificial intelligence. According to Mustafa Suleyman, CEO of Microsoft AI, the fundamental drivers of AI are not merely growing; they are growing exponentially, in a way that defies traditional expectations. Since 2010, the computational power used to train frontier AI models, measured in floating-point operations, or "flops," has increased roughly a trillionfold. This leap from early systems trained with about 10¹⁴ flops to modern frontier models exceeding 10²⁶ flops is a shift so profound that it demands a complete re-evaluation of the technological landscape.
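To make the trillionfold figure concrete, the following Python sketch converts the two compute levels quoted above into a growth factor, a number of doublings, and an implied doubling time. The 14-year span (2010 to 2024) is an assumption chosen for illustration, and `growth_summary` is a hypothetical helper, not something from the source.

```python
import math

def growth_summary(start_flops: float, end_flops: float, years: float) -> dict:
    """Summarize exponential growth between two training-compute levels."""
    factor = end_flops / start_flops
    doublings = math.log2(factor)
    return {
        "factor": factor,
        "doublings": doublings,
        "doubling_months": years * 12 / doublings,
    }

# 1e14 and 1e26 flops come from the text; the 14-year span is an assumption.
summary = growth_summary(1e14, 1e26, years=14)
print(f"growth factor: {summary['factor']:.0e}")
print(f"doublings: {summary['doublings']:.1f}")
print(f"implied doubling time: {summary['doubling_months']:.1f} months")
```

Under these assumptions, a trillionfold increase corresponds to about 40 doublings, or one doubling a little over every four months.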

The Convergence of Hardware and Architectural Innovation

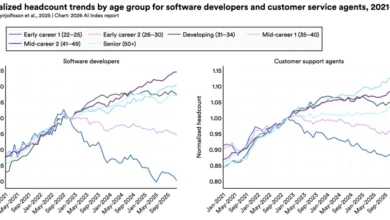

The rapid acceleration of AI capabilities is not the result of a single breakthrough but rather the convergence of three distinct technological vectors: raw processor speed, memory bandwidth, and massive-scale networking. Skeptics frequently point to the slowing of Moore’s Law (the observation that the number of transistors on a microchip doubles approximately every two years) as evidence that AI progress will soon hit a plateau. Yet the "compute ramp" suggests that architectural innovations are outpacing the limits of transistor scaling alone.

The first vector is the raw performance of the "calculators" themselves. Nvidia, the current market leader in AI hardware, has delivered a sevenfold increase in raw performance in just six years. Their A100 chips, which were the industry standard in 2020, offered 312 teraflops of performance; the new Blackwell architecture delivers 2,250 teraflops. Complementing this, Microsoft has introduced its own custom silicon, the Maia 200, which is designed specifically for AI inference, offering 30% better performance-per-dollar than existing third-party hardware.
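As a quick check on the "sevenfold" figure, this Python sketch computes the ratio of the two teraflops numbers quoted above and the compound annual growth rate it implies. The constant names are illustrative, and the six-year window is taken directly from the text.

```python
# Teraflops figures and the six-year window are the ones quoted in the text.
A100_TFLOPS = 312
BLACKWELL_TFLOPS = 2250
YEARS = 6

ratio = BLACKWELL_TFLOPS / A100_TFLOPS   # overall performance multiple
annual_growth = ratio ** (1 / YEARS)     # compound growth factor per year

print(f"overall multiple: {ratio:.2f}x")
print(f"compound annual growth: {(annual_growth - 1) * 100:.0f}% per year")
```

The ratio comes out at about 7.2x, which corresponds to roughly 39% compound growth per year over the stated window.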

The second vector involves solving the "memory wall"—the bottleneck that occurs when processors sit idle waiting for data to arrive. This has been addressed through High Bandwidth Memory (HBM), specifically HBM3, which utilizes vertical chip stacking to triple data bandwidth compared to previous generations. By stacking chips like skyscrapers, engineers can feed data to processors fast enough to ensure they are engaged in active computation nearly 100% of the time.

Finally, the third vector is the transition from individual servers to warehouse-scale supercomputers. Technologies such as NVLink and InfiniBand interconnect hundreds of thousands of GPUs so that they function as a single, massive machine. This networking capability has transformed AI training from a task confined to a single server into a large-scale distributed engineering feat, allowing for clusters that were considered technologically impossible only five years ago.

A Chronology of the Compute Revolution

To understand the scale of this transformation, one must look at the timeline of deep learning, which began in earnest in 2012.

- 2012: The AlexNet Breakthrough. The modern era of AI was ignited when the AlexNet image recognition model was trained on just two GPUs. This proved that neural networks could outperform traditional algorithms if given sufficient compute.

- 2017: The Transformer Era. The introduction of the Transformer architecture by Google researchers provided a new way for models to process data in parallel, setting the stage for massive scaling.

- 2020: The Scaling Hypothesis. With the release of GPT-3, it became clear that increasing compute and data led to predictable improvements in model capability. At this time, training a language model on eight GPUs took approximately 167 minutes.

- 2024: The Industrial Scale. Today, equivalent training tasks take under four minutes on modern hardware. While Moore’s Law would have predicted a 5x improvement in this window, the industry has achieved a 50x improvement through combined hardware and software gains.

- 2027–2030: The Era of Cognitive Abundance. Industry forecasts suggest that by 2027, the global supply of AI compute will reach 100 million H100-equivalent GPUs. By 2030, the infrastructure may require up to 200 gigawatts of power, a figure comparable to the combined peak electricity demand of Italy, Germany, France, and the United Kingdom.
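The training-time comparison in the 2020 and 2024 entries can be sketched in Python as follows. The two-year doubling period matches the definition of Moore's Law given earlier in the article; the four-year window and the function names are assumptions made for illustration.

```python
def speedup(old_minutes: float, new_minutes: float) -> float:
    """How many times faster the new run is than the old one."""
    return old_minutes / new_minutes

def moore_factor(years: float, doubling_years: float = 2.0) -> float:
    """Improvement predicted by a fixed doubling period."""
    return 2 ** (years / doubling_years)

# "Under four minutes" makes 4.0 an upper bound on the new time,
# so this speedup is a lower bound.
observed_lower_bound = speedup(167, 4)

# Assumed window: 2020 to 2024, i.e. four years.
moore_prediction = moore_factor(4)

# Training time that an exact 50x speedup would imply.
minutes_at_50x = 167 / 50

print(f"observed speedup: at least {observed_lower_bound:.1f}x")
print(f"two-year doubling over four years: {moore_prediction:.0f}x")
print(f"time implied by 50x: {minutes_at_50x:.2f} minutes")
```

A strict two-year doubling over four years gives 4x, close to the 5x cited, while a 50x gain corresponds to a training time of about 3.3 minutes, consistent with "under four minutes."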

Software Efficiency and the Collapse of Costs

While hardware gains are the most visible aspect of the AI revolution, software optimization is moving at an even faster pace. Research from Epoch AI indicates that the amount of compute required to reach a specific performance threshold halves every eight months. This is significantly faster than the 18-to-24-month doubling cycle usually associated with Moore’s Law.
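To compare the two rates on the same footing, this Python sketch converts a halving or doubling period into an improvement factor over a given window. The function name `gain` is hypothetical, and the sketch assumes both trends are smooth exponentials.

```python
def gain(months: float, period_months: float) -> float:
    """Improvement factor after `months` if efficiency doubles every `period_months`."""
    return 2 ** (months / period_months)

# Algorithmic efficiency: compute needed halves every 8 months (figure from the text).
software_per_year = gain(12, 8)
software_two_years = gain(24, 8)

# Hardware: one doubling every 24 months (the slow end of the cited range).
hardware_two_years = gain(24, 24)

print(f"software gain per year: {software_per_year:.2f}x")
print(f"software gain over two years: {software_two_years:.0f}x")
print(f"hardware gain over two years: {hardware_two_years:.0f}x")
```

Over a two-year window, an eight-month halving period compounds to 8x, compared with 2x for a 24-month doubling cycle.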

The economic implications of these efficiencies are transformative. For some recent models, the cost of deployment and "serving" the AI to users has collapsed by a factor of 900 on an annualized basis. This radical reduction in cost suggests that AI is transitioning from an expensive, specialized tool into a ubiquitous utility. As the cost per query drops, the barriers to integrating high-level intelligence into every facet of digital life—from search engines to enterprise resource planning—virtually disappear.

Addressing the Energy and Sustainability Challenge

The primary criticism of this exponential growth is its environmental and logistical footprint. A single AI rack can draw 120 kilowatts of power, roughly the electricity demand of 100 homes. The projected 200-gigawatt requirement by 2030 presents a massive challenge for global power grids.
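For a sense of scale, the Python sketch below turns the rack and grid figures above into rack and household equivalents. It assumes, purely for illustration, that the full 200 gigawatts goes to racks at 120 kilowatts each, ignoring cooling and other overhead; the constant names are hypothetical.

```python
# Figures from the text.
RACK_KW = 120.0        # power draw of one AI rack
HOMES_PER_RACK = 100   # homes with equivalent demand
PROJECTED_GW = 200.0   # projected requirement by 2030

# Average household demand implied by the comparison.
kw_per_home = RACK_KW / HOMES_PER_RACK

# Simplifying assumption: all projected power feeds racks (1 GW = 1e6 kW).
racks = PROJECTED_GW * 1e6 / RACK_KW
homes_equivalent = racks * HOMES_PER_RACK

print(f"implied demand per home: {kw_per_home:.1f} kW")
print(f"rack equivalents: {racks / 1e6:.2f} million")
print(f"household equivalents: {homes_equivalent / 1e6:.0f} million")
```

Under that simplification, 200 gigawatts corresponds to roughly 1.7 million racks, or the demand of about 167 million homes at 1.2 kilowatts each.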

However, proponents of the "compute explosion" argue that this energy demand is colliding with another set of exponential trends: the falling costs of renewable energy. Over the last 50 years, the cost of solar photovoltaic energy has dropped by a factor of nearly 100. Simultaneously, battery storage prices have decreased by 97% over the last three decades. This suggests a pathway where the massive energy requirements of AI clusters could drive a faster transition to green energy infrastructure, as tech giants invest heavily in nuclear, solar, and wind projects to secure their own power supply. Microsoft, for instance, has committed to becoming carbon negative by 2030, even as its AI infrastructure expands.
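The long-run cost declines quoted above can be restated as average annual rates. This Python sketch treats "nearly 100" as exactly 100 and "three decades" as exactly 30 years, and assumes a constant yearly rate of decline; `annual_decline` is a hypothetical helper.

```python
def annual_decline(remaining_fraction: float, years: float) -> float:
    """Constant yearly price drop that leaves `remaining_fraction` after `years`."""
    return 1 - remaining_fraction ** (1 / years)

# Solar PV: cost down by a factor of ~100 over 50 years (treated as exactly 100).
solar = annual_decline(1 / 100, 50)

# Batteries: price down 97% over ~30 years, so 3% of the original price remains.
battery = annual_decline(1 - 0.97, 30)

print(f"solar: about {solar * 100:.1f}% cheaper each year")
print(f"batteries: about {battery * 100:.1f}% cheaper each year")
```

Under these assumptions, solar costs fell by just under 9% per year on average and battery prices by about 11% per year.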

From Chatbots to Semiautonomous Agents

The ultimate goal of this compute ramp is a shift in the fundamental nature of artificial intelligence. The current era is defined by "chatbots"—systems that respond to prompts with text or images. The next phase, enabled by the 1,000x increase in effective compute expected by 2028, will see the rise of "human-level agents."

These agents are envisioned as semiautonomous systems capable of sustained, complex labor. Unlike a chatbot that answers a single question, an AI agent could manage a project for several months, write and debug code autonomously, negotiate contracts, or oversee complex logistics chains. This transition marks the move from "AI as an assistant" to "AI as a workforce." Suleyman and other industry leaders believe this will lead to "cognitive abundance," where the marginal cost of high-level problem-solving approaches zero.

Analysis of Broader Implications and Industry Impact

The implications of this shift extend far beyond the technology sector. If cognitive labor becomes abundant and inexpensive, every industry built on information processing—law, finance, medicine, and education—will undergo a structural transformation.

In the legal field, agents could perform discovery and contract analysis at a scale and speed unattainable by human teams. In medicine, AI could simulate millions of protein folds or drug interactions in days rather than years. However, this "cognitive abundance" also raises significant questions regarding labor displacement and the economic value of human expertise. If an AI "team" can deliberate and execute projects with minimal human intervention, the traditional structures of corporate hierarchy and professional services may become obsolete.

The capital is already being deployed to realize this vision. Projects involving $100 billion data center clusters and 10-gigawatt power draws are no longer theoretical; ground is being broken for these facilities today. While skeptics continue to predict a "wall" in AI development due to data scarcity or energy limits, the data suggests that the combined forces of hardware innovation, software efficiency, and capital investment are successfully navigating these obstacles.

The compute explosion is not a temporary bubble but the foundational technological story of the 21st century. As the world moves toward 2030, the divide between those who view the world linearly and those who understand exponential trends will define the economic and geopolitical winners of the new era. In the words of Suleyman, we are currently only in the "foothills" of this transition. The true peak of the compute revolution remains on the horizon, promising a world where intelligence is no longer a scarce resource but a fundamental, abundant utility.