Docker for Python and Data Projects: A Beginner’s Guide

The global software development landscape has undergone a seismic shift toward containerization, a movement largely driven by the need for consistency across increasingly complex computing environments. Python, currently one of the most widely used programming languages according to the TIOBE Index and Stack Overflow Developer Surveys, has become the backbone of modern data science, machine learning, and artificial intelligence. However, the flexibility of Python often introduces significant "dependency debt." Discrepancies between local development environments, testing servers, and production clouds frequently lead to the "it works on my machine" phenomenon, a bottleneck that costs enterprises billions in lost productivity and delayed deployments.

Docker has emerged as the industry-standard solution to this volatility. By packaging code, runtime, system tools, and libraries into a standardized unit called a container, Docker ensures that applications behave identically regardless of the underlying infrastructure. For data professionals, this technology is no longer optional; it is a foundational requirement for reproducible research and scalable production pipelines.

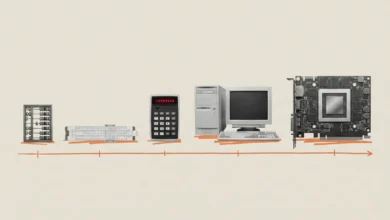

The Evolution of the Containerization Paradigm

The history of containerization predates Docker, with technologies like chroot, FreeBSD Jails, and Solaris Zones providing early isolation. However, it was the 2013 launch of Docker by Solomon Hykes and the team at dotCloud that democratized the technology. By introducing an easy-to-use CLI and a central repository (Docker Hub), Docker transitioned containers from a niche Linux kernel feature into a mainstream development tool.

In the context of data science, the need for Docker is particularly acute. A typical data project involves a specific version of Python, numerous third-party libraries like Pandas or Scikit-learn, and often system-level dependencies like C++ compilers or CUDA libraries for GPU acceleration. Traditional virtual environments (venv or conda) isolate packages but not the operating system underneath them, so they are often insufficient when moving code between different operating systems. Docker addresses this by building a lightweight, standalone package that includes everything the software needs to run.

Core Architecture: Images and Containers

To master Docker for data projects, one must distinguish between the "image" and the "container." An image is a read-only template that contains the instructions for creating a Docker container. It is built using a text file called a Dockerfile. A container is a runnable instance of an image.

Industry data suggests that the adoption of containerization has led to a 78% increase in the frequency of software releases among high-performing DevOps teams. For data scientists, this translates to faster iteration on models and more reliable deployment of analytical scripts.

Case Study 1: Standardizing Data Cleaning Scripts

The most fundamental use case for Docker in data science is the execution of a standalone script. Consider a data cleaning process that utilizes Pandas to ingest raw CSV data, perform deduplication, and handle missing values.
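As a rough illustration of such a script, the following sketch deduplicates rows and handles missing values with Pandas. The column names (order_id, amount), the fill value, and the inline CSV are assumptions made for the example; a real clean_data.py would read from and write to files under data/.

```python
import io

import pandas as pd


def clean(df: pd.DataFrame) -> pd.DataFrame:
    """Drop exact duplicate rows, then handle missing values."""
    df = df.drop_duplicates()
    # Assumption: a row without an order_id is unusable, so it is dropped.
    df = df.dropna(subset=["order_id"])
    # Assumption: a missing amount is treated as 0.0.
    df = df.fillna({"amount": 0.0})
    return df.reset_index(drop=True)


# Inline CSV stands in for a raw file so the sketch runs on its own.
RAW_CSV = """order_id,amount
1,10.5
1,10.5
2,
,7.0
"""

raw = pd.read_csv(io.StringIO(RAW_CSV))
cleaned = clean(raw)
print(cleaned)
```

Keeping the cleaning logic in a pure function like clean makes it easy to test outside the container before the image is built.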

Project Organization and Version Pinning

A professional data project requires a structured directory:

- Dockerfile: The blueprint for the environment.

- requirements.txt: A list of pinned dependencies.

- clean_data.py: The logic for data processing.

- data/: A local directory for raw and processed assets.

Version pinning is a critical best practice. Instead of listing pandas in a requirements file, developers should specify pandas==2.2.0. This prevents "silent failures" where a library update changes a function’s behavior, leading to inconsistent data processing results.
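One way to enforce this habit is a small check that flags any requirement not pinned with ==. The sketch below is a simplified illustration: find_unpinned is a hypothetical helper, and the pattern deliberately ignores pip options, environment markers, and hashes.

```python
import re

# Matches "name==version", optionally with extras such as "name[extra]==1.0".
PINNED = re.compile(r"^[A-Za-z0-9._-]+(\[[A-Za-z0-9,._-]+\])?==[^=\s]+$")


def find_unpinned(requirements_text: str) -> list[str]:
    """Return requirement entries that are not pinned with '=='."""
    unpinned = []
    for line in requirements_text.splitlines():
        # Strip inline comments and surrounding whitespace.
        entry = line.split("#", 1)[0].strip()
        if not entry:
            continue
        if not PINNED.match(entry):
            unpinned.append(entry)
    return unpinned


example = """
pandas==2.2.0
scikit-learn>=1.4   # a range, not a pin
numpy
"""
print(find_unpinned(example))
```

A check of this kind could run before the image is built, so that an unpinned entry fails fast instead of surfacing later as a behavior change.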

The Dockerfile Build Process

A typical Dockerfile for a Python script uses a "slim" base image to minimize the attack surface and reduce storage requirements. The python:3.11-slim image provides a full Python 3.11 environment but omits many of the system packages included in the standard image.

The build process follows a layered architecture: each instruction produces a layer, and a change to one layer invalidates every layer after it. By copying the requirements.txt file and running pip install before copying the rest of the application code, developers leverage Docker’s layer caching. If the script changes but the requirements do not, Docker reuses the cached library layer, which can cut rebuild times from minutes to seconds.
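The effect of instruction order on caching can be shown with a toy model. This is not Docker’s actual cache implementation; it only assumes that each layer’s key depends on the previous layer, the instruction, and the content that instruction reads. The Dockerfile steps appear as strings and describe a typical file, not one taken from a specific project.

```python
import hashlib


def layer_keys(steps: list[tuple[str, str]]) -> list[str]:
    """Return one cache key per build step.

    Each key hashes the previous key, the instruction, and the content the
    instruction depends on, so a change invalidates that step and all later ones.
    """
    keys = []
    parent = ""
    for instruction, content in steps:
        payload = f"{parent}|{instruction}|{content}".encode()
        digest = hashlib.sha256(payload).hexdigest()
        keys.append(digest)
        parent = digest
    return keys


def build(script_source: str, requirements: str) -> list[tuple[str, str]]:
    # Requirements are copied and installed before the application code.
    return [
        ("FROM python:3.11-slim", ""),
        ("COPY requirements.txt .", requirements),
        ("RUN pip install -r requirements.txt", ""),
        ("COPY . .", script_source),
    ]


first = layer_keys(build("print('v1')", "pandas==2.2.0"))
second = layer_keys(build("print('v2')", "pandas==2.2.0"))

# True means the layer from the first build can be reused in the second.
reused = [a == b for a, b in zip(first, second)]
print(reused)
```

Only the final step changes when the script is edited, so the expensive pip install step is reused. Changing the requirements string instead invalidates every step from the first COPY onward.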

Case Study 2: Serving Machine Learning Models via FastAPI

Modern data science often requires exposing trained models as web services, typically through an Application Programming Interface (API). FastAPI has become a popular framework for this task due to its high performance and native support for asynchronous programming.

Input Validation and Health Checks

When serving a model, data integrity is paramount. FastAPI uses Pydantic to validate request data against type hints. If a model expects a floating-point number for "marketing_spend" and receives a value that cannot be parsed as one, such as the string "high", the API automatically rejects the request with a 422 Unprocessable Entity error. (In Pydantic’s default mode a numeric string such as "12.5" is converted to a float rather than rejected.) This prevents malformed data from reaching the model and causing runtime exceptions.

Furthermore, professional deployments include "health check" endpoints. A simple /health route allows orchestrators like Kubernetes or AWS ECS to monitor the container’s status. If the container becomes unresponsive, the orchestrator can automatically restart it, ensuring high availability.

Self-Contained Model Deployment

In this scenario, the machine learning model (e.g., a model.pkl file) is "baked" into the Docker image. This creates a portable artifact that contains both the logic and the intelligence required for prediction. When the container starts, it launches a Uvicorn server bound to all network interfaces (0.0.0.0); a server bound only to 127.0.0.1 would be reachable solely from inside the container. With the port published, the host machine and other services can then reach the API.

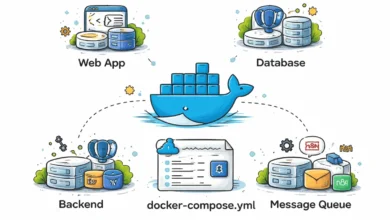

Case Study 3: Multi-Service Pipelines with Docker Compose

Real-world data applications are rarely monolithic. They often consist of a database, a data ingestion service, and a visualization dashboard. Docker Compose is a tool used to define and run multi-container applications using a YAML file.

Orchestrating Connectivity

In a multi-service pipeline, containers communicate over a dedicated virtual network. Docker Compose handles DNS resolution, allowing a "loader" script to connect to a "db" service simply by using the hostname db.

A common challenge in these setups is the "race condition," where a script tries to connect to a database before the database has finished initializing. To mitigate this, Docker Compose supports health checks together with the long form of depends_on. Declaring condition: service_healthy on the loader’s dependency makes Compose wait until the db service’s health check passes before starting the loader, which keeps the pipeline’s startup order stable.
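As an application-side complement to the Compose health check, and not a replacement for it, a loader can also retry its connection before giving up. The sketch below uses only the standard library; wait_for_port is a hypothetical helper. A successful TCP connection shows only that the port is open, not that the database is ready to serve queries.

```python
import socket
import time


def wait_for_port(host: str, port: int, timeout: float = 30.0,
                  interval: float = 1.0) -> bool:
    """Return True once a TCP connection succeeds, False after the timeout."""
    deadline = time.monotonic() + timeout
    while True:
        try:
            with socket.create_connection((host, port), timeout=interval):
                return True
        except OSError:
            if time.monotonic() >= deadline:
                return False
            time.sleep(interval)


# Demo against a throwaway local listener so the sketch runs without Docker.
server = socket.socket()
server.bind(("127.0.0.1", 0))  # port 0 lets the OS pick a free port
server.listen()
demo_port = server.getsockname()[1]
print(wait_for_port("127.0.0.1", demo_port, timeout=2.0, interval=0.1))
server.close()
```

Inside the loader container the call would instead be wait_for_port("db", 5432), using the Compose service name as the hostname and PostgreSQL’s default port.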

Persistence and Data Volumes

By default, data inside a container is ephemeral; it disappears when the container is deleted. For databases like PostgreSQL, Docker "volumes" are used to persist data on the host machine’s disk. This allows developers to stop, start, and upgrade database containers without losing the underlying data.

Case Study 4: Automated Scheduling and Cron Jobs

Data science often involves recurring tasks, such as fetching daily sales figures from an external API. While tools like Apache Airflow provide robust orchestration, they are often overkill for simple tasks. A "cron container" offers a lightweight alternative.

A cron container runs the cron daemon, which executes scripts at specified intervals. To function correctly within Docker, cron must be started in the foreground (cron -f), because a container lives only as long as its primary process. If cron detached into the background as it normally does, the primary process would return and the container would exit immediately.
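The same foreground principle can be illustrated in Python. This sketch swaps cron for a plain scheduling loop, a different technique from the cron container described above, to show a primary process that stays alive between runs. The run_every helper and its max_runs parameter are hypothetical; max_runs exists only so the example terminates.

```python
import time
from typing import Callable, Optional


def run_every(interval_seconds: float, task: Callable[[], None],
              max_runs: Optional[int] = None) -> int:
    """Call task repeatedly in the foreground, sleeping between runs.

    With max_runs=None the loop never returns, which is what keeps a
    container alive when this function is its primary process.
    """
    runs = 0
    while max_runs is None or runs < max_runs:
        task()
        runs += 1
        if max_runs is None or runs < max_runs:
            time.sleep(interval_seconds)
    return runs


# Stand-in for a job such as fetching daily sales figures.
fetched = []
completed = run_every(0.01, lambda: fetched.append("sales"), max_runs=3)
print(completed, len(fetched))
```

Unlike cron, a loop like this has no calendar syntax and drifts by the task’s own run time, so it suits simple fixed-interval jobs only.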

Industry Implications and Strategic Analysis

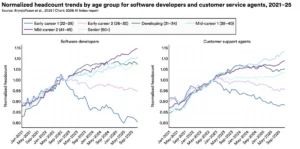

The shift toward Docker-based data workflows has profound implications for "DataOps," a collaborative data management practice focused on improving communication, integration, and automation of data flows.

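- Reproducibility: Scientific journals and enterprise audit teams increasingly demand reproducible environments. Every Docker image is identified by a cryptographic digest of its contents, so a team can confirm that the environment running a model five years from now is byte-for-byte the one used today. Identical results additionally depend on fixed input data and random seeds.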

- Scalability: Containers are the atomic unit of the cloud. By containerizing a Python script, a company can easily scale from one instance to one thousand instances using cloud-native tools.

- Security: Docker provides a layer of isolation between the application and the host operating system. By using non-root users and slim images, organizations can significantly reduce their vulnerability to cyber threats.

Chronology of Integration

- 2013: Docker is released, revolutionizing the way software is packaged.

- 2014: The Kubernetes project is launched, providing a way to manage containers at scale.

- 2015-2017: Major data libraries like TensorFlow and PyTorch begin offering official Docker images, signaling a shift in the data science community.

- 2020-Present: "Container-first" development becomes the standard for cloud-native data platforms like Databricks, Snowflake, and Google Cloud Vertex AI.

Conclusion: When to Adopt Containerization

While Docker offers immense benefits, it is not the right tool for every task. For quick exploratory data analysis (EDA) in a local Jupyter Notebook or simple scripts with no external dependencies, the overhead of Docker may outweigh the benefits. However, for any project destined for collaboration or production, Docker is essential.

As the data industry matures, the boundary between "data science" and "software engineering" continues to blur. Mastering Docker allows data professionals to bridge this gap, so that their work moves smoothly from a local experiment to production at scale. By adopting the patterns of pinned dependencies, layered builds, and orchestrated services, developers can eliminate the friction of environment management and focus on the core value: extracting intelligence from data.