AWS Launches Claude Opus 4.7 on Amazon Bedrock to Drive Advanced Enterprise Automation and Intelligent Reasoning

Amazon Web Services has announced the general availability of Claude Opus 4.7, the latest and most sophisticated large language model from AI safety and research company Anthropic, on the Amazon Bedrock platform. This release represents a significant milestone in the ongoing partnership between the cloud computing giant and the AI pioneer, aimed at providing enterprise customers with the tools necessary to build and scale highly intelligent, agentic applications. Claude Opus 4.7 is designed to surpass its predecessors in critical areas such as complex software engineering, long-running autonomous agents, and high-level professional knowledge work.

The integration of Claude Opus 4.7 into Amazon Bedrock leverages a next-generation inference engine specifically engineered to handle the rigorous demands of production-grade workloads. This infrastructure enhancement introduces sophisticated scheduling and scaling logic that dynamically manages compute capacity. By optimizing resource allocation, the engine ensures high availability for steady-state enterprise operations while simultaneously maintaining the elasticity required to accommodate sudden spikes in demand from rapidly scaling services.

A New Benchmark for Enterprise Intelligence

The introduction of Claude Opus 4.7 marks a strategic evolution in the Claude model family. While previous iterations established benchmarks for speed and safety, Opus 4.7 focuses on depth of reasoning and the ability to navigate ambiguity. According to performance data shared by Anthropic, the model demonstrates marked improvements in workflows that require sustained logical consistency, such as agentic coding and multi-step visual understanding.

One of the standout features of this model is its enhanced ability to follow complex, multi-layered instructions. In production environments where AI is tasked with autonomous decision-making—often referred to as "agentic" behavior—precision is paramount. Opus 4.7 is engineered to be more thorough in its problem-solving approach, reducing the likelihood of hallucinations or logical shortcuts that can occur in less advanced models. This makes it particularly suitable for industries such as finance, legal services, and healthcare, where accuracy and nuance are non-negotiable.

Technical Evolution and the Next-Generation Inference Engine

The deployment of Claude Opus 4.7 on Amazon Bedrock is supported by a fundamental architectural shift in how AWS handles model inference. The "next generation inference engine" mentioned by AWS is designed to solve one of the most persistent challenges in generative AI deployment: balancing cost-efficiency with performance reliability.

Traditional shared inference deployments often struggle with "noisy neighbor" effects or unpredictable latency during peak usage. The Bedrock engine counters this with new scheduling logic that prioritizes consistent throughput for enterprise customers. The architecture also emphasizes "zero operator access": customer prompts and model responses are never visible to Anthropic or AWS operators. This level of data confidentiality is critical for organizations operating under strict regulatory frameworks such as GDPR or HIPAA.
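The scheduling described above runs on the server side and is not something callers configure. Clients of any shared endpoint should still expect occasional throttling during demand spikes, so the sketch below shows a generic client-side complement: exponential backoff with "full jitter". It is not a description of how the Bedrock engine works. The retryable error codes are assumptions (Bedrock Runtime reports throttling as `ThrottlingException`; treating `ServiceUnavailableException` as retryable should be checked against the API reference), and the function names are hypothetical.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")

# Error codes retried by this sketch. Bedrock Runtime reports throttling as
# "ThrottlingException"; treating "ServiceUnavailableException" as retryable
# is an assumption worth checking against the API reference.
RETRYABLE_CODES = {"ThrottlingException", "ServiceUnavailableException"}


def full_jitter_delay(attempt: int, base: float = 0.5, cap: float = 20.0,
                      rng: Optional[random.Random] = None) -> float:
    """Seconds to wait before retry number `attempt` (0-based), drawn
    uniformly from [0, min(cap, base * 2**attempt)] ("full jitter")."""
    rng = rng or random.Random()
    return rng.uniform(0.0, min(cap, base * 2 ** attempt))


def error_code(exc: Exception) -> Optional[str]:
    """Return the AWS error code carried by a botocore-style ClientError."""
    response = getattr(exc, "response", None)
    if isinstance(response, dict):
        return response.get("Error", {}).get("Code")
    return None


def call_with_backoff(fn: Callable[[], T], max_attempts: int = 5,
                      sleep: Callable[[float], None] = time.sleep,
                      rng: Optional[random.Random] = None) -> T:
    """Call fn(); on a retryable error, sleep with jittered backoff and retry."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return fn()
        except Exception as exc:
            last_attempt = attempt == max_attempts - 1
            if last_attempt or error_code(exc) not in RETRYABLE_CODES:
                raise
            sleep(full_jitter_delay(attempt, rng=rng))
    raise AssertionError("unreachable: the loop always returns or raises")
```

In practice `fn` would be a lambda wrapping a Bedrock call such as `client.converse(...)`. botocore also ships built-in retry modes, configured with `botocore.config.Config(retries={"mode": "adaptive"})`, which may be the simpler choice; the hand-rolled version only makes the backoff arithmetic visible.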

Adaptive Thinking: Dynamic Resource Allocation

A key innovation accompanying the release of Claude Opus 4.7 is the "Adaptive Thinking" capability. This feature allows the model to dynamically allocate a "thinking token budget" based on the complexity of a given request. In practical terms, this means the model can spend more time "ruminating" on a complex architectural design or a difficult debugging task while remaining concise and rapid for simpler queries.

This approach addresses the "one-size-fits-all" limitation of previous AI models. By allowing the model to determine the necessary depth of reasoning required for a task, developers can achieve a better balance between response quality and operational cost. For complex reasoning tasks, this results in a more thorough analysis; for routine tasks, it ensures that the system remains responsive and efficient.
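The budget allocation happens inside the model, but callers opt into it through the request. The sketch below builds an Anthropic Messages API body for Bedrock's InvokeModel call and separates thinking blocks from the final answer in the response. Two details are assumptions not taken from this article and should be verified against current AWS and Anthropic documentation: the `{"type": "adaptive"}` thinking setting and the placeholder model ID `anthropic.claude-opus-4-7`. The fixed-budget `{"type": "enabled", "budget_tokens": ...}` shape is the one used by earlier Claude extended-thinking models and appears only for contrast; whether Opus 4.7 still accepts it is not stated here.

```python
import json
from typing import Any, Dict, List, Optional

# Placeholder model ID (assumption): copy the exact Claude Opus 4.7 model or
# inference-profile ID from the Bedrock console for your region.
MODEL_ID = "anthropic.claude-opus-4-7"

# Assumption: adaptive thinking is requested with {"type": "adaptive"}, letting
# the model size its own thinking budget per request.
ADAPTIVE: Dict[str, Any] = {"type": "adaptive"}


def fixed_budget(budget_tokens: int) -> Dict[str, Any]:
    """Extended-thinking config with a caller-chosen cap (the non-adaptive shape)."""
    return {"type": "enabled", "budget_tokens": budget_tokens}


def build_messages_body(prompt: str, max_tokens: int = 4096,
                        thinking: Optional[Dict[str, Any]] = None) -> str:
    """Serialize an Anthropic Messages API request body for Bedrock InvokeModel."""
    body: Dict[str, Any] = {
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    if thinking is not None:
        body["thinking"] = thinking
    return json.dumps(body)


def split_response(response_body: Dict[str, Any]) -> Dict[str, List[str]]:
    """Separate thinking blocks from final text blocks in a Messages API response."""
    out: Dict[str, List[str]] = {"thinking": [], "text": []}
    for block in response_body.get("content", []):
        if block.get("type") == "thinking":
            out["thinking"].append(block.get("thinking", ""))
        elif block.get("type") == "text":
            out["text"].append(block.get("text", ""))
    return out


def invoke(prompt: str, region: str = "us-east-1", **kwargs: Any) -> Dict[str, Any]:
    """Send the request with boto3 (requires AWS credentials and model access)."""
    import boto3  # imported lazily so the helpers above work without boto3
    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.invoke_model(
        modelId=MODEL_ID,
        body=build_messages_body(prompt, **kwargs),
        contentType="application/json",
        accept="application/json",
    )
    return json.loads(response["body"].read())
```

A typical call would be `split_response(invoke("Review this design", thinking=ADAPTIVE))["text"]`. With the adaptive setting the model decides how much to think within the request's limits, whereas `fixed_budget` pins the same thinking cap on every request, which is the one-size-fits-all trade-off described above.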

The Evolution of the AWS and Anthropic Partnership

The launch of Claude Opus 4.7 is the latest chapter in a multi-year strategic collaboration between AWS and Anthropic. This partnership, which includes a total investment of $8 billion from Amazon, positions AWS as the primary cloud provider for Anthropic’s mission-critical workloads. The collaboration is built on the premise of combining Anthropic’s leadership in AI safety and research with the unparalleled scale and reliability of AWS infrastructure.

Historically, the Claude 3 and 3.5 series set high standards for the industry, but the transition to the version 4 series, and specifically the refinement found in 4.7, signals a shift toward "agentic" AI. This refers to AI systems that don’t just answer questions but can execute tasks, interact with other software tools, and manage long-running processes with minimal human intervention. By hosting these models on Bedrock, AWS provides the "plumbing"—the security, API management, and data integration—needed to turn these models into functional business tools.
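To make "interacting with other software tools" concrete, here is a minimal agent loop over the tool-use protocol of the Bedrock Converse API: the model requests a tool, the application runs it, and the result goes back until the model produces a final answer. The `get_order_status` tool, its schema, and the `send` callback are hypothetical. `send` is injected so the loop can be exercised without AWS credentials; in real use it would wrap the `bedrock-runtime` client's `converse` call with `toolConfig=TOOL_CONFIG`.

```python
from typing import Any, Callable, Dict, List

# One tool the model may call; the name and schema are hypothetical.
TOOL_CONFIG: Dict[str, Any] = {
    "tools": [{
        "toolSpec": {
            "name": "get_order_status",
            "description": "Look up the shipping status of an order by ID.",
            "inputSchema": {"json": {
                "type": "object",
                "properties": {"order_id": {"type": "string"}},
                "required": ["order_id"],
            }},
        }
    }]
}


def get_order_status(order_id: str) -> Dict[str, str]:
    """Hypothetical local tool; a real one would query a business system."""
    return {"order_id": order_id, "status": "shipped"}


TOOLS: Dict[str, Callable[..., Dict[str, Any]]] = {"get_order_status": get_order_status}


def run_agent(send: Callable[[List[Dict[str, Any]]], Dict[str, Any]],
              prompt: str, max_turns: int = 5) -> str:
    """Call the model, execute any requested tools, and return the final text.

    `send` receives the message list and returns a Converse-shaped response.
    """
    messages: List[Dict[str, Any]] = [{"role": "user", "content": [{"text": prompt}]}]
    for _ in range(max_turns):
        response = send(messages)
        message = response["output"]["message"]
        messages.append(message)
        if response.get("stopReason") != "tool_use":
            return "".join(b["text"] for b in message["content"] if "text" in b)
        results = []
        for block in message["content"]:
            if "toolUse" not in block:
                continue
            call = block["toolUse"]
            handler = TOOLS.get(call["name"])
            if handler is None:
                # Report unknown tools back to the model instead of crashing.
                result = {"toolUseId": call["toolUseId"], "status": "error",
                          "content": [{"text": "unknown tool " + call["name"]}]}
            else:
                result = {"toolUseId": call["toolUseId"], "status": "success",
                          "content": [{"json": handler(**call["input"])}]}
            results.append({"toolResult": result})
        messages.append({"role": "user", "content": results})
    raise RuntimeError("agent did not finish within max_turns")
```

The `max_turns` cap is a simple guard against runaway loops; long-running agents in production would typically add logging, timeouts, and error handling around each tool call.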

Developer Integration and Implementation Paths

AWS has streamlined the process for developers to transition to Claude Opus 4.7. The model is accessible through several interfaces, ensuring that both experimental and production-ready teams can integrate the technology quickly.

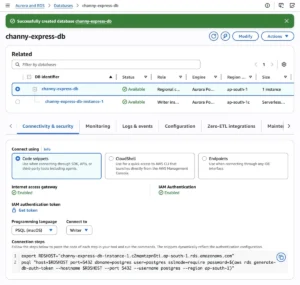

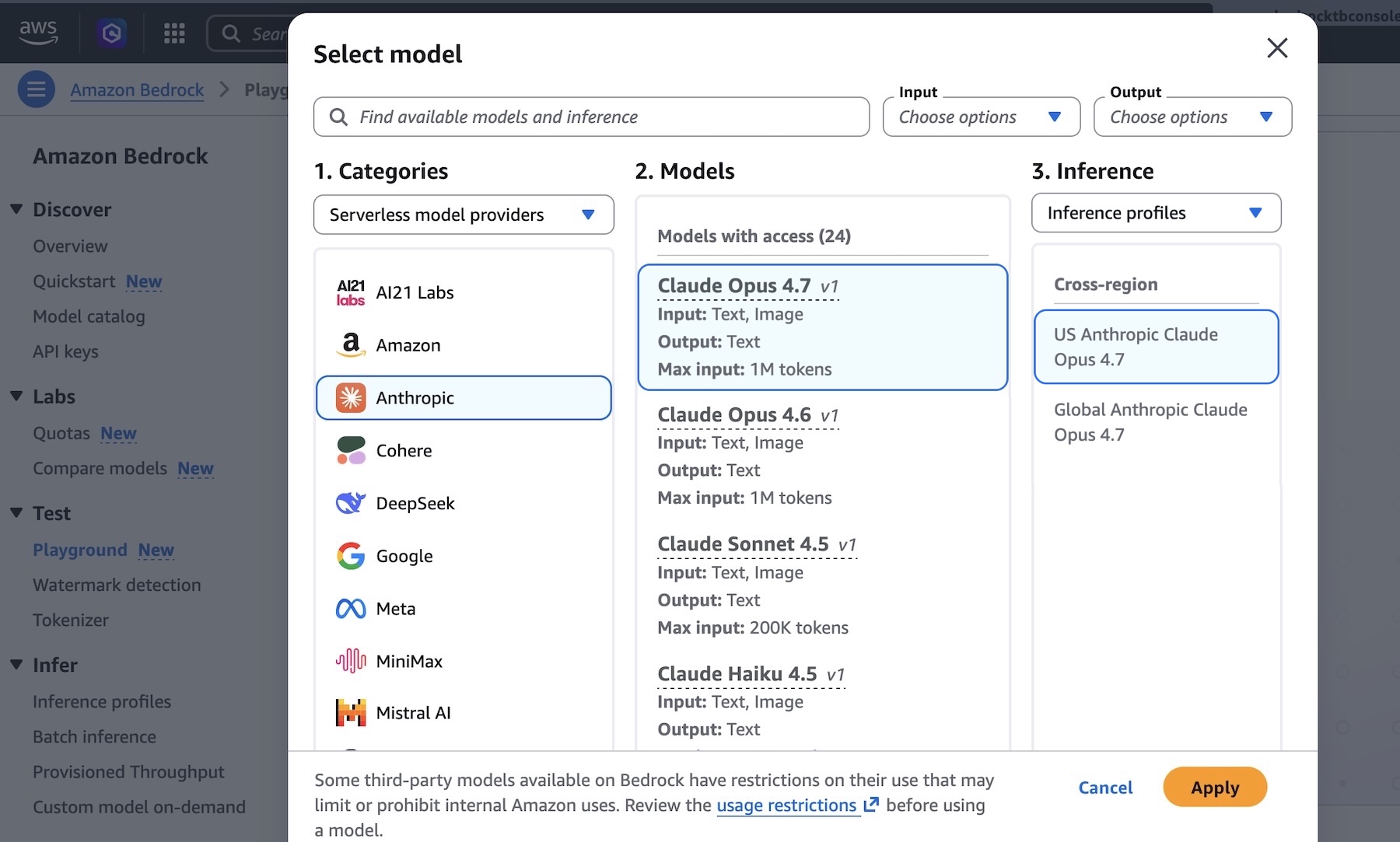

The Amazon Bedrock Console

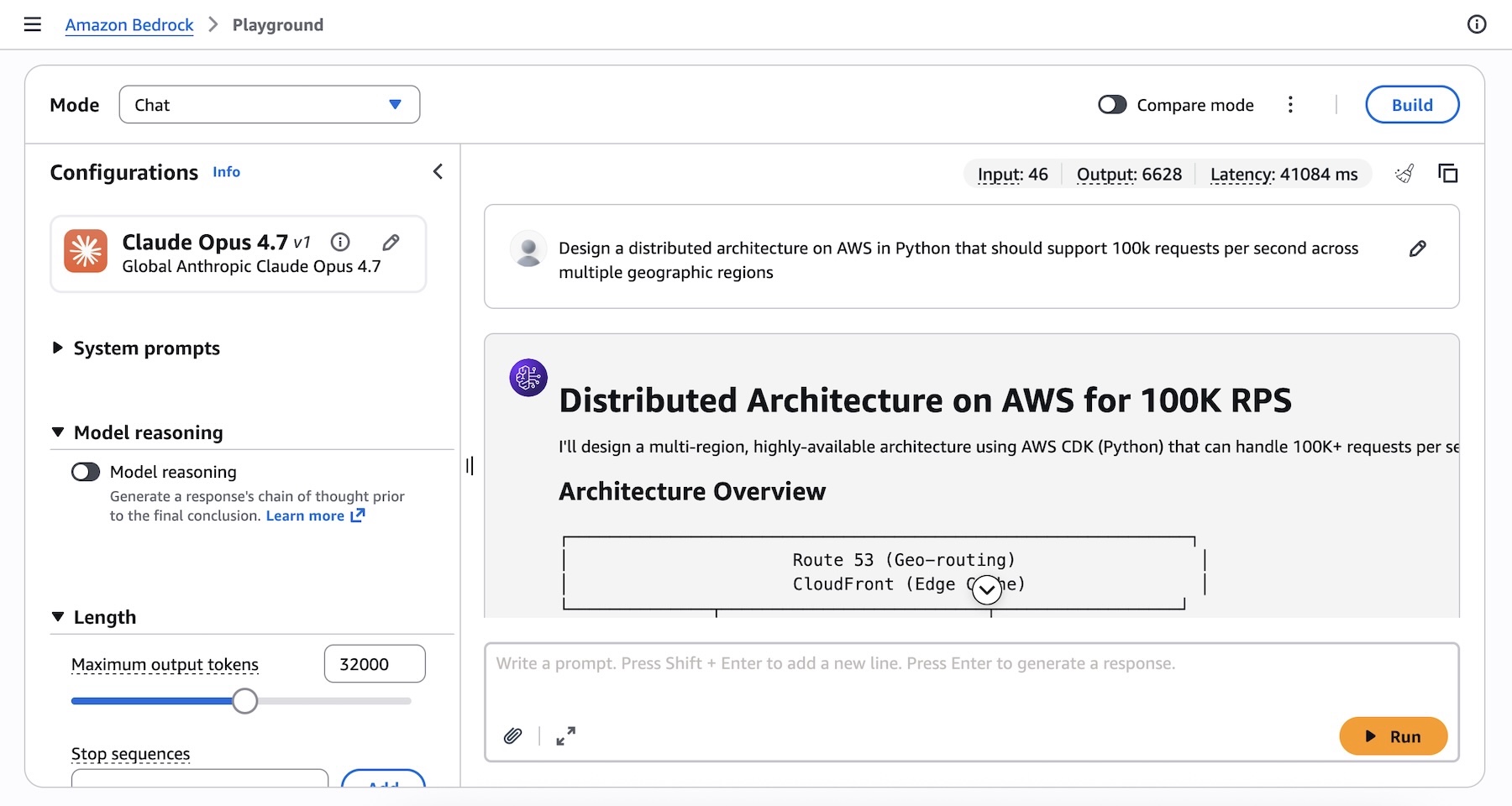

For rapid testing and prototyping, the Amazon Bedrock console offers a "Playground" environment. Users can select Claude Opus 4.7 from the model menu to test prompts and evaluate the model’s reasoning capabilities in real time. This environment is particularly useful for prompt engineering, as the transition from Opus 4.6 to 4.7 may require subtle adjustments to harness the model’s full potential.

Programmatic Access and SDKs

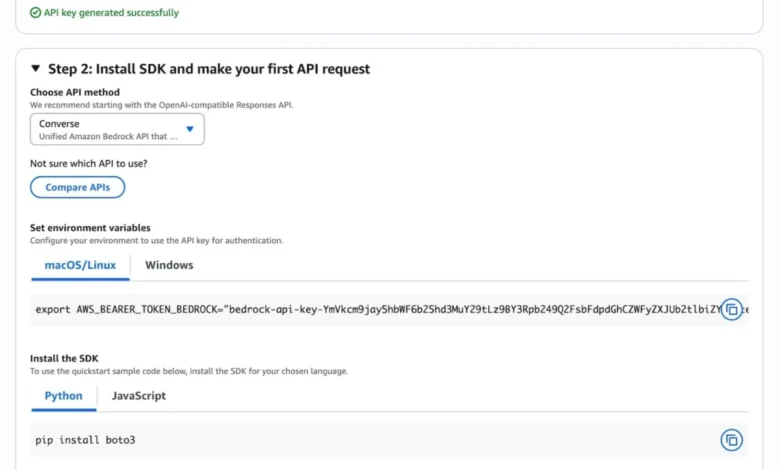

For production environments, AWS supports multiple API integration methods:

- Anthropic Messages API: Developers can call the `bedrock-runtime` endpoint through the Anthropic SDK, or use the `bedrock-mantle` endpoints.

- AWS SDK and CLI: The model supports the standard InvokeModel and Converse APIs, allowing integration into existing AWS-based applications using Python, JavaScript, or the AWS Command Line Interface (CLI).

- OpenAI-Compatible API: To lower the barrier for migration, Bedrock now offers an OpenAI-compatible Responses API, allowing teams to repurpose existing codebases with minimal modification.
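The first two paths in the list above can be sketched side by side in Python. The request and response shapes follow the boto3 `converse` call and the Anthropic SDK's `AnthropicBedrock` client; the model ID is a placeholder assumption, so copy the exact Opus 4.7 model or inference-profile ID from the Bedrock console. Both network calls import their SDKs lazily, so the pure helpers run without AWS credentials.

```python
from typing import Any, Dict, List

# Placeholder (assumption): replace with the exact Opus 4.7 model or
# inference-profile ID shown in the Bedrock console.
MODEL_ID = "anthropic.claude-opus-4-7"


def converse_request(prompt: str, max_tokens: int = 1024) -> Dict[str, Any]:
    """Keyword arguments for bedrock-runtime Converse (the model-agnostic API)."""
    return {
        "modelId": MODEL_ID,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens},
    }


def converse_text(response: Dict[str, Any]) -> str:
    """Concatenate the text blocks of a Converse response, skipping other block types."""
    blocks: List[Dict[str, Any]] = response["output"]["message"]["content"]
    return "".join(b["text"] for b in blocks if "text" in b)


def ask_with_converse(prompt: str, region: str = "us-east-1") -> str:
    """AWS SDK path: Converse via boto3 (needs credentials and model access)."""
    import boto3  # lazy import: the pure helpers above don't need boto3
    client = boto3.client("bedrock-runtime", region_name=region)
    return converse_text(client.converse(**converse_request(prompt)))


def ask_with_anthropic_sdk(prompt: str, region: str = "us-east-1") -> str:
    """Anthropic SDK path: Messages API on Bedrock (pip install "anthropic[bedrock]")."""
    from anthropic import AnthropicBedrock  # lazy import
    client = AnthropicBedrock(aws_region=region)
    message = client.messages.create(
        model=MODEL_ID,
        max_tokens=1024,
        messages=[{"role": "user", "content": prompt}],
    )
    return "".join(b.text for b in message.content if b.type == "text")
```

Converse keeps the request shape the same across Bedrock models, which can simplify switching between model versions, while the Anthropic SDK path keeps Anthropic's native Messages request format.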

Security and Privacy in the Age of Generative AI

As generative AI becomes more integrated into core business processes, the focus on security has intensified. AWS and Anthropic have addressed these concerns by ensuring that Claude Opus 4.7 on Bedrock adheres to the highest standards of data protection.

The "Zero Operator Access" policy is a cornerstone of this commitment. In many cloud environments, troubleshooting or optimization might occasionally involve human review of anonymized data. AWS has eliminated this for Bedrock’s next-gen engine, ensuring that sensitive corporate data remains within the customer’s controlled environment. Additionally, data used during inference is not used to train the underlying foundation models, protecting the intellectual property of the enterprises using the service.

Global Availability and Regional Rollout

At launch, Claude Opus 4.7 is available in several key AWS regions, reflecting the global demand for advanced AI capabilities. These regions include:

- US East (N. Virginia): Serving the primary North American market.

- Asia Pacific (Tokyo): Supporting the growing AI ecosystem in the APAC region.

- Europe (Ireland and Stockholm): Providing low-latency access and compliance support for European enterprises.

AWS has indicated that further regional expansions are planned, as the infrastructure for the next-generation inference engine continues to be deployed across the global AWS backbone.
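The launch regions above map to standard AWS region codes. A small helper can route requests to the first preferred launch region, and because further regions are planned, the Bedrock control-plane `list_foundation_models` call is a better way to confirm what a region currently offers than a hard-coded list. The helper names are hypothetical, and filtering on an `opus` substring in the model ID is an assumption about naming.

```python
from typing import Any, Dict, Iterable, List

# Launch regions named in this article, keyed by AWS region code.
LAUNCH_REGIONS: Dict[str, str] = {
    "us-east-1": "US East (N. Virginia)",
    "ap-northeast-1": "Asia Pacific (Tokyo)",
    "eu-west-1": "Europe (Ireland)",
    "eu-north-1": "Europe (Stockholm)",
}


def pick_region(preferred: Iterable[str]) -> str:
    """Return the first region in `preferred` that appears in LAUNCH_REGIONS."""
    for region in preferred:
        if region in LAUNCH_REGIONS:
            return region
    raise ValueError("none of the preferred regions is a launch region")


def opus_model_ids(summaries: List[Dict[str, Any]]) -> List[str]:
    """Keep Anthropic model IDs containing "opus" (naming assumption), sorted."""
    return sorted(
        s["modelId"] for s in summaries
        if s.get("modelId", "").startswith("anthropic.") and "opus" in s["modelId"]
    )


def opus_models_in(region: str) -> List[str]:
    """Query the Bedrock control plane for Opus models listed in `region`."""
    import boto3  # lazy import; requires AWS credentials
    bedrock = boto3.client("bedrock", region_name=region)
    summaries = bedrock.list_foundation_models()["modelSummaries"]
    return opus_model_ids(summaries)
```

For example, a European deployment calling `pick_region(["eu-central-1", "eu-west-1"])` gets `"eu-west-1"`, because Frankfurt (`eu-central-1`) is not among the launch regions.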

Market Analysis and Industry Implications

The release of Claude Opus 4.7 comes at a time of intense competition in the generative AI space. With competitors like OpenAI (backed by Microsoft) and Google constantly updating their flagship models (GPT and Gemini, respectively), the availability of Opus 4.7 on Bedrock is a significant move for AWS to maintain its lead in the cloud infrastructure market.

Industry analysts suggest that the "agentic" capabilities of Opus 4.7 will be the primary driver of adoption. Organizations are moving beyond simple chatbots and looking for AI that can handle "long-running tasks," such as managing a software development lifecycle, conducting deep-dive market research, or automating complex supply chain logistics. The ability of Opus 4.7 to work through ambiguity and follow precise instructions makes it a formidable tool for these high-stakes applications.

Furthermore, the pricing structure on Amazon Bedrock—which includes options for On-Demand, Provisioned Throughput, and Batch inference—allows businesses to scale their AI costs in line with their usage. This flexibility is essential for startups and large enterprises alike as they navigate the transition from AI experimentation to full-scale deployment.

Conclusion and Future Outlook

The addition of Claude Opus 4.7 to Amazon Bedrock represents more than just an incremental update; it is a signal of the maturation of the generative AI market. By focusing on deep reasoning, agentic performance, and enterprise-grade security, AWS and Anthropic are providing the foundation for the next wave of digital transformation.

As organizations begin to integrate Opus 4.7 into their workflows, the focus will likely shift toward "Adaptive Thinking" and how dynamic resource allocation can optimize the cost-to-performance ratio. With the support of the next-generation inference engine and a robust suite of developer tools, Claude Opus 4.7 is positioned to become a central component of the modern enterprise technology stack. AWS encourages customers to begin testing the model in the Bedrock console today; the launch marks a new era of intelligent, autonomous, and secure cloud computing.