The Structural Shift in Enterprise AI: How the Operating Layer Is Defining the Next Era of Corporate Intelligence and Automation

Enterprise artificial intelligence is undergoing a structural shift, moving away from the high-profile competition between foundation models toward a subtler but more durable advantage: ownership of the operating layer. While public discourse remains fixated on the "model wars" (tracking the incremental reasoning scores of OpenAI’s GPT, Google’s Gemini, and Anthropic’s Claude), industry practitioners are discovering that the true value of AI in a corporate setting is determined not by the model itself, but by the infrastructure that governs how intelligence is applied, captured, and improved. This shift marks a transition from viewing AI as a generic on-demand utility to treating it as an integrated operating layer that sits between raw computational power and the complex, high-stakes work of a modern organization.

The Commoditization of Foundation Models

In the current market, model providers sell intelligence as a service. This business model is API-driven: an organization presents a problem, calls an external model, and receives a response. While these foundation models are undeniably sophisticated, they remain largely general-purpose and stateless. In a business context, "stateless" means the intelligence does not accumulate knowledge of a company’s daily operations on its own; unless the surrounding system supplies that context, each new prompt starts from scratch.

As foundation models reach a level of parity in performance, the intelligence they provide is becoming increasingly interchangeable. For a Fortune 500 company, the ability to access a high-performing LLM is no longer a competitive moat; it is a baseline requirement. The emerging consensus among enterprise strategists is that a durable advantage cannot be built on top of a commodity that is equally available to all competitors. Instead, the distinction that matters is whether an organization’s intelligence is a recurring cost or a compounding asset.

Defining the AI Operating Layer

The "operating layer" represents the combination of operational software, data capture mechanisms, feedback loops, and governance protocols that allow AI to be embedded directly into a company’s workflow. Unlike a standalone chatbot, an operating layer acts as a system of record and a system of intelligence simultaneously. It instruments the organization’s processes so that every action—every approval, every correction, and every exception—generates a signal that can be used to refine the system.

This layer comprises several critical components:

- Instrumentation: The ability to track work at a granular level, turning manual processes into machine-readable data.

- Feedback Loops: Mechanisms that capture human expert judgment to correct and guide AI outputs.

- Governance: The framework of permissions, security, and compliance that ensures AI operates within the legal and ethical boundaries of the industry.

- Integration: The deep "plumbing" that connects AI to legacy ERP (Enterprise Resource Planning) and CRM (Customer Relationship Management) systems.
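The instrumentation component described above can be sketched in a few lines. This is a minimal illustration, not a description of any real platform: the `DecisionEvent` and `EventLog` names, the field layout, and the in-memory list are all assumptions made for the example. The three event types come from the examples in the text (approvals, corrections, exceptions).

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List

# Event types taken from the examples in the text; the set itself is an assumption.
EVENT_TYPES = {"approval", "correction", "exception"}


@dataclass(frozen=True)
class DecisionEvent:
    """One machine-readable record of a human or AI action (hypothetical schema)."""
    case_id: str
    actor: str
    event_type: str
    detail: str
    recorded_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )


class EventLog:
    """In-memory stand-in for whatever store a real platform would use."""

    def __init__(self) -> None:
        self._events: List[DecisionEvent] = []

    def record(self, case_id: str, actor: str, event_type: str, detail: str) -> DecisionEvent:
        # Reject unknown event types so the log stays machine-readable.
        if event_type not in EVENT_TYPES:
            raise ValueError(f"unknown event type: {event_type!r}")
        event = DecisionEvent(case_id, actor, event_type, detail)
        self._events.append(event)
        return event

    def corrections(self) -> List[DecisionEvent]:
        # Corrections are the events where a human overrode an AI output.
        return [e for e in self._events if e.event_type == "correction"]


log = EventLog()
log.record("case-001", "analyst-7", "approval", "accepted the AI draft as written")
log.record("case-002", "analyst-7", "correction", "replaced the AI-proposed value")
print(len(log.corrections()))  # 1
```

The point of the sketch is that routine work, once recorded in a consistent shape, becomes data that a feedback loop can later consume.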

The Historical Evolution of Enterprise Automation

To understand the current shift, it is helpful to view the chronology of enterprise technology over the last three decades:

- 1990s – The Era of ERP: Organizations focused on digitizing records. Systems like SAP and Oracle became the backbone of business, turning paper processes into digital databases.

- 2000s to 2010s – The SaaS and Cloud Revolution: Software moved to the cloud, enabling better collaboration and data accessibility. The focus was on "UI-first" systems where humans used software to perform tasks more efficiently.

- 2022 to 2023 – The Generative AI Explosion: The launch of ChatGPT sparked a rush toward experimental AI pilots. Most organizations focused on "wrappers"—simple interfaces built on top of third-party APIs.

- 2024 to Present – The Shift to AI-Native Operations: Enterprises are moving away from "chat" interfaces toward "agentic" systems. The focus has moved from asking questions to executing work, requiring a deep integration into the operational fabric of the company.

The Inversion: AI Executes, Humans Adjudicate

The traditional architecture of professional services and enterprise work is built on a human-centric model: humans use software as a tool to do expert work. In this legacy setup, human judgment is the primary product, and technology is merely the medium.

An AI-native operating layer inverts this relationship. In an inverted system, the AI platform ingests the initial problem—whether it is a medical billing claim, a legal contract review, or a supply chain disruption—and applies accumulated domain knowledge to execute the task autonomously. When the system encounters an edge case or a situation requiring high-level nuance that it cannot resolve with high confidence, it routes a targeted sub-task to a human expert.
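The routing rule in this inversion can be illustrated with a small sketch. Everything here is an assumption for the sake of the example: the `route` function, the `Outcome` type, and the 0.9 cutoff are hypothetical, and a real system would need a calibrated confidence estimate rather than a number passed in by hand.

```python
from dataclasses import dataclass

# Hypothetical cutoff; the article does not specify a threshold.
CONFIDENCE_THRESHOLD = 0.9


@dataclass
class Outcome:
    case_id: str
    handled_by: str  # "ai" or "human"
    result: str


def route(case_id: str, proposed_result: str, confidence: float,
          threshold: float = CONFIDENCE_THRESHOLD) -> Outcome:
    """Let the AI complete the case when confident; otherwise escalate to an expert."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= threshold:
        return Outcome(case_id, "ai", proposed_result)
    # Low confidence: hand a targeted sub-task to a human adjudicator.
    return Outcome(case_id, "human", "pending expert review")


print(route("case-101", "approve", 0.97).handled_by)  # ai
print(route("case-102", "approve", 0.55).handled_by)  # human
```

The design choice worth noting is that the human appears only on the low-confidence branch, which is what distinguishes this arrangement from software that waits for a person to start every task.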

This is not merely a change in user interface; it is a fundamental re-engineering of work. It requires the platform to be built on a foundation of behavioral data and operational knowledge that has been accumulated over years. By having the AI lead the execution, the human moves from being a "manual laborer" of digital data to an "adjudicator" of complex exceptions.

Why Incumbents Hold the Strategic Advantage

A prevailing narrative in Silicon Valley suggests that nimble startups will inevitably out-innovate legacy incumbents by building AI-native platforms from scratch. However, this theory assumes that AI is primarily a "model problem." In the enterprise world, AI is increasingly a "systems problem."

Incumbents who sit inside high-volume, high-stakes operations possess three compounding assets that startups cannot easily replicate:

- Proprietary Domain Data: Large organizations have decades of historical data that reflect how work is actually done, not just how it is described in textbooks.

- Embedded Workflows: They already own the "real estate" where work happens. Replacing a core operational system is a massive undertaking involving change management, security clearances, and complex integrations.

- The Human Feedback Loop: Incumbents employ the very experts whose judgment is required to train and refine AI models.

The advantage accrues to those who can convert their existing position into a learning engine. While a startup may have a better model on day one, an incumbent with an instrumented operating layer can generate thousands of labeled training examples every hour, allowing its system to eventually surpass the "general" intelligence of a startup’s model through specialized domain expertise.

The Learning Flywheel: Converting Decisions into Training Data

The concept of the "learning flywheel" is central to the durable AI advantage. Every time a skilled operator makes a decision within an instrumented platform, they generate more than just a completed task; they generate a high-value training signal.

Consider a large-scale services organization processing 50,000 cases per week. If the platform is designed to capture just three high-quality decision points per case, the organization is effectively generating 150,000 labeled examples weekly. This data stream powers supervised learning and reinforcement learning from human feedback (RLHF), teaching the system to mimic the reasoning of the company’s top performers.
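The arithmetic behind this estimate is simple enough to check directly. The sketch below uses the figures from the paragraph above; the 40-hour working week used for the hourly rate is an added assumption, not a figure from the article.

```python
def weekly_labeled_examples(cases_per_week: int, decision_points_per_case: int) -> int:
    """Labeled examples produced per week if every decision point is captured."""
    return cases_per_week * decision_points_per_case


weekly = weekly_labeled_examples(50_000, 3)
print(weekly)  # 150000

# Assumption: work is spread evenly over a 40-hour week.
HOURS_PER_WEEK = 40
print(weekly / HOURS_PER_WEEK)  # 3750.0
```

Under that working-week assumption, the volume comes to a few thousand examples per hour.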

At Ensemble, a leader in healthcare revenue cycle management, this strategy is known as "knowledge distillation." By systematically converting expert judgment into machine-readable signals, the system identifies gaps in its own knowledge, formulates targeted questions for human experts, and synthesizes the answers into a living knowledge base. This ensures that the expertise of a veteran employee does not leave the building when they retire; instead, it is codified into the platform.

Case Study: Transforming Healthcare Revenue Cycles

The impact of this approach is perhaps most visible in complex industries like healthcare. In revenue cycle management, the "work" involves navigating a labyrinth of insurance payer rules, medical codes, and regulatory requirements.

Traditional systems require human operators to manually hunt for errors in claims. An AI-native operating layer, however, can analyze thousands of analogous prior cases to identify the situational reasoning behind a successful claim. When the system encounters a new type of denial from an insurance company, it doesn’t just stop. It captures how a human expert resolves the ambiguity, learns the underlying "policy" behind that resolution, and applies it to all future cases. This results in measurable gains: higher consistency, improved throughput, and the ability for human staff to focus on the most consequential patient-facing issues.
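The capture-and-reuse behavior described above can be sketched as follows. This is a deliberately simplified stand-in: a plain lookup table keyed by denial type replaces the learning step, and the `ResolutionMemory` class, the `ask_expert` callback, and the example denial label are all hypothetical. A production system would need to generalize across similar cases rather than match exact keys.

```python
from typing import Callable, Dict


class ResolutionMemory:
    """Remembers how an expert resolved each denial type and reuses the answer."""

    def __init__(self, ask_expert: Callable[[str], str]) -> None:
        self._ask_expert = ask_expert        # hypothetical hook to a human reviewer
        self._policies: Dict[str, str] = {}  # denial type -> captured resolution
        self.expert_calls = 0

    def resolve(self, denial_type: str) -> str:
        if denial_type not in self._policies:
            # New kind of denial: escalate once and store the expert's resolution.
            self.expert_calls += 1
            self._policies[denial_type] = self._ask_expert(denial_type)
        # Known kind of denial: apply the stored resolution without escalating.
        return self._policies[denial_type]


def example_expert(denial_type: str) -> str:
    # Placeholder for a real human decision.
    return f"expert resolution for {denial_type}"


memory = ResolutionMemory(example_expert)
memory.resolve("missing-authorization")
memory.resolve("missing-authorization")
print(memory.expert_calls)  # 1
```

The second call is answered from the stored resolution, so the expert is consulted only once per new denial type in this sketch.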

Broader Economic and Operational Implications

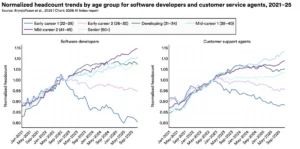

The shift toward the AI operating layer has significant implications for the broader economy and the future of work:

- From Labor Arbitrage to Intellectual Property: For decades, services companies grew by hiring more people (labor arbitrage). In the AI era, growth will come from the ability to scale "digital expertise." The value of a company will be tied to the quality of its "intelligence moat" rather than its headcount.

- The End of "Pilot Purgatory": Many enterprises are stuck in a cycle of endless AI pilots that never reach production. By focusing on the operating layer rather than the model, leaders can move toward infrastructure that provides immediate operational utility.

- The Importance of Data Governance: As systems learn from human decisions, the "provenance" of those decisions becomes critical. Organizations will need to invest heavily in ensuring their "training signals" are unbiased, accurate, and legally compliant.

Conclusion: The Future of Expertise Amplification

The ultimate goal of the enterprise AI transition is expertise amplification. The organizations that thrive will not be those that simply buy the fastest models, but those that understand their own work well enough to instrument it. By building an operating layer that captures, refines, and compounds institutional knowledge, companies can create a quality of execution that neither humans nor AI could achieve independently.

As AI shifts from a technological curiosity to a foundational piece of corporate infrastructure, the most durable edge will belong to the companies that own the "connective tissue" of their operations. In the long run, the winner of the AI race won’t be the one with the best prompt, but the one with the best system for learning from every action the organization takes.