The State of Artificial Intelligence 2026: Stanford University AI Index Reveals a Technology Outpacing Regulation and Human Oversight

The 2026 AI Index, published today by the Stanford Institute for Human-Centered Artificial Intelligence (HAI), offers the most comprehensive assessment to date of a technology evolving faster than global infrastructure, legal frameworks, and the labor market can adapt. As the annual report card for the artificial intelligence industry, the document serves as a critical corrective to the conflicting narratives of "AI whiplash": the oscillating public perception that views AI simultaneously as an economic savior, a speculative bubble, and an existential threat to employment. The data suggests a more complex reality: while AI development has not hit the predicted "intelligence wall," the environmental and social costs of maintaining this trajectory are mounting rapidly.

Technical Milestones and the Myth of the Plateau

For much of late 2024 and 2025, industry analysts debated whether the "scaling laws"—the principle that adding more data and more computing power leads to predictably better models—were reaching a point of diminishing returns. The 2026 Index effectively dismantles the plateau theory. According to the report, top-tier models continue to show significant year-over-year improvements in complex reasoning, mathematical problem-solving, and professional-level proficiency.

A primary indicator of this progress is SWE-bench Verified, a benchmark that evaluates an AI’s ability to resolve real-world software engineering issues. In 2024, the highest-performing models scored approximately 60%; by late 2025, that figure had surged to nearly 100%. Furthermore, AI systems have moved beyond mere text and image generation into high-stakes predictive modeling. In 2025, an autonomous AI system successfully generated a global weather forecast that rivaled traditional meteorological models, marking a shift from generative assistance to functional autonomy.

However, the report describes a phenomenon it calls "jagged intelligence." While AI models can now pass PhD-level science exams and solve advanced calculus, they remain remarkably deficient in physical-world interaction. Robots, despite advancements in computer vision, still fail at roughly 88% of common household tasks. This disparity points to a fundamental limitation: AI learns from the digital exhaust of human knowledge rather than embodied experience.

The Geopolitical Landscape: A Narrowing Gap

The 2026 report provides a detailed breakdown of the intense rivalry between the United States and China. For the first time since the emergence of large language models (LLMs), the performance gap between American and Chinese models has effectively closed. The Arena ranking platform, which uses blind A/B testing to evaluate model quality, shows that Chinese models like DeepSeek’s R1 and Alibaba’s latest iterations now trail American leaders such as Anthropic’s Claude and Google’s Gemini by only a razor-thin margin.
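As a rough illustration of how blind A/B votes can be turned into a leaderboard, the sketch below applies a standard Elo update to a handful of pairwise results. The article does not say which rating model Arena uses, so Elo is an assumption here, and the model names, votes, starting rating, and `k` factor are all hypothetical.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A beats B under the Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update_ratings(ratings: dict, winner: str, loser: str, k: float = 32.0) -> None:
    """Apply one blind-vote result to the ratings, in place."""
    surprise = 1.0 - expected_score(ratings[winner], ratings[loser])
    ratings[winner] += k * surprise  # winner gains more for an upset
    ratings[loser] -= k * surprise   # loser gives up the same amount


# Hypothetical votes: each tuple is (preferred model, other model).
votes = [("model_a", "model_b"), ("model_a", "model_c"), ("model_b", "model_c")]

# Assumption: every model starts from the same rating.
ratings = {"model_a": 1000.0, "model_b": 1000.0, "model_c": 1000.0}
for winner, loser in votes:
    update_ratings(ratings, winner, loser)

# Highest rating first.
leaderboard = sorted(ratings, key=ratings.get, reverse=True)
```

Because each vote moves the two ratings by equal and opposite amounts, closely matched models end up separated by only a few points, which is one way a "razor-thin margin" can show up on such a leaderboard.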

As of March 2026, the rankings are as follows:

- Anthropic (USA): Leading in reliability and reasoning.

- xAI (USA): Rapidly ascending through massive compute clusters.

- Google (USA): Dominant in multimodal integration.

- OpenAI (USA): Maintaining high performance despite internal restructuring.

- DeepSeek (China): Matching US performance at a significantly lower training cost.

- Alibaba (China): Leading in Asian-language proficiency and logistics integration.

The Index notes that while the US maintains an advantage in capital investment and the sheer number of data centers (it hosts 5,427 facilities, more than ten times its nearest competitor), China has taken the lead in the "upstream" elements of AI. China currently produces more AI research publications and patents than any other nation and dominates the field of industrial robotics.

The Environmental and Infrastructure Toll

One of the most sobering sections of the 2026 Index concerns the physical resources required to sustain the AI boom. The power demand of AI data centers has reached 29.6 gigawatts globally. To put this in perspective, that is enough to supply the entire state of New York during its peak summer demand.

The water requirements for cooling these facilities are equally staggering. The report issued a correction to previous estimates, clarifying that the annual water consumption required to run OpenAI’s GPT-4o alone is estimated to exceed the drinking water needs of 1.2 million people. As companies move toward even larger models, the strain on municipal water supplies and regional power grids has become a point of contention between tech giants and local governments.

Furthermore, the report identifies an "alarmingly fragile" supply chain. While the US hosts the bulk of the data centers, the fabrication of almost every leading AI chip remains concentrated with a single company: Taiwan Semiconductor Manufacturing Company (TSMC). Any disruption in the Taiwan Strait or a shift in trade policy could effectively halt the global AI trajectory overnight.

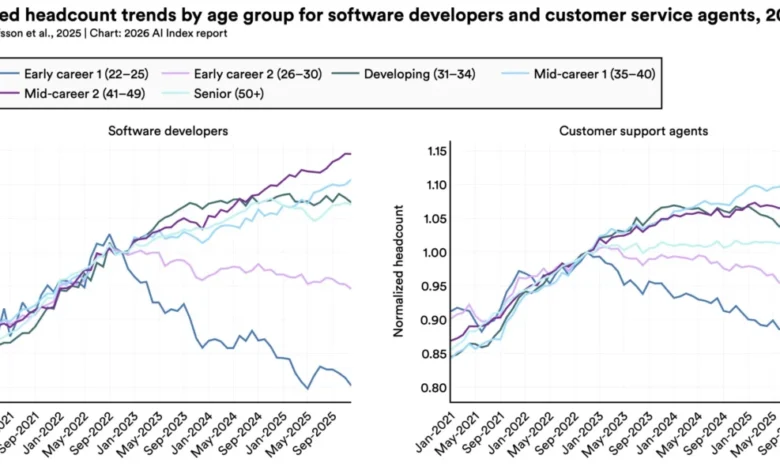

Labor Market Disruptions: The "Canaries in the Coal Mine"

The economic impact of AI is no longer a theoretical projection. The 2026 Index cites a landmark 2025 study from Stanford economists titled "Canaries in the Coal Mine," which examined the job market for entry-level professionals. The findings are stark: employment for software developers aged 22 to 25 has plummeted by nearly 20% since 2022.

While broader macroeconomic factors contribute to this decline, the report suggests that AI is the primary catalyst. Companies are increasingly using AI to handle the "junior-level" tasks (such as boilerplate coding, debugging, and basic documentation) that were traditionally used to train new hires. This has created a "missing rung" on the career ladder: senior developers become more productive through AI, but fewer junior roles are created to train the people who would eventually succeed them.

Key labor statistics highlighted in the report include:

- Productivity Gains: 26% increase in software development speed and 14% increase in customer service resolution rates.

- Workforce Reduction: 33% of organizations surveyed by McKinsey & Company expect AI to lead to a net reduction in their workforce in 2026.

- Adoption Rates: 88% of global organizations have integrated AI into at least one business function, with 80% of university students using AI for academic assistance.

A Crisis of Transparency and Testing

As the stakes of AI development rise, the industry is becoming increasingly opaque. The 2026 Index notes a troubling trend: leading AI labs like OpenAI, Google, and Anthropic have largely ceased disclosing their training datasets, parameter counts, and safety testing protocols.

Yolanda Gil, a computer scientist at the University of Southern California and coauthor of the report, expressed concern over this "black box" approach. "We don’t know a lot of things about predicting model behaviors," Gil stated, noting that the lack of transparency makes it nearly impossible for independent researchers to verify safety claims or study the long-term societal impacts of these models.

Compounding this issue, the report finds that AI benchmarks are "broken." Many of the tests used to measure AI progress are now being "gamed" by developers who include benchmark questions in the models’ training data. Furthermore, because AI is now deployed for complex, interactive work, such as "AI Agents" that can control a computer, the old static tests no longer reflect how these systems are used. As models "blow past their ceilings," the industry is left without a standardized way to measure what these systems can actually do.
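One simple way to think about this kind of contamination is to measure how much of a benchmark question appears verbatim in a training document. The sketch below does this with word-level n-gram overlap. It is an illustrative heuristic, not a method described in the report, and the function names, the 5-word window, and the sample texts are all assumptions.

```python
def ngrams(text: str, n: int) -> set:
    """Return the set of word-level n-grams in a text (lowercased)."""
    tokens = text.lower().split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def overlap_ratio(benchmark_item: str, training_doc: str, n: int = 5) -> float:
    """Fraction of the benchmark item's n-grams that also occur in the document."""
    item_grams = ngrams(benchmark_item, n)
    if not item_grams:
        return 0.0  # item is shorter than n words; nothing to compare
    return len(item_grams & ngrams(training_doc, n)) / len(item_grams)


# Hypothetical benchmark question and two hypothetical training documents.
question = "what is the capital of france and when was it founded"
leaked_doc = "forum dump what is the capital of france and when was it founded answer paris"
clean_doc = "paris is a large city on the seine river in northern france"

leaked_score = overlap_ratio(question, leaked_doc)
clean_score = overlap_ratio(question, clean_doc)
```

A score near 1.0 suggests the question was copied into the document, while a score near 0.0 suggests no verbatim overlap. A check like this misses paraphrased or translated leaks, and it requires access to the training data, which the labs named above no longer disclose.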

The Regulatory Tug-of-War

The year 2025 marked a significant turning point in the governance of AI. Globally, there was a flurry of legislative activity, though the approaches varied wildly by region.

Chronology of Key Regulatory Events (2024-2025):

- Late 2024: The European Union’s AI Act enters its first phase of enforcement, banning AI for predictive policing and emotion recognition in workplaces.

- Early 2025: Japan and South Korea pass national frameworks focusing on "Human-Centric AI," prioritizing ethical guidelines over hard prohibitions.

- Mid-2025: The United States federal government issues an executive order aimed at deregulation, specifically attempting to limit the power of individual states to impose safety mandates on AI developers.

- Late 2025: Despite federal pushback, US state legislatures pass a record 150 AI-related bills. California’s SB 53 and New York’s RAISE Act emerge as the most stringent, requiring whistleblower protections and mandatory reporting of "critical safety incidents."

The Stanford report suggests that this regulatory fragmentation is creating a "compliance nightmare" for tech companies while failing to provide a cohesive safety net for the public.

Public Perception: Optimism Tempered by Anxiety

The 2026 Index concludes with an analysis of public sentiment, revealing a deep "optimism gap" between AI experts and the general public. According to data from Pew Research and Ipsos, 73% of AI experts believe the technology will have a positive impact on the labor market over the next 20 years. In contrast, only 23% of the American public shares that view.

This skepticism extends to governance. Americans currently trust their government less than any other surveyed population to regulate AI appropriately. While 59% of global respondents believe AI will provide more benefits than drawbacks, 52% admit the technology makes them "nervous."

The Stanford AI Index 2026 paints a picture of a world in the midst of a profound technological transition. AI is no longer a future prospect; it is a massive, resource-intensive reality that is currently reshaping the economy and the environment faster than our social and legal systems can respond. As the report suggests, the technology is sprinting toward an uncertain finish line, and the rest of the world is still struggling to catch its breath.