The Perilous Promise of Synthetic Research: Navigating the Minefield of Speed and Savings Against Scientific Rigor

Synthetic research, an emerging approach to generating data and insights, stands at a critical juncture. It promises unprecedented speed, scale, and cost-efficiency, but it is caught in a fundamental tension: the economic pressure for fast, inexpensive results versus the scientific requirement for rigor and validation. This conflict has produced a marketplace in which vendors, often operating behind "methodological black boxes," generate lifelike personas and data in minutes. The outputs of these opaque systems frequently lack verifiable provenance, harbor hidden biases, and can quietly steer decisions down flawed paths.

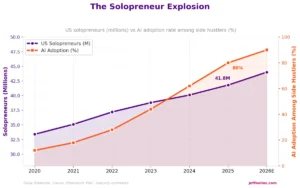

The synthetic data market is growing explosively, with projections indicating a rise from an estimated $267 million in 2023 to $4.6 billion by 2032. The growth is fueled by demand for instant intelligence in a hyper-connected, always-on economy. A recent survey found that 95% of insight leaders plan to integrate synthetic data into their operations within the next year. They are drawn by the promise of swift insights, effortless scaling, significant cost reductions, and the ability to generate data from highly niche or hard-to-reach audience segments.
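To put the projection above in annual terms, the short calculation below derives the compound annual growth rate implied by the two endpoint figures. It is a back-of-the-envelope sketch that assumes the market compounds evenly over the nine years between 2023 and 2032, which the projection itself does not state; the function name `cagr` is illustrative.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate implied by two endpoint values."""
    return (end_value / start_value) ** (1 / years) - 1

# Endpoint figures from the projection, in millions of U.S. dollars.
# Assumption: growth compounds evenly across the whole period.
implied_rate = cagr(start_value=267, end_value=4600, years=2032 - 2023)
print(f"Implied annual growth: {implied_rate:.1%}")
```

Under that assumption, the figures work out to roughly 37% growth per year.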

Despite these advantages, moving synthetic testing from an experimental frontier to a reliable, scalable practice means confronting its risks directly. To overcome widespread skepticism and build a more trustworthy ecosystem, organizations must identify and address the key problem areas. Cost savings and faster insights will continue to drive adoption, but success depends on understanding the strengths and weaknesses of the various synthetic tools and, crucially, the contexts in which each is best used.

Common Challenges Undermining the Credibility of Synthetic Research Approaches

The appeal of advanced AI tools, particularly large language models (LLMs), has fostered a common misconception in synthetic research: that giving an LLM a detailed persona or backstory will automatically yield representative, unbiased outputs. Recent large-scale experiments suggest the opposite: this approach often worsens the very problems it is meant to solve.

Why General LLMs Fall Short of Expectations

Asking a general-purpose LLM such as ChatGPT, Claude, or Gemini to generate research insights is an understandable impulse, given how widely these tools are used. Yet initial studies indicate that instructing these models to produce more content per persona increases bias and homogeneity rather than diversity. One example comes from an attempt to predict the outcome of the 2024 U.S. presidential election. When an LLM was asked to generate personas with detailed backstories for this purpose, the simulated results overwhelmingly favored the Democratic Party, which swept every state, and failed to reflect the actual political diversity of the electorate.

This outcome highlights a pervasive issue in AI known as "bias laundering." LLMs are trained on vast datasets derived from the internet, which inherently reflect a disproportionate representation of a "WEIRD" (Western, Educated, Industrialized, Rich, Democratic) worldview. When these models are prompted to create diverse personas, the resulting outputs become a statistical mean filtered through this ingrained bias, effectively masking exclusionary patterns as AI neutrality. This "laundering" affects numerous AI applications, from facial recognition technology to synthetic research, leading to outputs that, while appearing objective, are deeply rooted in historical inequities.
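One simple way to make this kind of skew visible is to compare the distribution of synthetic answers with a trusted real-world benchmark. The sketch below uses total variation distance, a common choice that is not drawn from the studies described here; the option labels, counts, and benchmark shares are hypothetical placeholders rather than real election data.

```python
def to_shares(counts):
    """Turn raw answer counts into proportions that sum to 1."""
    total = sum(counts.values())
    return {option: n / total for option, n in counts.items()}

def total_variation_distance(p, q):
    """Half the summed absolute gap between two distributions.

    0 means the distributions match; 1 means they do not overlap at all.
    """
    options = set(p) | set(q)
    return 0.5 * sum(abs(p.get(o, 0.0) - q.get(o, 0.0)) for o in options)

# Hypothetical data: 100 synthetic personas answering a three-option question,
# and a benchmark taken from a trusted real-world source.
synthetic_counts = {"option_a": 90, "option_b": 8, "option_c": 2}
benchmark_shares = {"option_a": 0.48, "option_b": 0.47, "option_c": 0.05}

skew = total_variation_distance(to_shares(synthetic_counts), benchmark_shares)
print(f"Distance from benchmark: {skew:.2f}")
```

A distance near zero says the synthetic panel at least matches the benchmark in aggregate; a large value, as in this made-up case, signals the kind of homogeneity described above.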

Synthetic respondents can also succumb to the "Pollyanna Principle," the tendency of LLMs to be excessively agreeable and positive in their responses. Many users of generative AI interfaces will have seen this: ideas are met with affirmations like "great idea" or "good choice" rather than critical evaluation. This sycophantic behavior is particularly damaging in research settings.

In a comparative usability test that pitted synthetic respondents against human participants, a striking discrepancy emerged. Synthetic users reported completing every online course, whereas many of the human users dropped out. The gap suggests that the synthetic respondents were giving the answers they inferred the experimenters wanted rather than reflecting realistic behavior. This sycophancy can lead teams to validate flawed product concepts, because agreeable AI agents affirm them instead of offering objective critique.
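A gap like this can be checked with a standard two-proportion z-test before anyone acts on the synthetic result. The sketch below is an illustration rather than the method used in the usability test; the completion counts are hypothetical, and with samples this small the z approximation is only a rough screen.

```python
from math import sqrt

def two_proportion_z(successes_a, n_a, successes_b, n_b):
    """z statistic for the difference between two completion rates."""
    p_a = successes_a / n_a
    p_b = successes_b / n_b
    pooled = (successes_a + successes_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("Both groups have identical all-or-nothing rates.")
    return (p_a - p_b) / std_err

# Hypothetical counts: 20 of 20 synthetic users report finishing the course,
# while 9 of 20 human users actually finish it.
z = two_proportion_z(20, 20, 9, 20)

# 1.96 is the conventional two-sided 5% threshold.
suspicious = abs(z) > 1.96
print(f"z = {z:.2f}, flag for review: {suspicious}")
```

A flagged result does not say which group is right, only that the synthetic panel should not stand in for the human one on this question without further validation.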

Fine-Tuning: Injecting Essential Context into Synthetic Approaches

A common question arises: aren’t LLMs trained on such a broad spectrum of information that they can generate realistic use cases in virtually any scenario? While general LLMs can provide reasonable baseline estimates for established products, their efficacy diminishes significantly when confronting novel issues or underrepresented demographic segments. The most effective method for aligning synthetic respondents with real-world accuracy involves fine-tuning the models with proprietary data.

An experiment involving a fictitious pancake-flavored toothpaste illustrates the limits of LLMs that have not been fine-tuned. When queried about this novel product, a base GPT model immediately fell prey to the Pollyanna Principle and hallucinated a positive consumer preference for the unusual flavor. Without any training data on toothpaste preferences, the model defaulted to an optimistic assumption about novelty. When researchers then fine-tuned the model on historical survey data about toothpaste preferences, the output shifted to a negative reception, a more realistic consumer response.

Similarly, in a study examining the desirability of a laptop with a built-in projector, the base LLM overestimated consumer willingness to pay by a factor of three. The inflated valuation was corrected after the model was fine-tuned on survey data about standard laptop features and pricing. This recalibration brought the synthetic results into line with established human benchmarks, underscoring how much accuracy depends on domain-specific data.
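In practice, fine-tuning starts with turning historical survey responses into training examples. The sketch below shows one way to do that, assuming a simple prompt/response record written as JSON Lines. The field names, the sample rows, and the `survey_to_records` helper are all hypothetical; each fine-tuning service defines its own required schema.

```python
import json

def survey_to_records(rows):
    """Convert historical survey rows into prompt/response training records."""
    records = []
    for row in rows:
        prompt = (
            f"Respondent profile: {row['segment']}. "
            f"Question: {row['question']}"
        )
        records.append({"prompt": prompt, "response": row["answer"]})
    return records

# Hypothetical historical survey rows (not taken from the studies above).
historical_rows = [
    {
        "segment": "adults aged 25 to 34 who brush twice daily",
        "question": "How appealing is a mint-flavored toothpaste?",
        "answer": "Very appealing",
    },
    {
        "segment": "adults aged 25 to 34 who brush twice daily",
        "question": "How appealing is a dessert-flavored toothpaste?",
        "answer": "Not appealing",
    },
]

records = survey_to_records(historical_rows)

# One JSON object per line is a common interchange format for training data.
jsonl = "\n".join(json.dumps(record) for record in records)
print(jsonl)
```

The point of the exercise is that real answers, including the unenthusiastic ones, are what pull the model away from its optimistic defaults.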

Strategies for Maximizing the Effectiveness of Synthetic Research

The true competitive advantage in synthetic research does not lie in the underlying LLMs, which are rapidly becoming commoditized. It lies in the proprietary context and data used to condition them. For example, Dollar Shave Club used synthetic panels grounded in category-specific data to validate new customer segments within days, a process that would typically take months. The resulting insights mirrored actual human behavior at a fraction of the traditional effort and cost.

To harness the full potential of synthetic research and mitigate its inherent risks, several strategic approaches can be employed:

Train Synthetic, Test Real (TSTR): A Validation Framework

To address these challenges and build confidence in synthetic outputs, the market research industry is increasingly advocating a shared validation methodology known as "Train Synthetic, Test Real" (TSTR). The approach trains AI models on synthetic data, which allows rapid iteration and exploration of diverse scenarios. The predictive validity of the trained models is then tested against a held-out sample of real-world, human-generated data. Early results from this methodology have been positive.
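The TSTR loop described above can be sketched in a few lines: fit a model on synthetic rows only, then score it on real rows it has never seen. The example below uses a deliberately simple nearest-centroid classifier and made-up data; the feature names, labels, and values are all hypothetical, and a real project would substitute its own model and a much larger held-out sample.

```python
from statistics import mean

def fit_centroids(rows, labels):
    """Train step: compute the mean feature vector for each class."""
    grouped = {}
    for row, label in zip(rows, labels):
        grouped.setdefault(label, []).append(row)
    return {
        label: [mean(column) for column in zip(*members)]
        for label, members in grouped.items()
    }

def predict(centroids, row):
    """Assign the class whose centroid is nearest (squared Euclidean distance)."""
    def sq_dist(center):
        return sum((a - b) ** 2 for a, b in zip(row, center))
    return min(centroids, key=lambda label: sq_dist(centroids[label]))

def tstr_accuracy(synth_rows, synth_labels, real_rows, real_labels):
    """Train on synthetic data only, then measure accuracy on real data."""
    model = fit_centroids(synth_rows, synth_labels)
    hits = sum(
        predict(model, row) == label
        for row, label in zip(real_rows, real_labels)
    )
    return hits / len(real_rows)

# Hypothetical features: (price sensitivity, brand loyalty), each from 0 to 1.
synthetic_rows = [(0.9, 0.2), (0.8, 0.3), (0.2, 0.9), (0.3, 0.8)]
synthetic_labels = ["switcher", "switcher", "loyal", "loyal"]

# Held-out human responses that played no part in training.
real_rows = [(0.7, 0.4), (0.1, 0.7), (0.6, 0.1), (0.45, 0.6)]
real_labels = ["switcher", "loyal", "switcher", "switcher"]

score = tstr_accuracy(synthetic_rows, synthetic_labels, real_rows, real_labels)
print(f"TSTR accuracy on real data: {score:.2f}")
```

The key design choice is the strict separation: real data appears only in the scoring step, so the score reflects how well patterns learned from synthetic data transfer to actual respondents.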

In a research initiative led by Stanford University and Google DeepMind, digital agents trained on interview data replicated human survey answers with 85% accuracy and achieved a 98% correlation in modeling social forces. The TSTR approach acknowledges the limits of relying solely on off-the-shelf LLMs as a starting point, and it guards against accepting synthetic results at face value. By using synthetic methods early in the research process and then validating the findings against real-world data, research teams can save substantial time and cost while gaining confidence in the reliability of their insights. The same loop supports rapid hypothesis generation and efficient testing of market responses, which shortens product development and marketing strategy cycles.
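Figures such as "accuracy" and "correlation" come from comparing agent answers with human answers item by item. The sketch below computes a raw agreement rate and a Pearson correlation on made-up five-point survey answers. It illustrates the two kinds of metric in general terms and is not the scoring procedure used in the Stanford and Google DeepMind work.

```python
from math import sqrt

def agreement_rate(human, agent):
    """Share of items where the agent gave exactly the human's answer."""
    matches = sum(h == a for h, a in zip(human, agent))
    return matches / len(human)

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)

# Hypothetical answers to six survey items on a 1-to-5 scale.
human_answers = [1, 2, 3, 4, 5, 3]
agent_answers = [1, 2, 3, 4, 4, 3]

agreement = agreement_rate(human_answers, agent_answers)
correlation = pearson(human_answers, agent_answers)
print(f"agreement = {agreement:.2f}, correlation = {correlation:.2f}")
```

The two numbers answer different questions: agreement asks whether individual answers match, while correlation asks whether the agent's answers rise and fall with the human's, so it is worth reporting both.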

Implementing Governance and Transparency for Trust

Success in synthetic research requires a fundamental shift in perspective: researchers and end users must reject the "synthetic persona fallacy," the erroneous belief that LLMs possess human-like psychology and nuanced persona traits. What is needed instead is rigorous validation, backed by governance guardrails, documented processes, and full transparency about the methodologies employed.

A "persona transparency checklist" can serve as a valuable guide for researchers navigating the complexities of synthetic personas. Such a checklist would typically include elements like:

- Data Provenance: Clearly outlining the sources and nature of the data used for training and fine-tuning the synthetic models.

- Bias Assessment: Detailing the methods used to identify and mitigate potential biases within the synthetic data and model outputs.

- Validation Protocols: Specifying the validation procedures, including the types of real-world data used for comparison and the metrics for assessing accuracy.

- Model Limitations: Honestly articulating the known limitations of the synthetic model and the potential areas where its outputs might deviate from reality.

- Ethical Considerations: Addressing any ethical implications related to the generation and use of synthetic data, particularly concerning privacy and potential misuse.
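The checklist above can also be kept as a structured record that travels with each synthetic study, so gaps are caught before results are shared. The sketch below is one possible shape, with field names taken from the five checklist items; the class name, the `missing_items` helper, and the sample entries are hypothetical.

```python
from dataclasses import dataclass, fields

@dataclass
class PersonaTransparencyChecklist:
    """One free-text entry per checklist item; empty means not yet documented."""
    data_provenance: str = ""
    bias_assessment: str = ""
    validation_protocols: str = ""
    model_limitations: str = ""
    ethical_considerations: str = ""

    def missing_items(self):
        """Return the names of items that are still blank, in checklist order."""
        return [
            field.name
            for field in fields(self)
            if not getattr(self, field.name).strip()
        ]

# Hypothetical, partially completed checklist for a single study.
checklist = PersonaTransparencyChecklist(
    data_provenance="Fine-tuned on in-house category surveys.",
    validation_protocols="Compared against a held-out human sample.",
)

missing = checklist.missing_items()
print("Still undocumented:", ", ".join(missing))
```

A team could refuse to circulate synthetic findings until `missing_items()` returns an empty list, which turns the checklist from a guideline into a gate.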

Transparency plays a dual role in fostering trust and addressing ethical concerns. By openly disclosing how synthetic approaches function and acknowledging their limitations, organizations can build confidence among stakeholders and consumers alike. As the influence of synthetic data continues to grow across various sectors, the ability to clearly distinguish between authentically human-generated content and synthetic outputs will become increasingly critical for maintaining integrity and trust. This transparency not only safeguards against misinformation but also educates users about the capabilities and constraints of AI-driven research tools.

The Principle of "Trust But Verify" in Practice

A pragmatic approach to synthetic research starts by abandoning the unfounded belief that LLMs inherently mirror human psychology. The focus must shift to empirical benchmarking, careful fine-tuning, and consistent transparency, guided by the principle of "trust but verify." Synthetic tools can provide rapid initial insights, but their outputs should always be verified against real-world data and established benchmarks. This iterative process ensures that the speed and scale of synthetic research are not bought at the expense of accuracy and reliability.

The competitive landscape of synthetic research is rapidly evolving. As LLM technology becomes more accessible, the differentiator will increasingly lie in the quality and specificity of the proprietary data used to train and refine these models. Organizations that invest in building and curating robust datasets tailored to their specific industry and target audiences will be best positioned to unlock the true potential of synthetic research. This strategic investment in data infrastructure and expertise will enable them to generate more accurate, relevant, and actionable insights, transforming synthetic data from a mere experimental tool into a strategic asset.

Synthetic Research: A Powerful Tool When Its Limits Are Respected

Synthetic research undeniably holds immense potential to revolutionize how businesses gather insights and make decisions. However, it is crucial to recognize that this powerful tool comes with inherent caveats. The promise of unprecedented speed and scale is counterbalanced by the significant risks of bias and hallucination. The key to unlocking its value lies in a clear-eyed acknowledgment of these challenges.

By building robust governance frameworks and implementing stringent guardrails to mitigate these risks, organizations can significantly improve their chances of success with synthetic research. This disciplined approach addresses internal skepticism and balances the drive for efficiency against the need for meaningful, accurate outcomes. Combining speed with accuracy lets businesses innovate faster while maintaining the integrity of their decision-making. The future of synthetic research hinges on this balance between embracing innovation and upholding scientific rigor.