The agent tier: Rethinking runtime architecture for context-driven enterprise workflows

In the current technological climate, the assumption that every possible scenario can be anticipated and expressed through binary logic is no longer tenable. As processes are forced to respond to broader circumstances—ranging from subtle fraud signals to fluctuating regulatory standards—the traditional branching workflow begins to strain. This architectural tension is most visible in high-stakes environments like banking, where the intersection of digital channels, fraud detection, and revenue goals creates a web of conflicting requirements that deterministic systems struggle to untangle.

The Crisis of Complexity in Enterprise Workflows

The fundamental problem facing modern enterprise architecture is not a lack of rules, but the inability to express contextual judgment within a static structure. In a traditional deterministic model, system behavior is specified in advance, making outcomes predictable but also inflexible. When a new requirement arises, such as a new anti-money laundering (AML) check or a fraud prevention measure, engineering teams typically add a new conditional branch to the existing workflow.

Over time, this cumulative addition of rules leads to what architects call "logic bloat." At a major North American bank, internal digital account opening initiatives revealed that cross-functional design sessions often became battlegrounds for competing priorities. Product teams advocated for reduced friction to improve conversion, in a sector where abandonment rates are currently estimated at 40% to 60%. Simultaneously, fraud teams demanded additional safeguards to combat bot-driven account creation and sophisticated mule schemes, while compliance officers insisted on absolute adherence to Know Your Customer (KYC) standards.

Individually, each team’s requirements were rational and necessary. Collectively, however, they transformed the digital onboarding workflow into a fragile, hyper-complex web. The result was a system that collected information in bulk rather than adapting to the specific facts of a case. This "all-or-nothing" approach to data collection creates a binary risk: collect too little data and the institution faces regulatory exposure; collect too much, and the customer abandons the journey out of frustration.

A Chronology of Workflow Evolution

To understand why current architectures are failing, it is necessary to examine the evolution of business process management (BPM) since the 1990s:

- The 1990s (Hard-Coded Era): Business logic was deeply embedded in monolithic applications. Changes required extensive code rewrites and long deployment cycles.

- The 2000s (BPMN and Rule Engines): The industry shifted toward Business Process Model and Notation (BPMN), attempting to separate logic from code. While this improved visibility, it still relied on predefined, static paths.

- The 2010s (Microservices and API Orchestration): Logic became dispersed across various APIs and Single Page Applications (SPAs). While this increased scalability, it decentralized the decision-making process, making it harder to manage end-to-end context.

- The 2020s (The Agentic Shift): The rise of artificial intelligence and machine learning introduced probabilistic reasoning. Enterprises now face the challenge of integrating this non-deterministic intelligence into systems that require deterministic outcomes for legal and operational safety.

As we move deeper into the 2020s, the industry is reaching a tipping point where the number of possible permutations in a workflow exceeds the capacity of human architects to model them manually.

Introducing the Agent Tier: A Two-Lane Architecture

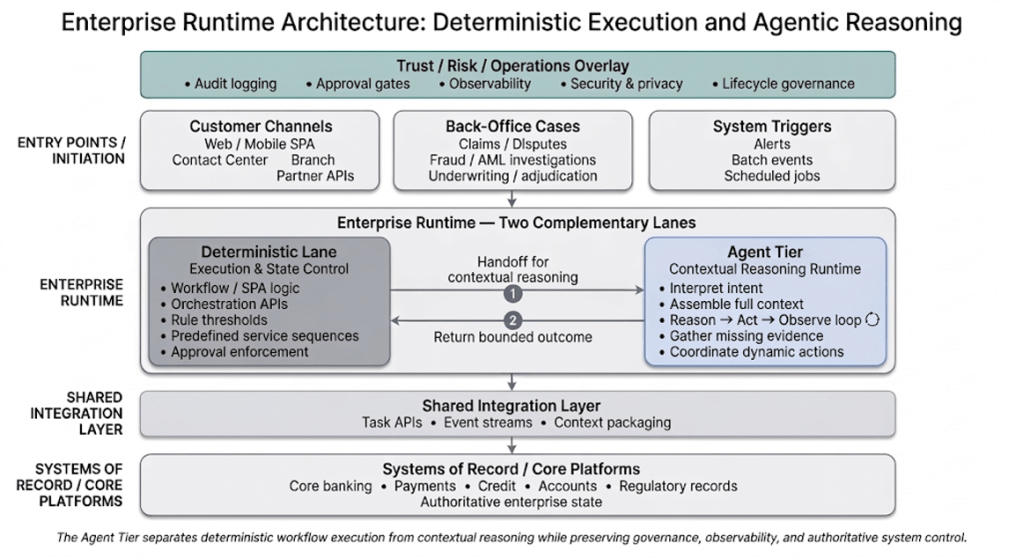

The solution emerging among leading enterprise architects is the introduction of a new architectural layer: the Agent Tier. This model suggests that the enterprise runtime should evolve into a two-lane structure that separates repeatable execution from contextual reasoning.

In this paradigm, the Deterministic Lane retains control over authoritative state changes and the enforcement of hard rules. It manages eligibility checks, applies regulatory criteria, and finalizes cases in core systems. This lane handles the "clean path"—the scenarios that are well-understood and follow predictable patterns.

The Agent Tier, by contrast, is invoked only when the workflow encounters ambiguity. This might occur when inputs are incomplete, when multiple risk signals require simultaneous interpretation, or when coordination across systems cannot be expressed through a fixed sequence. Instead of expanding a hard-coded branching tree, the system hands off the case to the Agent Tier to perform synthesis.

This separation allows for a more "elastic" workflow. The Agent Tier evaluates the available evidence, determines the next appropriate action, and returns a structured recommendation to the Deterministic Lane. Once the "ambiguity" is resolved—for instance, by gathering a missing piece of identity verification—the deterministic workflow resumes its controlled progression toward a final state.
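The hand-off between the two lanes can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: the `Case` fields, the `is_ambiguous` test, the action names, and the rule-based `agent_tier` stand-in are all hypothetical. The point it shows is that only the deterministic function writes authoritative state, and it accepts a recommendation only if the action is on an approved list.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Case:
    """Hypothetical onboarding case; field names are illustrative only."""
    applicant_id: str
    identity_verified: Optional[bool] = None  # None means the evidence is missing
    risk_signals: List[str] = field(default_factory=list)
    status: str = "open"


@dataclass
class Recommendation:
    """Structured result the Agent Tier hands back to the Deterministic Lane."""
    action: str
    rationale: str


# Actions the Deterministic Lane is willing to accept (assumed names).
ALLOWED_ACTIONS = {"request_identity_verification", "escalate_to_manual_review"}


def is_ambiguous(case: Case) -> bool:
    # Assumption: ambiguity means missing evidence or several signals at once.
    return case.identity_verified is None or len(case.risk_signals) > 1


def agent_tier(case: Case) -> Recommendation:
    # Rule-based stand-in for contextual reasoning; it never mutates the case.
    if case.identity_verified is None:
        return Recommendation("request_identity_verification",
                              "identity evidence missing")
    return Recommendation("escalate_to_manual_review",
                          "multiple risk signals present")


def deterministic_lane(case: Case) -> Case:
    # Only this function changes authoritative state.
    if is_ambiguous(case):
        rec = agent_tier(case)
        if rec.action not in ALLOWED_ACTIONS:
            raise ValueError(f"unapproved action: {rec.action}")
        case.status = f"pending:{rec.action}"
        return case
    # Clean path: hard rules decide the outcome directly.
    case.status = "approved" if case.identity_verified else "rejected"
    return case
```

In this sketch a verified applicant with no conflicting signals never touches the Agent Tier, while a case with missing identity evidence comes back as `pending:request_identity_verification` and can re-enter the deterministic path once the evidence arrives.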

Technical Mechanics: Reasoning, Action, and Reflection

The Agent Tier is not a "black box" of AI; it is a disciplined reasoning cycle. Technical experts point to the ReAct (Reasoning and Acting) pattern as a foundational element of this new tier. In this pattern, reasoning steps are interleaved with actions: the system gathers evidence, reassesses its position in light of each result, and proceeds incrementally.

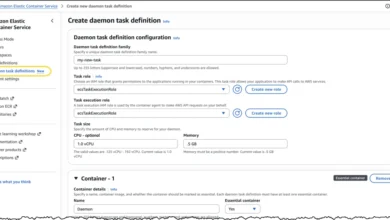

Inside the Agent Tier, the process follows a governed cycle:

- Interpretation: The system assembles the current context, including user inputs, fraud signals, and policy constraints.

- Tool Calling: The system selects an action from an approved catalog of enterprise primitives, such as "Retrieve Customer Profile," "Invoke Identity Verification," or "Request User Clarification."

- Reflection: Before returning control to the deterministic lane, the Agent Tier performs a self-check to ensure that the proposed action aligns with policy and that all required conditions for the next step are satisfied.
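The three stages above can be sketched as a bounded loop. The following Python is an illustration under assumptions, not the pattern's canonical form: the tool functions are stubs, `interpret` is a simple rule-based stand-in for what would normally be a model-driven reasoning step, and the `required` condition names are hypothetical.

```python
from typing import Callable, Dict, List, Optional


# Stub enterprise primitives; real ones would call governed services.
def retrieve_customer_profile(ctx: dict) -> dict:
    return {"profile_found": True}


def invoke_identity_verification(ctx: dict) -> dict:
    return {"identity_verified": True}


def request_user_clarification(ctx: dict) -> dict:
    return {"clarification_requested": True}


# Approved catalog: the only actions the cycle may select.
CATALOG: Dict[str, Callable[[dict], dict]] = {
    "retrieve_customer_profile": retrieve_customer_profile,
    "invoke_identity_verification": invoke_identity_verification,
    "request_user_clarification": request_user_clarification,
}


def interpret(ctx: dict) -> Optional[str]:
    """Interpretation: read the context and name the next tool, or None if done."""
    if not ctx.get("profile_found"):
        return "retrieve_customer_profile"
    if not ctx.get("identity_verified"):
        return "invoke_identity_verification"
    return None


def reflect(ctx: dict, required: List[str]) -> bool:
    """Reflection: every required condition must hold before handing back."""
    return all(ctx.get(key) for key in required)


def run_cycle(ctx: dict, required: List[str], max_steps: int = 5) -> dict:
    tools_used: List[str] = []
    for _ in range(max_steps):  # bounded so the cycle always terminates
        tool = interpret(ctx)
        if tool is None:
            break
        if tool not in CATALOG:
            raise ValueError(f"tool not in approved catalog: {tool}")
        ctx.update(CATALOG[tool](ctx))  # Tool Calling: act, then fold in the result
        tools_used.append(tool)
    return {"ready": reflect(ctx, required), "tools_used": tools_used, "context": ctx}
```

Calling `run_cycle({"applicant_id": "a1"}, ["profile_found", "identity_verified"])` in this sketch invokes the profile lookup and then identity verification, and reports `ready` as true only because the reflection check finds both conditions satisfied. The step limit is a design choice: it keeps a misbehaving reasoning step from looping indefinitely.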

By using "skills"—reusable functions designed for specific objectives like KYC evidence assembly—the Agent Tier operates within defined boundaries. It does not have free-form access to the entire enterprise stack; it interacts only with governed interfaces that have explicit input/output contracts.
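One way to picture a governed interface with an explicit input/output contract is a small registry that checks both sides of every call. The sketch below is hypothetical throughout: `Skill`, `SkillRegistry`, and the `kyc_evidence_assembly` example are names invented for illustration, and a production system would likely use a schema language rather than bare key sets.

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet


@dataclass(frozen=True)
class Skill:
    """A reusable function plus the keys it requires and promises to return."""
    name: str
    required_inputs: FrozenSet[str]
    promised_outputs: FrozenSet[str]
    run: Callable[[dict], dict]


class SkillRegistry:
    """The Agent Tier can reach enterprise systems only through this catalog."""

    def __init__(self) -> None:
        self._skills: Dict[str, Skill] = {}

    def register(self, skill: Skill) -> None:
        self._skills[skill.name] = skill

    def invoke(self, name: str, payload: dict) -> dict:
        if name not in self._skills:
            raise PermissionError(f"skill not in governed catalog: {name}")
        skill = self._skills[name]
        missing = skill.required_inputs - set(payload)
        if missing:
            raise ValueError(f"missing inputs: {sorted(missing)}")
        result = skill.run(payload)
        absent = skill.promised_outputs - set(result)
        if absent:
            raise ValueError(f"contract violated, missing outputs: {sorted(absent)}")
        return result


registry = SkillRegistry()
registry.register(Skill(
    name="kyc_evidence_assembly",
    required_inputs=frozenset({"applicant_id"}),
    promised_outputs=frozenset({"documents"}),
    run=lambda payload: {"documents": ["passport"]},  # stub result
))
```

With this arrangement, a request for an unregistered capability fails with `PermissionError` before any system is touched, which is the boundary the paragraph above describes.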

Supporting Data and Industry Implications

The shift toward agentic workflows is driven by significant economic pressures. According to recent industry reports, synthetic identity fraud cost U.S. financial institutions an estimated $4.6 billion in 2023. Traditional deterministic systems are often too slow to adapt to the rapidly changing tactics used by botnets and organized fraud rings.

Furthermore, the "friction tax" of rigid workflows is measurable. Data from the digital experience sector suggests that each additional step or redundant data request in an onboarding process lowers conversion rates by approximately 5% to 10%. By implementing an Agent Tier that can dynamically "skip" unnecessary steps based on existing context, institutions can reclaim a meaningful share of that lost revenue without increasing risk.
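To get a feel for the scale of that effect, the per-step figure can be turned into a rough calculation. The sketch below assumes the drop is relative and compounds with each extra step, which the cited data does not specify; the 50% baseline and the step count are hypothetical inputs chosen only for illustration.

```python
def conversion_after_steps(base_rate: float, extra_steps: int,
                           drop_per_step: float) -> float:
    """Estimate conversion after extra steps, assuming a compounding relative drop."""
    if not 0.0 <= drop_per_step <= 1.0:
        raise ValueError("drop_per_step must be a fraction between 0 and 1")
    return base_rate * (1.0 - drop_per_step) ** extra_steps


# Hypothetical scenario: 50% baseline conversion, three redundant steps.
low_estimate = conversion_after_steps(0.50, 3, 0.10)   # pessimistic end of the range
high_estimate = conversion_after_steps(0.50, 3, 0.05)  # optimistic end of the range
```

Under these assumptions, three redundant steps would take a 50% baseline down to roughly 43% at a 5% drop per step and to roughly 36% at a 10% drop, so skipping those steps for applicants whose context already answers them would recover that gap.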

Reactions from industry leaders have been cautiously optimistic. Chief Technology Officers at several Tier-1 banks have noted that the biggest hurdle is not the technology itself, but the "governance of the probabilistic." Compliance officers, traditionally wary of AI, are finding the Agent Tier model more acceptable because it maintains the Deterministic Lane as the ultimate arbiter of state changes.

Governing the Adaptive Enterprise

Separating contextual reasoning from execution clarifies responsibility, but it does not eliminate the need for rigorous oversight. In regulated environments, the Agent Tier must operate within a "Trust and Operations Overlay." This includes comprehensive audit logging, observability, and security enforcement.

Every recommendation made by the Agent Tier must be traceable. If a system triggers an additional verification step or escalates a case to manual review, the institution must be able to reconstruct the reasoning: Which signals were used? Which tools were invoked? This level of transparency is essential for meeting regulatory requirements under frameworks like the GDPR or the EU AI Act.
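A traceable recommendation could be captured as a structured record written at the moment the Agent Tier returns control. The sketch below is one possible shape, not a regulatory template: the `AuditRecord` fields and the example values are assumptions made for illustration.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List


def _utc_now() -> str:
    # ISO 8601 timestamp in UTC so records sort and compare consistently.
    return datetime.now(timezone.utc).isoformat()


@dataclass
class AuditRecord:
    """Hypothetical trace of one Agent Tier recommendation."""
    case_id: str
    recommendation: str
    signals_used: List[str]
    tools_invoked: List[str]
    recorded_at: str = field(default_factory=_utc_now)


def to_log_line(record: AuditRecord) -> str:
    # One JSON object per line keeps the log easy to append to and to query.
    return json.dumps(asdict(record), sort_keys=True)


example = AuditRecord(
    case_id="case-42",
    recommendation="escalate_to_manual_review",
    signals_used=["velocity_spike", "device_mismatch"],
    tools_invoked=["retrieve_customer_profile"],
)
log_line = to_log_line(example)
```

Because the record names both the signals and the tools, the two questions posed above can be answered from the log alone, without replaying the reasoning.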

Moreover, human-in-the-loop (HITL) remains a vital component. High-risk actions, such as credit issuance or large-scale fund transfers, still require explicit human authorization. The Agent Tier’s role is to prepare the conditions for those decisions, ensuring the human reviewer has the most relevant and synthesized information at their fingertips.
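The authorization rule itself is simple enough to state as a guard. In the sketch below, the action names and the `ReviewPacket` structure are hypothetical; the idea shown is that a high-risk action without a named approver is held, and the Agent Tier's synthesized summary travels with it to the reviewer.

```python
from dataclasses import dataclass
from typing import Optional

# Assumed list of actions that always need explicit human sign-off.
HIGH_RISK_ACTIONS = frozenset({"issue_credit", "transfer_funds"})


@dataclass
class ReviewPacket:
    """What the reviewer sees: the action plus the Agent Tier's summary."""
    action: str
    summary: str


@dataclass
class Outcome:
    status: str  # "executed" or "held_for_human_review"
    packet: Optional[ReviewPacket] = None


def authorize(action: str, summary: str, approved_by: Optional[str] = None) -> Outcome:
    # High-risk actions never run without a named human approver.
    if action in HIGH_RISK_ACTIONS and not approved_by:
        return Outcome("held_for_human_review", ReviewPacket(action, summary))
    return Outcome("executed")
```

Here `authorize("issue_credit", "income verified, two risk flags")` is held with a packet for the reviewer, while the same call with `approved_by="analyst-7"` proceeds.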

The Path Forward for Architectural Leadership

The transition to a context-driven workflow does not require a total "rip-and-replace" of existing infrastructure. Instead, it offers a path for incremental modernization. Organizations can begin by identifying a single high-impact workflow—such as credit adjudication or dispute management—where the limitations of static branching are most painful.

As fraud models continue to evolve and customer expectations for "invisible" security grow, the need for adaptive systems will only intensify. The architectural question for the coming decade is not whether enterprises will use AI to manage workflows, but where that intelligence will reside.

By concentrating contextual evaluation within a defined Agent Tier, enterprises can move away from the "fragile branch" problem and toward a more resilient, adaptive runtime. This evolution marks a shift in the role of the architect: from a designer of fixed paths to a designer of containment boundaries and policy constraints. In this new era, the goal of digital architecture is to ensure that while the journey may be dynamic, the destination remains secure, compliant, and predictable.