Designing Agentic AI Requires a Deliberate Approach to Transparency: Mapping Decision Points for Enhanced User Trust

Designing for autonomous AI systems presents a unique challenge: users are often left in the dark about the complex processes their digital assistants undertake. This opacity can breed anxiety and erode trust, leaving individuals uncertain whether the AI has performed correctly, hallucinated, or bypassed critical steps. The prevalent responses to this dilemma—either cloaking the system in a “black box” or overwhelming users with a deluge of raw data—prove insufficient. A more nuanced and strategic approach is required to foster understanding and confidence. Victor Yocco advocates for a structured methodology, the Decision Node Audit, to map AI decision points and strategically reveal crucial moments, thereby building trust through clarity rather than noise.

The frustration stems from handing over a complex task to an AI, only for it to disappear for an extended period, eventually returning with a result. Users are left to wonder about the internal workings: Did the AI consult the necessary databases? Did it adhere to all protocols? This uncertainty typically leads to two extremes in design. The "black box" approach prioritizes simplicity by hiding all internal processes, leaving users feeling powerless and disconnected from the AI’s actions. Conversely, the "data dump" strategy floods users with every log line and API call, resulting in notification blindness. Users quickly learn to ignore the constant stream of information, which proves counterproductive when an issue arises, as they lack the necessary context to diagnose and resolve it.

A balanced approach is essential. Previous explorations into agentic AI design have highlighted interface elements like Intent Previews, which offer a glimpse into the AI’s intended actions before execution, and Autonomy Dials, which allow users to control the AI’s independent operational scope. However, knowing which elements to use is only part of the solution; understanding when to deploy them is the more significant challenge for designers. This article introduces the Decision Node Audit, a process designed to bridge the gap between backend logic and the user interface, pinpointing the precise moments when users need updates on the AI’s progress. Coupled with an Impact/Risk matrix, this method helps prioritize which decision nodes warrant visibility and guides the selection of appropriate design patterns.

Transparency Moments: A Case Study in Insurance Claims Processing

Consider Meridian, an insurance company that implemented an agentic AI to streamline the initial processing of accident claims. Users would upload vehicle damage photos and a police report. The AI would then undertake a period of processing before presenting a risk assessment and a proposed payout range. Initially, Meridian’s interface offered a generic status update: "Calculating Claim Status." This vague message bred user frustration, particularly when detailed documents were submitted. Policyholders felt uncertain whether the AI had even reviewed crucial documents like the police report, which might contain mitigating circumstances. This "black box" approach eroded user confidence.

To address this, Meridian’s design team initiated a Decision Node Audit. They discovered that the AI’s claim processing involved three distinct, probability-based steps, each with numerous embedded micro-steps. The audit revealed these key decision points:

- Initial Data Ingestion and Validation: The AI parses uploaded documents, verifying their completeness and format.

- Risk Factor Assessment: Based on damage photos and police report details, the AI calculates various risk factors. This stage involves probability estimations, such as the likelihood of pre-existing damage or contributing factors.

- Coverage Verification and Payout Range Calculation: The AI cross-references the assessed risks against the policy’s coverage terms and then determines a preliminary payout range.

Following the audit, these steps were transformed into specific "transparency moments" within the user interface. The user experience was updated to reflect this newfound clarity:

- Step 1: "Processing your claim documents. Verifying completeness and clarity." This message reassures the user that their submitted information is being actively reviewed for integrity.

- Step 2: "Analyzing accident details and assessing potential risks based on damage and police report." This explicitly communicates that the AI is delving into the specifics of the incident, a crucial step for users concerned about how mitigating factors are considered.

- Step 3: "Cross-referencing coverage with policy terms and calculating your proposed payout range." This final update clearly outlines the AI’s progress towards generating the outcome, linking the technical process directly to the user’s desired result.

While the overall processing time remained consistent, this explicit communication about the AI’s internal workings significantly restored user confidence. Users understood that the AI was diligently performing its complex task and knew precisely where to focus their attention if the final assessment appeared inaccurate. This design decision transformed a period of potential anxiety into an opportunity for connection and trust-building.
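The mapping from backend stage to user-facing copy can be sketched in a few lines. This is a minimal illustration, not Meridian's implementation: the `ClaimStage` enum and the function names are hypothetical, and only the three status messages come from the case study above.

```python
from enum import Enum


class ClaimStage(Enum):
    """Hypothetical names for the three audited decision points."""
    INGESTION = "ingestion"
    RISK_ASSESSMENT = "risk_assessment"
    COVERAGE_AND_PAYOUT = "coverage_and_payout"


# User-facing copy for each stage, taken from the transparency moments above.
STATUS_MESSAGES = {
    ClaimStage.INGESTION:
        "Processing your claim documents. Verifying completeness and clarity.",
    ClaimStage.RISK_ASSESSMENT:
        "Analyzing accident details and assessing potential risks "
        "based on damage and police report.",
    ClaimStage.COVERAGE_AND_PAYOUT:
        "Cross-referencing coverage with policy terms and calculating "
        "your proposed payout range.",
}


def status_for(stage: ClaimStage) -> str:
    """Return the message the interface shows while a stage is running."""
    return STATUS_MESSAGES[stage]


def progress_updates():
    """Yield (step number, message) pairs in processing order."""
    for number, stage in enumerate(ClaimStage, start=1):
        yield number, status_for(stage)
```

Keeping the copy in one table means content designers can revise wording without touching the processing code.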

Applying the Impact/Risk Matrix: Strategic Information Disclosure

A critical outcome of the Decision Node Audit is determining which information to withhold. In the Meridian example, the backend logs generated over 50 events per claim. A naive approach might involve displaying each event as it was processed. However, applying an Impact/Risk matrix allowed for strategic pruning of this information. This matrix categorizes decision nodes based on their potential impact and associated risks:

- Low Impact/Low Risk: These are routine, low-consequence decisions. Examples include internal data formatting or minor system checks. These can typically be handled with passive notifications such as toast messages, or with simple log entries that are only visible if a user actively seeks them.

- Low Impact/High Risk: This category is less common but might involve a routine check that, if it fails, could have downstream implications. These might require a slightly more visible notification, perhaps a subtle alert that doesn’t interrupt workflow but flags the event for potential review.

- High Impact/Low Risk: These are decisions that have significant consequences but a low probability of failure or negative outcome. For instance, a system might be automatically adjusting a setting that has a large impact but is highly unlikely to cause problems. A confirmation step or an "undo" option after the fact is often sufficient.

- High Impact/High Risk: These are the critical decision points where transparency is paramount. They involve actions with significant consequences that could be irreversible or have a substantial negative impact if mishandled. For these, more explicit user interaction, such as an Intent Preview requiring direct confirmation, is necessary.

By strategically omitting unnecessary details, the focus shifted to the most impactful information, such as the coverage verification. This created an open interface and, more importantly, an open user experience. The underlying principle is that users feel more assured when they can witness the work being done. By exposing specific, meaningful steps like "Assessing," "Reviewing," and "Verifying," a 30-second wait transforms from a period of worry ("Is it broken?") into a tangible representation of progress ("It’s thinking").
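One way to make the four quadrants operational is a small lookup that assigns each decision node a disclosure level. The sketch below is an assumption-laden illustration: the `DecisionNode` type, the disclosure labels, and the way impact and risk are reduced to booleans are all hypothetical simplifications of the matrix described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionNode:
    name: str
    high_impact: bool
    high_risk: bool


def disclosure_for(node: DecisionNode) -> str:
    """Map a node's quadrant to a (hypothetical) disclosure level."""
    if node.high_impact and node.high_risk:
        return "intent_preview"    # explicit confirmation before acting
    if node.high_impact:
        return "confirm_or_undo"   # confirmation step or undo after the fact
    if node.high_risk:
        return "subtle_alert"      # flagged for review, does not interrupt
    return "passive_log"           # toast or log entry only


def visible_nodes(nodes):
    """Prune the event stream down to nodes that deserve user attention."""
    return [n for n in nodes if disclosure_for(n) != "passive_log"]
```

Applied to a log of 50 or more events, a filter like `visible_nodes` is what separates the handful of transparency moments from the background noise.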

The Decision Node Audit: Unveiling the AI’s Internal Dialogue

Transparency should be treated as a functional requirement, not merely an aesthetic choice. The common pitfall is prioritizing "What should the UI look like?" before understanding "What is the agent actually deciding?" The Decision Node Audit provides a structured way to demystify AI systems by meticulously mapping their internal processes. Its primary objective is to identify and clearly define the moments where the system deviates from deterministic rules and makes a choice based on probability or estimation. By mapping these decision structures, creators can communicate these points of uncertainty directly to users, transforming vague system updates into specific, reliable reports on the AI’s reasoning.

In a recent project involving a procurement agent tasked with reviewing vendor contracts and flagging risks, the initial interface featured a simple progress bar: "Reviewing contracts." User research revealed significant anxiety, stemming from the potential legal ramifications of overlooked clauses. A Decision Node Audit was conducted with engineers, product managers, and legal experts. The session mapped the system’s workflow, identifying "Decision Points"—moments where the AI had to choose between multiple viable options.

Unlike traditional programming, where a specific input invariably leads to a predictable output, AI systems often operate on probabilistic logic. The AI might determine that option A is the most likely best choice, but with only a 65% certainty. In the contract review system, a key decision point emerged when the AI compared liability terms against company rules. Perfect matches were rare, requiring the AI to decide if a 90% match was sufficiently robust. This critical juncture, where the AI had to make a judgment call, was previously hidden.

Once identified, this node was exposed to the user. The interface was updated from the generic "Reviewing contracts" to a more informative message: "Liability clause varies from standard template. Analyzing risk level." This specific update instilled confidence. Users understood that the AI had indeed scrutinized the liability clause, grasped the reason for any delay, and trusted that the intended action was occurring. Crucially, they knew where to direct their attention once the contract review was complete.
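The liability-clause node can be sketched as a function that distinguishes an exact match from a judgment call and reports which one occurred. This is an illustrative sketch only: the 0.90 cutoff echoes the 90% example above but is an assumed value, `ClauseReview` is a hypothetical type, and the "matches standard template" message is invented for the example.

```python
from dataclasses import dataclass

ACCEPT_THRESHOLD = 0.90  # assumed cutoff; the real value is a team decision


@dataclass
class ClauseReview:
    is_judgment_call: bool  # True when the system had to estimate
    accepted: bool          # True when the match cleared the threshold
    status_message: str     # what the interface shows for this node


def review_liability_clause(match_score: float) -> ClauseReview:
    """Classify a clause comparison given a similarity score in [0, 1]."""
    if not 0.0 <= match_score <= 1.0:
        raise ValueError("match_score must be between 0 and 1")
    if match_score == 1.0:
        # Deterministic path: nothing uncertain to surface.
        return ClauseReview(False, True,
                            "Liability clause matches standard template.")
    # Probabilistic path: this is the decision node worth exposing.
    return ClauseReview(
        True,
        match_score >= ACCEPT_THRESHOLD,
        "Liability clause varies from standard template. Analyzing risk level.",
    )
```

The useful part is the `is_judgment_call` flag: it gives the interface a concrete signal for when to replace generic progress text with a specific report.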

To effectively map an AI’s decision-making process, close collaboration among engineers, product managers, business analysts, and key stakeholders is essential. This involves visually charting the system’s operational steps and marking every instance where the process deviates based on probabilistic outcomes. These are the critical junctures where enhanced transparency is most needed.

The Impact/Risk Matrix: Prioritizing Transparency

As highlighted, an AI system can make dozens of subtle choices within a single complex task. Exposing every single one would create overwhelming noise. The Impact/Risk Matrix serves as a crucial tool for categorizing these choices:

- Low Stakes / Low Impact: These are the background processes, the mundane operations that have minimal consequence if they falter or are perceived incorrectly. Examples include internal data sorting, temporary file creation, or routine status checks that don’t directly affect the user’s immediate goal. These can be safely hidden or represented by very general progress indicators.

- High Stakes / High Impact: These are the critical junctures that have significant implications for the user or the overall outcome. Consider a financial trading bot that treats a $5 trade with the same opacity as a $50,000 trade. Users would reasonably question whether the system recognizes how much more is at stake with large dollar amounts. In such cases, the system must pause and explain its rationale. Introducing a "Reviewing Logic" state for transactions exceeding a specific threshold, allowing the user to see the factors driving the decision before execution, becomes imperative.
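A threshold gate of this kind might look like the following sketch. The $10,000 cutoff, the state names, and the `TradeDecision` type are assumptions made for illustration; the article only specifies that transactions above some threshold should enter a "Reviewing Logic" state.

```python
from dataclasses import dataclass, field
from typing import List

REVIEW_THRESHOLD_USD = 10_000.0  # hypothetical cutoff, to be set by the team


@dataclass
class TradeDecision:
    state: str                                   # "auto_execute" or "reviewing_logic"
    factors_shown: List[str] = field(default_factory=list)


def gate_trade(amount_usd: float, factors: List[str]) -> TradeDecision:
    """Decide whether a trade runs silently or pauses to show its rationale."""
    if amount_usd <= 0:
        raise ValueError("amount_usd must be positive")
    if amount_usd > REVIEW_THRESHOLD_USD:
        # Pause and surface the driving factors before anything executes.
        return TradeDecision("reviewing_logic", list(factors))
    return TradeDecision("auto_execute")
```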

Mapping Nodes to Patterns: A Design Rubric for Transparency

Once key decision nodes are identified and prioritized using the Impact/Risk Matrix, the next step is to select the appropriate UI pattern for each visible transparency moment. The choice draws on established patterns such as Intent Preview (for high-stakes control) and Action Audit (for retrospective safety), and the decisive factor in choosing between them is reversibility.

Every identified decision node is filtered through the Impact Matrix to assign the correct pattern:

- High Stakes & Irreversible: These nodes necessitate an Intent Preview. Because the user cannot easily undo the action (e.g., permanently deleting a critical database), transparency must occur before execution. The system must pause, clearly articulate its intent, and await explicit user confirmation. This approach prioritizes user agency in situations with significant, unrecoverable consequences.

- High Stakes & Reversible: For nodes that have high impact but are reversible, the Action Audit & Undo pattern is suitable. For example, an AI-powered sales agent might autonomously move a lead between different pipeline stages. As long as the user is notified and an immediate "Undo" button is provided, this maintains efficiency while offering a safety net.

By rigorously categorizing decision nodes, design teams can effectively avoid "alert fatigue." The high-friction Intent Preview is reserved exclusively for truly irreversible actions, while the Action Audit ensures speed and efficiency for other critical, but reversible, operations.

| | Reversible | Irreversible |
|---|---|---|
| Low Impact | Type: Auto-Execute; UI: Passive Toast / Log; Ex: Renaming a file | Type: Confirm; UI: Simple Undo option; Ex: Archiving an email |
| High Impact | Type: Review; UI: Notification + Review Trail; Ex: Sending a draft to a client | Type: Intent Preview; UI: Modal / Explicit Permission; Ex: Deleting a server |

Table 1: The impact and reversibility matrix informs the selection of appropriate design patterns for moments of transparency.
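Table 1 translates directly into a lookup keyed on impact and reversibility. The sketch below encodes the four cells as given; the `Pattern` type and the `select_pattern` function are hypothetical names, and reducing impact and reversibility to booleans is a simplifying assumption.

```python
from typing import NamedTuple


class Pattern(NamedTuple):
    type: str
    ui: str
    example: str


# Keys are (high_impact, reversible); values mirror the cells of Table 1.
PATTERNS = {
    (False, True): Pattern("Auto-Execute", "Passive Toast / Log",
                           "Renaming a file"),
    (False, False): Pattern("Confirm", "Simple Undo option",
                            "Archiving an email"),
    (True, True): Pattern("Review", "Notification + Review Trail",
                          "Sending a draft to a client"),
    (True, False): Pattern("Intent Preview", "Modal / Explicit Permission",
                           "Deleting a server"),
}


def select_pattern(high_impact: bool, reversible: bool) -> Pattern:
    """Return the design pattern for a decision node."""
    return PATTERNS[(high_impact, reversible)]
```

For example, `select_pattern(high_impact=True, reversible=False).ui` yields the modal with explicit permission, the only cell that blocks execution until the user responds.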

Qualitative Validation: The "Wait, Why?" Test

While decision nodes can be identified on a whiteboard, their effectiveness must be validated through observation of human behavior. The goal is to ensure the AI’s process map aligns with the user’s mental model. The "Wait, Why?" Test is a qualitative protocol designed for this purpose.

Users are instructed to observe the AI completing a task and to verbalize their thoughts. Whenever a user expresses confusion or uncertainty—asking questions like, "Wait, why did it do that?", "Is it stuck?", or "Did it hear me?"—a timestamp is recorded. These moments of vocalized confusion signal user disorientation and a perceived loss of control. For instance, in a study for a healthcare scheduling assistant, participants observed the AI booking an appointment. A static four-second pause on the screen consistently elicited questions like, "Is it checking my calendar or the doctor’s?"

This question highlighted a critical missing transparency moment. The four-second wait needed to be segmented into two distinct steps: "Checking your availability" followed by "Syncing with provider schedule." This small but significant change measurably reduced users’ reported anxiety levels.
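The timestamps collected during a "Wait, Why?" session can be aggregated to find moments where several participants were confused at once. The following is a rough sketch under stated assumptions: timestamps are seconds from the start of the task, the five-second window and two-participant minimum are arbitrary defaults, and fixed windows can split a cluster that straddles a boundary.

```python
from collections import defaultdict


def confusion_hotspots(sessions, window_seconds=5, min_participants=2):
    """Find time windows where multiple participants voiced confusion.

    sessions maps a participant id to a list of timestamps (seconds from
    task start) at which that participant asked a "Wait, why?" question.
    Returns sorted (window_start, participant_count) tuples.
    """
    participants_by_window = defaultdict(set)
    for participant, timestamps in sessions.items():
        for t in timestamps:
            window_start = int(t // window_seconds) * window_seconds
            participants_by_window[window_start].add(participant)
    return sorted(
        (start, len(people))
        for start, people in participants_by_window.items()
        if len(people) >= min_participants
    )
```

A window that recurs across participants is a strong candidate for a missing transparency moment; a question raised by one person at one point may only be noise.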

Transparency fails when it merely describes a system action without connecting it to the user’s overarching goal. A message like "Checking your availability" is insufficient; it lacks context. Users understand that a calendar is being consulted, but not why. The solution lies in pairing the action with its intended outcome. The system should articulate: "Checking your calendar to find open times," followed by "Syncing with the provider’s schedule to secure your appointment." This grounds the technical process in the user’s real-world objective.

Consider an AI managing inventory for a local cafe. When a supply shortage occurs, messages like "Contacting vendor" or "Reviewing options" can induce anxiety. The manager might fear the order is being canceled or an exorbitantly priced alternative is being sourced. A more effective approach is to communicate the intended result: "Evaluating alternative suppliers to maintain your Friday delivery schedule." This clearly informs the user about the AI’s objective.

Operationalizing the Audit: Cross-Functional Collaboration

Having completed the Decision Node Audit and filtered the results through the Impact/Risk Matrix, a list of essential transparency moments is established. The subsequent step involves translating these moments into tangible UI elements, a process that necessitates robust cross-departmental collaboration. Designing transparency is not a solo endeavor confined to design tools; it requires a deep understanding of the system’s backend operations.

The process begins with a Logic Review. Designers should meet with lead system architects, bringing their decision node maps. The objective is to confirm whether the system can indeed expose the desired states. Often, the technical system may not natively expose the precise state needed for a user-facing update. Engineers might report a general "working" status, necessitating a push for more granular updates—a specific notification when the system transitions from text reading to rule checking, for example. Without this technical linkage, the design is unimplementable.

Next, the Content Design team becomes integral. While engineers provide the technical rationale for the AI’s actions, content designers craft clear, human-friendly explanations. A developer’s output might be a technically accurate but user-opaque string like "Executing function 402." A designer’s initial attempt might be overly vague, such as "Thinking." A content strategist bridges this gap, developing precise phrases like "Scanning for liability risks" that convey the AI’s activity without causing confusion.

Finally, testing the transparency of these messages is crucial. This testing should not be deferred until the final product is built. Comparative tests on simple prototypes, where only the status messages differ, can reveal significant insights. For example, presenting one user group with "Verifying identity" and another with "Checking government databases" and then asking which AI feels safer can expose how specific wording impacts user trust. The phrasing of these messages must be treated as a testable and optimizable element.

How This Changes the Design Process

Incorporating these audits fundamentally strengthens team collaboration. The traditional handoff of polished design files is augmented by a more iterative process involving messy prototypes and shared spreadsheets. The central tool becomes a "transparency matrix," a collaborative document where engineers and content designers map exact technical codes to user-facing language.

Friction during the logic review phase is common and productive. A designer might inquire how an AI decides to decline a transaction on an expense report. If the engineer responds with a generic "Error: Missing Data," the designer can advocate for more actionable information. This negotiation can lead to the engineer implementing a new rule so the system reports precisely what is missing, such as a missing receipt image.
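The outcome of that negotiation might resemble the sketch below, where a generic "missing data" failure is replaced by a message naming what is absent. The field names, labels, and message format are hypothetical; only the missing receipt image example comes from the scenario above.

```python
from typing import List, Optional

# Hypothetical required fields, mapped to the label shown to the user.
REQUIRED_FIELDS = {
    "amount": "expense amount",
    "merchant": "merchant name",
    "receipt_image": "receipt image",
}


def missing_items(report: dict) -> List[str]:
    """List user-facing labels for required fields that are absent or empty."""
    return [
        label
        for key, label in REQUIRED_FIELDS.items()
        if report.get(key) in (None, "")
    ]


def decline_reason(report: dict) -> Optional[str]:
    """Return an actionable decline message, or None if nothing is missing."""
    missing = missing_items(report)
    if not missing:
        return None
    return "Declined: missing " + ", ".join(missing) + "."
```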

Content designers act as vital translators. A technically accurate string like "Calculating confidence threshold for vendor matching" can be transformed by a content designer into a user-centric phrase such as "Comparing local vendor prices to secure your Friday delivery," clearly articulating both the action and its intended outcome.
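A transparency matrix can start life as a shared spreadsheet and later become a small lookup in code. The sketch below assumes a hypothetical set of technical event codes; the user-facing phrases are the ones used in this article, each stored as an action paired with its intended outcome.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional


@dataclass(frozen=True)
class TransparencyEntry:
    action: str   # what the system is doing
    outcome: str  # why it matters to the user

    def user_message(self) -> str:
        return f"{self.action} to {self.outcome}"


# Hypothetical event codes mapped to copy agreed with content design.
TRANSPARENCY_MATRIX = {
    "vendor_match.confidence_threshold": TransparencyEntry(
        "Comparing local vendor prices", "secure your Friday delivery"),
    "calendar.check_user": TransparencyEntry(
        "Checking your calendar", "find open times"),
    "calendar.sync_provider": TransparencyEntry(
        "Syncing with the provider's schedule", "secure your appointment"),
}


def translate(code: str) -> Optional[str]:
    """Return user-facing copy, or None so raw codes never reach the UI."""
    entry = TRANSPARENCY_MATRIX.get(code)
    return entry.user_message() if entry else None


def unmapped(codes: Iterable[str]) -> List[str]:
    """List codes that still need copy, as an agenda for the next review."""
    return [c for c in codes if c not in TRANSPARENCY_MATRIX]
```

Returning `None` for unknown codes is a deliberate choice in this sketch: a string like "Executing function 402" stays in the logs instead of leaking into the interface.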

Cross-functional teams participating in user testing sessions gain invaluable insights. Witnessing a user panic when a screen displays "Executing trade" can prompt a collective reevaluation. Engineers and designers can then align on improved wording, perhaps changing the text to "Verifying sufficient funds" before a stock purchase. Collaborative testing ensures the final interface serves both system logic and user peace of mind.

While incorporating these activities requires an investment of time, the outcome is a more cohesive team that communicates openly, leading to users who better understand what their AI-powered tools are doing on their behalf. This integrated approach is foundational to designing truly trustworthy AI experiences.

Trust is a Design Choice

Trust is often perceived as an emotional byproduct of a good user experience. However, it can be more effectively viewed as a mechanical result of predictable and transparent communication. Trust is cultivated by presenting the right information at the opportune moment. It is eroded by overwhelming users or by completely obscuring the underlying mechanisms.

The Decision Node Audit is the crucial starting point, particularly for agentic AI tools. The fundamentals are to identify the moments where the system exercises judgment, map them to the Impact/Risk Matrix, and then open the "black box" to "show the work" when stakes are high. The next article will cover the practicalities of designing these transparency moments: crafting effective copy, structuring the UI, and handling the errors that inevitably occur when the agent makes a mistake.

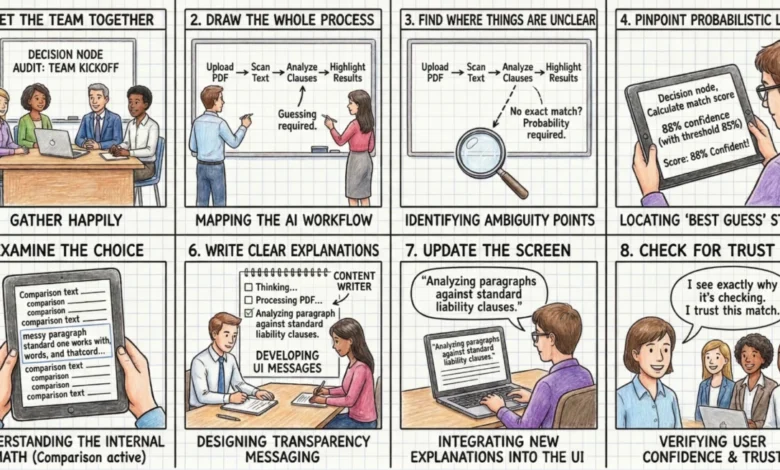

Appendix: The Decision Node Audit Checklist

Phase 1: Setup and Mapping

- Assemble the Team: Gather product owners, business analysts, designers, key decision-makers, and the engineers who developed the AI. Engineers are essential for explaining the backend logic.

- Map the Entire Process: Document every step the AI takes, from the user’s initial action to the final output. A physical whiteboard session is often most effective for this initial mapping.

Phase 2: Locating the Hidden Logic

- Identify Ambiguity Points: Review the process map for any steps where the AI compares options or inputs without a single, definitive perfect match.

- Pinpoint Probabilistic Decisions: For each ambiguous point, ascertain if the system uses a confidence score (e.g., "85% sure"). These are the AI’s final choice points.

- Examine the Decision Logic: For each choice point, determine the specific internal calculation or comparison being performed (e.g., matching a contract clause to a policy, or comparing a damaged car image to a database).
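The Phase 2 checks can be captured in a simple process-map structure so the audit's findings outlive the whiteboard. This is a hedged sketch: the `ProcessStep` fields and the helper functions are hypothetical, chosen to mirror the three checklist items above.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ProcessStep:
    name: str
    compares_without_exact_match: bool    # ambiguity point?
    uses_confidence_score: bool           # probabilistic decision?
    decision_logic: Optional[str] = None  # the comparison being performed


def choice_points(steps: List[ProcessStep]) -> List[ProcessStep]:
    """Steps that are both ambiguous and resolved by a confidence score."""
    return [
        s for s in steps
        if s.compares_without_exact_match and s.uses_confidence_score
    ]


def undocumented(steps: List[ProcessStep]) -> List[str]:
    """Choice points whose decision logic has not yet been written down."""
    return [s.name for s in choice_points(steps) if not s.decision_logic]
```

Running `undocumented` over the map gives the team a concrete list of questions to bring back to engineering before Phase 3 begins.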

Phase 3: Creating the User Experience

- Craft Clear Explanations: Develop user-facing messages that clearly describe the specific internal action occurring when the AI makes a choice. Ground these messages in concrete reality (e.g., if an AI books a meeting, state it’s checking the reservation system).

- Update the Interface: Integrate these clear explanations into the user interface, replacing vague messages like "Reviewing contracts" with your specific descriptions.

- Ensure Trust: Verify that the new screen messages provide users with a clear rationale for any wait times or results, fostering confidence and trust. Test these messages with actual users to confirm their understanding of the achieved outcome.