The two-pass compiler is back – this time, it’s fixing AI code generation

The Stochastic Crisis in Modern Software Engineering

The rapid rise of tools like GitHub Copilot, OpenAI’s GPT-4, and Anthropic’s Claude has fundamentally altered the developer experience. According to recent industry surveys, nearly 90% of developers now report using some form of AI assistance in their daily workflows. However, the speed these tools bring comes at a cost. Traditional software engineering is built on the bedrock of determinism: for any given input, the system must produce a predictable and repeatable output. LLMs, by contrast, are probabilistic engines. They generate code by sampling each next token from a probability distribution learned from vast datasets, which means prompting an AI model twice with the exact same requirements can result in two structurally different blocks of code.

In a hobbyist or prototyping context, this variability is a minor inconvenience. In an enterprise setting, where code must adhere to strict security protocols, performance benchmarks, and architectural standards, it is a liability. A single "hallucinated" library call or a misplaced null check generated by an LLM can lead to production outages, security vulnerabilities, and significant technical debt. This "reliability crisis" has led to a growing demand for systems that can harness the reasoning power of AI while enforcing the structural discipline of classical engineering.

A Historical Parallel: The Evolution of Compilers

To understand the solution, one must look at the history of computer science. In the early days of programming, compilers were often "single-pass." They read the source code and immediately translated it into machine instructions. While efficient in terms of memory usage—a critical constraint in the 1950s and 60s—single-pass compilers were notoriously brittle. They struggled with complex optimizations and offered poor error handling because they lacked a holistic "understanding" of the program’s structure before they began emitting code.

The industry’s breakthrough came in the 1970s with the widespread adoption of the multi-pass compiler. This architecture introduced a critical separation of concerns. The first pass (the frontend) would analyze the source code, check for syntax and semantic errors, and then translate it into an "Intermediate Representation" (IR). The second pass (the backend) would then take this IR and optimize it for the specific hardware architecture, emitting the final machine code. This transition allowed for the development of robust, portable, and highly optimizing compilers for languages like C and C++, and eventually Java and C#.
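
The frontend/backend split can be illustrated with a toy example. The sketch below is only an illustration under stated assumptions: it handles integer arithmetic, borrows Python's own `ast` parser for the frontend, and uses a made-up postfix IR of `(opcode, operand)` tuples and a made-up stack-machine syntax for the backend. The names `frontend`, `backend`, and `Instruction` are hypothetical.

```python
import ast
from typing import List, Optional, Tuple

# Hypothetical IR: a postfix list of (opcode, operand) tuples.
Instruction = Tuple[str, Optional[int]]

_BINOPS = {ast.Add: "ADD", ast.Sub: "SUB", ast.Mult: "MUL"}


def frontend(source: str) -> List[Instruction]:
    """Pass 1: parse and check the source, then lower it to the IR."""
    tree = ast.parse(source, mode="eval")  # raises SyntaxError on malformed input
    ir: List[Instruction] = []

    def lower(node: ast.AST) -> None:
        if isinstance(node, ast.Constant) and type(node.value) is int:
            ir.append(("PUSH", node.value))
        elif isinstance(node, ast.BinOp) and type(node.op) in _BINOPS:
            lower(node.left)
            lower(node.right)
            ir.append((_BINOPS[type(node.op)], None))
        else:
            # Semantic check: anything outside the tiny language is rejected
            # before any target code is emitted.
            raise ValueError(f"unsupported construct: {type(node).__name__}")

    lower(tree.body)
    return ir


def backend(ir: List[Instruction]) -> str:
    """Pass 2: translate the IR into (made-up) stack-machine text."""
    lines = []
    for opcode, operand in ir:
        if operand is None:
            lines.append(opcode.lower())
        else:
            lines.append(f"{opcode.lower()} {operand}")
    return "\n".join(lines)


if __name__ == "__main__":
    print(backend(frontend("1 + 2 * 3")))
```

Because the backend only ever sees the IR, a different target could be supported by swapping `backend` without touching the parsing and checking logic.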

Today’s LLM-based code generation is, architecturally speaking, stuck in the single-pass era. A user provides a prompt, and the model attempts to generate the final, framework-specific code in one go. By bypassing the intermediate analysis phase, the model is forced to handle both the high-level logic of the application and the low-level syntax of the programming language simultaneously, which is where most errors occur.

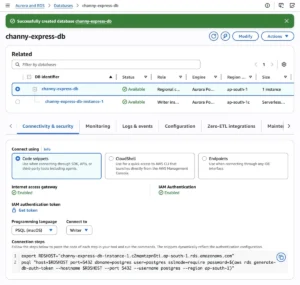

The Two-Pass Framework for AI Code Generation

The emerging solution to the AI reliability crisis is the implementation of a two-pass model that mirrors the classical compiler. This approach splits the code generation process into two distinct phases: one cognitive and one deterministic.

Pass 1: The Cognitive Intent Phase

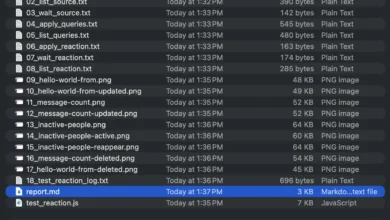

In the first pass, the LLM is not asked to write production code (such as React, Java, or Python). Instead, its role is restricted to understanding the developer’s intent and mapping out the logical structure of the solution. The output of this phase is a strictly defined Intermediate Representation—a meta-language or structured markup (like JSON or a specialized DSL) that captures the "what" of the request without worrying about the "how."

Because the LLM is constrained to a schema-validated IR, the risk of hallucination is drastically reduced. The model cannot invent new syntax or call non-existent libraries, because the IR format has no way to express them: anything outside the schema’s vocabulary is rejected. If the LLM emits something that does not fit the schema, the pass fails immediately, providing a clear point of intervention.
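
A minimal sketch of such a validation gate might look like the following. It assumes a JSON-based IR describing a UI screen; the vocabulary (`text_input`, `button`, the `submit` and `cancel` actions) and the names `validate_ir` and `IRValidationError` are invented for illustration and are not taken from any particular product. A real system would more likely rely on a dedicated schema language than on hand-written checks.

```python
import json

# Hypothetical IR vocabulary: the only component types and fields pass 1 may emit.
ALLOWED_COMPONENTS = {
    "text_input": {"name", "label"},
    "button": {"name", "label", "action"},
}
ALLOWED_ACTIONS = {"submit", "cancel"}


class IRValidationError(ValueError):
    """Raised when model output does not conform to the IR schema."""


def validate_ir(raw: str) -> dict:
    """Parse model output and reject anything outside the allowed vocabulary."""
    try:
        ir = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise IRValidationError(f"not valid JSON: {exc}") from exc

    if not isinstance(ir, dict) or set(ir) != {"screen", "components"}:
        raise IRValidationError("top level must contain exactly 'screen' and 'components'")
    if not isinstance(ir["screen"], str) or not isinstance(ir["components"], list):
        raise IRValidationError("'screen' must be a string and 'components' a list")

    for index, component in enumerate(ir["components"]):
        if not isinstance(component, dict):
            raise IRValidationError(f"component {index}: must be an object")
        kind = component.get("type")
        if not isinstance(kind, str) or kind not in ALLOWED_COMPONENTS:
            raise IRValidationError(f"component {index}: unknown type {kind!r}")
        extra = set(component) - ALLOWED_COMPONENTS[kind] - {"type"}
        if extra:
            raise IRValidationError(f"component {index}: unexpected fields {sorted(extra)}")
        if kind == "button":
            action = component.get("action")
            if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
                raise IRValidationError(f"component {index}: unknown action {action!r}")
    return ir
```

An invented component type such as `"magic_widget"` fails here before any code is generated, which is the point of intervention the text describes. Note that a gate like this only constrains structure and vocabulary; it does not check that the IR matches what the developer actually asked for.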

Pass 2: The Deterministic Generation Phase

The second pass is where the actual production code is created. Crucially, this pass does not involve an LLM. Instead, it uses a traditional, deterministic code generator—a "transpiler" of sorts—that takes the validated IR and transforms it into optimized, framework-specific code.

Whether the target is an Angular frontend, a Spring Boot backend, or a React Native mobile app, the output is guaranteed to be consistent. This pass handles the "heavy lifting" of engineering: injecting security headers, applying CSS standards, enforcing data validation patterns, and ensuring compatibility with existing enterprise libraries. Because this phase is algorithmic, it produces the same output for the same IR every time.
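
To make the idea concrete, here is a small sketch of a template-driven generator. It assumes an already validated IR shaped as a screen with a list of components, and it targets plain HTML purely for brevity. The `TEMPLATES` table, the `data-action` attribute, and the function name `generate_form` are hypothetical choices for this example, not part of any specific framework.

```python
from html import escape

# Hypothetical fixed templates: one per IR component type.
TEMPLATES = {
    "text_input": '<label>{label}<input name="{name}" required></label>',
    "button": '<button name="{name}" data-action="{action}">{label}</button>',
}


def generate_form(ir: dict) -> str:
    """Pass 2: turn a validated IR into markup using fixed templates only."""
    lines = ['<form id="{}">'.format(escape(ir["screen"], quote=True))]
    for component in ir["components"]:
        template = TEMPLATES[component["type"]]
        # Every value from the IR is escaped before it reaches the output,
        # so the generator, not the model, decides what is safe to emit.
        fields = {
            key: escape(str(value), quote=True)
            for key, value in component.items()
            if key != "type"
        }
        lines.append("  " + template.format(**fields))
    lines.append("</form>")
    return "\n".join(lines)


if __name__ == "__main__":
    example_ir = {
        "screen": "login",
        "components": [
            {"type": "text_input", "name": "email", "label": "Email"},
            {"type": "button", "name": "go", "label": "Sign in", "action": "submit"},
        ],
    }
    print(generate_form(example_ir))
```

There is no sampling and no model call in this pass: the function is a pure mapping from IR to text, so running it twice on the same IR yields byte-identical output.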

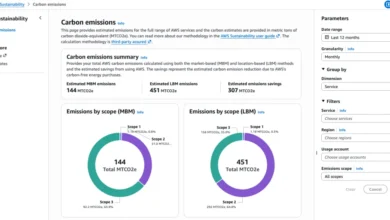

Supporting Data and Technical Implications

The shift toward two-pass AI architectures is supported by emerging data regarding code quality and security. A 2023 study on AI-generated code found that approximately 38% of snippets contained at least one security vulnerability or architectural flaw when generated in a single pass. In contrast, systems using a structured intermediate layer saw a 70% reduction in syntax errors and a near-total elimination of "hallucinated" API calls.

Furthermore, the two-pass model addresses the issue of "context drift." In large-scale development, maintaining context across thousands of lines of code is difficult for LLMs. By using an IR as a persistent source of truth, developers can iterate on specific components without having to re-prompt the entire system. The IR acts as a bridge, allowing the AI to "remember" the structural logic of the application even as the underlying framework code is updated or swapped out.
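
One way to picture the IR as a persistent source of truth is to store it per component and regenerate only what changed. The sketch below assumes the IR is a dictionary keyed by component name; the helper names `fingerprint` and `changed_components` are hypothetical, and hashing a canonical JSON form is just one possible way to detect changes.

```python
import hashlib
import json
from typing import Dict, List


def fingerprint(component: dict) -> str:
    """Stable hash of one component's IR (keys sorted so ordering is irrelevant)."""
    canonical = json.dumps(component, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def changed_components(old_ir: Dict[str, dict], new_ir: Dict[str, dict]) -> List[str]:
    """Names of components that are new or whose IR differs from the stored version."""
    return sorted(
        name
        for name, component in new_ir.items()
        if name not in old_ir or fingerprint(old_ir[name]) != fingerprint(component)
    )


if __name__ == "__main__":
    stored = {
        "header": {"type": "banner", "title": "Orders"},
        "form": {"type": "form", "fields": ["email"]},
    }
    updated = {
        "header": {"title": "Orders", "type": "banner"},  # same content, different key order
        "form": {"type": "form", "fields": ["email", "phone"]},
        "footer": {"type": "banner", "title": "Help"},
    }
    print(changed_components(stored, updated))
```

Only the components this function reports would need to go back through the generator, and only their slice of the IR would need to be shown to the model when the developer asks for a change.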

Official Responses and Industry Sentiment

The tech community has reacted with cautious optimism to this "return to basics." Leading figures in the DevOps and software architecture space have noted that while the "magic" of AI is impressive, "engineering is about predictability, not magic."

"The industry is moving past the ‘wow’ phase of AI code generation and into the ‘how do we actually deploy this’ phase," says a lead architect at a major Silicon Valley firm. "The two-pass compiler model provides the guardrails that enterprise compliance teams require. It allows us to treat AI as a high-level designer while keeping the actual construction of the code within a controlled, auditable environment."

Security professionals have also voiced support for the architecture. By isolating the LLM from the final code output, organizations can implement "security by design." The deterministic second pass can be programmed to automatically strip out any unsafe patterns or inject required security settings, effectively creating a "hardened" development pipeline that is far less exposed to the common pitfalls of probabilistic AI.
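
As a rough illustration of what "strip and inject" could mean in practice, the sketch below hardens a hypothetical IR entry describing an HTTP endpoint. The field allowlist and the function name `harden_endpoint` are assumptions made for this example. The headers themselves are standard HTTP response headers, but the particular set and values shown are only one plausible policy.

```python
# Hypothetical policy: the only fields an endpoint IR entry may carry.
ALLOWED_FIELDS = {"path", "method", "handler", "headers"}

# Headers the generator always sets, regardless of what the IR says.
MANDATORY_HEADERS = {
    "X-Content-Type-Options": "nosniff",
    "X-Frame-Options": "DENY",
    "Strict-Transport-Security": "max-age=31536000; includeSubDomains",
}


def harden_endpoint(endpoint: dict) -> dict:
    """Return a copy of the endpoint IR with unknown fields dropped and policy headers enforced."""
    safe = {key: value for key, value in endpoint.items() if key in ALLOWED_FIELDS}

    mandatory_lower = {name.lower() for name in MANDATORY_HEADERS}
    # Keep only IR-supplied headers that do not collide (case-insensitively)
    # with a mandatory header, so the IR cannot weaken the policy.
    headers = {
        name: value
        for name, value in safe.get("headers", {}).items()
        if name.lower() not in mandatory_lower
    }
    headers.update(MANDATORY_HEADERS)
    safe["headers"] = headers
    return safe


if __name__ == "__main__":
    from_model = {
        "path": "/orders",
        "method": "GET",
        "handler": "list_orders",
        "raw_sql": "SELECT * FROM orders",  # not in the allowlist, so it is dropped
        "headers": {"x-frame-options": "ALLOWALL", "Cache-Control": "no-store"},
    }
    print(harden_endpoint(from_model))
```

Because the policy lives in ordinary code rather than in a prompt, it can be reviewed, versioned, and tested like any other part of the build pipeline.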

Broader Impact and the Future of Development

The implications of applying 1970s compiler logic to 21st-century AI are profound. It suggests that the path forward for artificial intelligence in professional fields is not necessarily "smarter" models, but better "architectural containment."

As this model gains traction, we can expect to see several shifts in the software industry:

- Standardization of IRs: Just as LLVM IR became a widely used intermediate representation for compilers, we may see the emergence of industry-standard "AI Meta-Languages" designed specifically for LLM-driven code generation.

- The Rise of "Agentic" Workflows: The two-pass system is a precursor to more complex agentic workflows, where multiple AI agents handle different passes of a project, each constrained by deterministic validation layers.

- Decreased Technical Debt: By enforcing structural correctness at the IR level, companies can avoid the "spaghetti code" often associated with early-stage AI experiments, leading to more maintainable long-term codebases.

Ultimately, the marriage of stochastic AI brilliance and deterministic engineering rigor represents the maturation of the field. It acknowledges that while LLMs can reason and design with human-like fluidity, the machines that run our world still require the unwavering precision of a compiler. Deterministic software engineering isn’t just a relic of the past; it is the essential framework that will allow the AI-driven future to function with the reliability we have come to expect from our digital infrastructure. Turning back to the 1970s may, ironically, be the most forward-thinking move the software industry can make.