AWS Enhances Generative AI Governance with Bedrock Cost Allocation Tools and the Debut of Claude Mythos Cybersecurity Model

Amazon Web Services (AWS) has introduced a suite of new features for Amazon Bedrock designed to address the primary hurdles facing enterprise generative artificial intelligence (AI) adoption: cost transparency, specialized security capabilities, and centralized resource governance. Chief among these updates is the launch of cost allocation for Amazon Bedrock via Identity and Access Management (IAM) users and roles, a feature that provides organizations with granular visibility into model inference spending. Alongside this financial management tool, AWS announced the preview of Anthropic’s Claude Mythos, a sophisticated AI model specifically engineered for cybersecurity, and the AWS Agent Registry, a centralized catalog for managing AI agents and tools.

These developments come at a critical juncture for the cloud industry. As organizations transition from the experimental "proof-of-concept" phase of generative AI to full-scale production, the demand for robust administrative controls has intensified. By integrating financial tracking and specialized security models into the Bedrock ecosystem, AWS aims to provide a comprehensive framework for the AI-Driven Development Lifecycle (AI-DLC).

Granular Cost Visibility for Generative AI Workloads

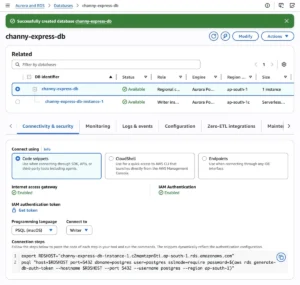

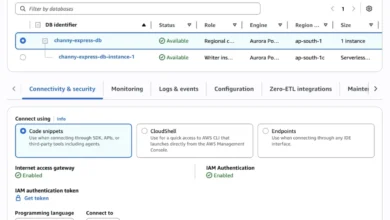

The introduction of cost allocation by IAM user and role for Amazon Bedrock marks a significant shift in how enterprises manage their cloud budgets. Previously, attributing costs to individual teams or projects within a shared AWS account was a manual process that often required custom scripts to parse large billing datasets. With this update, administrators can tag IAM principals (the users or roles making API calls) with metadata such as "department," "cost-center," or "project-id."

Once these tags are activated in the AWS Billing and Cost Management console, the tagged spend becomes available in AWS Cost Explorer and the AWS Cost and Usage Report (CUR). Finance leaders can then compare, for example, how much a research team spends on foundation model (FM) inference against what a customer support team spends running automated agents.
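As a rough illustration of what such a query might look like, the sketch below builds the arguments for Cost Explorer's `GetCostAndUsage` API, grouped by a tag key. The function name `build_bedrock_cost_query` is hypothetical, and two details are assumptions rather than facts from the announcement: that the IAM principal tag surfaces under the same key it was given, and that the service appears as "Amazon Bedrock" in billing data. Check both against your own account.

```python
from datetime import date


def build_bedrock_cost_query(start: date, end: date, tag_key: str) -> dict:
    """Build keyword arguments for Cost Explorer's GetCostAndUsage API."""
    if end <= start:
        raise ValueError("end must be after start (the end date is exclusive)")
    return {
        "TimePeriod": {"Start": start.isoformat(), "End": end.isoformat()},
        "Granularity": "MONTHLY",
        "Metrics": ["UnblendedCost"],
        # Assumption: the service name as it appears in your billing data.
        "Filter": {"Dimensions": {"Key": "SERVICE", "Values": ["Amazon Bedrock"]}},
        # Assumption: the principal tag is exposed under its original key.
        "GroupBy": [{"Type": "TAG", "Key": tag_key}],
    }


query = build_bedrock_cost_query(date(2026, 4, 1), date(2026, 5, 1), "cost-center")

# With credentials configured, the request could be sent with boto3:
#   import boto3
#   boto3.client("iam").tag_role(
#       RoleName="research-inference",  # hypothetical role name
#       Tags=[{"Key": "cost-center", "Value": "research"}],
#   )
#   response = boto3.client("ce").get_cost_and_usage(**query)
```

Building the request as a plain dictionary keeps the example runnable without AWS credentials; only the commented lines touch a real account.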

The release addresses a common concern. Industry data suggests that, even as AI adoption surges, many CFOs remain wary of the "black box" nature of AI spending: token-based pricing scales with usage, so a spike in traffic can produce an unexpectedly large monthly bill. By showing which internal stakeholders are driving costs, AWS is enabling companies to implement internal chargeback models and forecast their AI investments more accurately. This visibility is particularly important for organizations deploying high-volume tools like Claude Code on Amazon Bedrock, where frequent code analysis and generation can generate significant inference traffic.
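To make the chargeback idea concrete, here is a minimal sketch that converts token counts into cost and totals it by department tag. The per-token prices, the record fields, and the usage figures are all invented for illustration; real prices vary by model and region, and real billing data has a different shape.

```python
from collections import defaultdict
from decimal import Decimal

# Hypothetical prices in USD per 1,000 tokens (not real Bedrock pricing).
PRICE_PER_1K = {"input": Decimal("0.003"), "output": Decimal("0.015")}


def inference_cost(input_tokens: int, output_tokens: int) -> Decimal:
    """Token-based cost: each direction is billed per 1,000 tokens."""
    return (
        Decimal(input_tokens) / 1000 * PRICE_PER_1K["input"]
        + Decimal(output_tokens) / 1000 * PRICE_PER_1K["output"]
    )


def chargeback(records: list) -> dict:
    """Sum inference cost per department; untagged usage gets its own bucket."""
    totals = defaultdict(Decimal)
    for record in records:
        team = record.get("department") or "untagged"
        totals[team] += inference_cost(record["input_tokens"], record["output_tokens"])
    return dict(totals)


# Invented usage records, one per tagged IAM principal and billing period.
usage = [
    {"department": "research", "input_tokens": 2_000_000, "output_tokens": 400_000},
    {"department": "support", "input_tokens": 500_000, "output_tokens": 100_000},
    {"department": "research", "input_tokens": 1_000_000, "output_tokens": 200_000},
    {"department": None, "input_tokens": 100_000, "output_tokens": 0},
]

totals = chargeback(usage)
for team, cost in sorted(totals.items()):
    print(f"{team}: ${cost:.2f}")
```

The "untagged" bucket is a deliberate choice: spend from principals without tags stays visible instead of silently disappearing from the report.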

Claude Mythos: A New Frontier in Cybersecurity AI

In a strategic expansion of its partnership with Anthropic, AWS has unveiled the research preview of Claude Mythos. Accessible through "Project Glasswing," Claude Mythos represents Anthropic’s most advanced model to date, specifically optimized for high-stakes cybersecurity tasks. Unlike general-purpose large language models (LLMs) that may struggle with the intricacies of malicious code patterns or complex network architectures, Claude Mythos is designed to identify sophisticated security vulnerabilities that traditional automated scanners might miss.

The model is positioned for analyzing massive codebases, identifying zero-day vulnerabilities, and proposing remediation strategies. For security operations centers (SOCs), it offers a tool for proactive defense: it can be used to simulate adversarial attacks, audit critical infrastructure software, and reason through complex security incidents.

However, the power of Claude Mythos has prompted a controlled rollout strategy. Access is currently restricted to a curated allowlist of organizations, with AWS and Anthropic prioritizing "internet-critical" companies—such as major telecommunications providers and financial institutions—and key open-source maintainers. This gated approach reflects a growing industry trend toward "responsible AI" deployment, ensuring that highly capable security models are used for defensive purposes rather than being co-opted for malicious exploit development.

Centralized Governance through the AWS Agent Registry

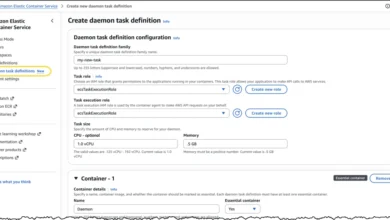

As the use of AI agents—autonomous systems that can perform tasks by calling APIs and utilizing external tools—proliferates within enterprises, organizations are facing a new challenge: "agent sprawl." To combat this, AWS has launched the Agent Registry within Amazon Bedrock AgentCore.

Currently in preview, the Agent Registry serves as a private, internal catalog where developers can discover, share, and manage AI agents, skills, and Model Context Protocol (MCP) servers. The registry functions as a "single source of truth," preventing redundant development by allowing teams to search for existing capabilities using both semantic and keyword-based queries.

Key features of the Agent Registry include:

- Discovery and Reuse: Developers can find pre-approved agents or tools that perform specific tasks, such as querying a proprietary database or processing insurance claims.

- Approval Workflows: Organizations can implement governance gates, requiring security or compliance reviews before an agent is published to the registry.

- Auditability: Every interaction with the registry is logged via AWS CloudTrail, providing a clear audit trail for compliance and troubleshooting.

- Interoperability: The registry is accessible via the AWS Management Console, CLI, and SDKs, and can even be queried directly from Integrated Development Environments (IDEs) as an MCP server.

By centralizing these resources, AWS is providing the infrastructure necessary for "Agentic Workflows," where multiple specialized AI systems work in tandem to solve complex business problems.
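The discovery and approval features described above can be pictured with a small in-memory model. This is a toy sketch, not the Agent Registry API: the class names, fields, and entries are hypothetical, only keyword matching is modeled, and the semantic search mentioned earlier is left out.

```python
from dataclasses import dataclass, field


@dataclass
class RegistryEntry:
    name: str
    kind: str          # e.g. "agent", "skill", or "mcp-server"
    description: str
    approved: bool = False   # entries start out pending review


@dataclass
class ToyRegistry:
    entries: dict = field(default_factory=dict)

    def submit(self, entry: RegistryEntry) -> None:
        """Add an entry in the pending state."""
        self.entries[entry.name] = entry

    def approve(self, name: str) -> None:
        """Governance gate: a reviewer marks the entry as publishable."""
        self.entries[name].approved = True

    def search(self, query: str) -> list:
        """Return names of approved entries, best keyword match first."""
        terms = query.lower().split()
        hits = []
        for entry in self.entries.values():
            if not entry.approved:
                continue  # pending entries are not discoverable
            text = f"{entry.name} {entry.description}".lower()
            score = sum(term in text for term in terms)
            if score:
                hits.append((score, entry.name))
        return [name for _, name in sorted(hits, key=lambda h: (-h[0], h[1]))]


registry = ToyRegistry()
registry.submit(RegistryEntry("claims-processor", "agent", "Processes insurance claims end to end"))
registry.submit(RegistryEntry("orders-db-query", "skill", "Queries the proprietary orders database"))
registry.submit(RegistryEntry("claims-draft", "agent", "Experimental insurance claims summarizer"))
registry.approve("claims-processor")
registry.approve("orders-db-query")

claims_hits = registry.search("insurance claims")
database_hits = registry.search("database")
```

Here `claims-draft` matches the query but never appears in results, because it has not passed the approval step.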

Chronology of AWS Generative AI Evolution

The announcements this week represent a milestone in a multi-year trajectory for AWS’s generative AI strategy.

- April 2023: AWS first announced Amazon Bedrock, positioning it as a serverless way to access foundation models from Amazon, Anthropic, AI21 Labs, and others.

- Late 2024: The focus shifted toward Retrieval-Augmented Generation (RAG) and knowledge bases, allowing companies to ground AI in their private data.

- 2025: Building on the existing Bedrock Agents and Guardrails features, AWS previewed Amazon Bedrock AgentCore, moving the platform from simple chat interfaces toward functional, autonomous systems with built-in safety filters.

- March-April 2026: The current phase emphasizes the "Industrialization of AI." The launch of IAM-based cost allocation and the Agent Registry indicates that AWS is now focusing on the operational excellence and FinOps (Financial Operations) required for mature, large-scale AI deployments.

Analysis of Implications for the Enterprise

The convergence of cost management and specialized security models suggests that the cloud market is entering a phase of "Generative AI Realism." In 2023 and 2024, the primary concern for most enterprises was simply gaining access to the most powerful models. In 2026, the conversation has shifted toward sustainability, security, and ROI.

FinOps for AI: The ability to track costs at the IAM level is a direct response to the FinOps movement, which seeks to bring financial accountability to the variable spend of cloud computing. As AI inference becomes a larger portion of the IT budget, the "who, what, and where" of spending becomes non-negotiable for the C-suite.

The Cybersecurity Arms Race: The release of Claude Mythos highlights the escalating role of AI in cybersecurity. As bad actors use AI to generate more convincing phishing campaigns and automated malware, defenders must have access to models that understand the "logic" of an attack. By prioritizing internet-critical companies, AWS is positioning itself as a defender of the digital commons.

Standardization via MCP: The inclusion of Model Context Protocol (MCP) support in the Agent Registry is a significant technical detail. MCP is an open standard that allows AI models to connect to data sources and tools more easily. By backing this protocol, AWS is signaling its support for an interoperable AI ecosystem, making it easier for developers to move their agents and tools between different environments without vendor lock-in.
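For readers unfamiliar with the protocol, MCP messages are JSON-RPC 2.0 requests, with methods such as `tools/list` and `tools/call` for discovering and invoking tools. The sketch below only builds two such messages; the tool name `search_registry` and its arguments are hypothetical, and the initialization handshake and transport that a real session needs are omitted.

```python
import json
from itertools import count
from typing import Optional

_request_ids = count(1)  # JSON-RPC requests carry a unique id


def jsonrpc_request(method: str, params: Optional[dict] = None) -> str:
    """Serialize a JSON-RPC 2.0 request, the message format MCP uses."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


# Ask an MCP server which tools it exposes.
list_tools = jsonrpc_request("tools/list")

# Invoke one tool by name. The tool and its arguments are made up here.
call_tool = jsonrpc_request(
    "tools/call",
    {"name": "search_registry", "arguments": {"query": "insurance claims"}},
)

print(list_tools)
print(call_tool)
```

Because the message format is the same regardless of which vendor hosts the server, a client written against it does not need to change when the tool moves to a different environment.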

Broader Market Context and Official Responses

While AWS has not released specific pricing for Claude Mythos, industry analysts suggest that the model’s specialized nature and high reasoning capabilities will likely command a premium compared to general-purpose models like Claude 3.5 Sonnet.

In discussions regarding these updates, AWS representatives have emphasized that these tools are a direct result of customer feedback from "AI-DLC" workshops. These workshops, conducted throughout early 2026, revealed that while developers were excited about the capabilities of Bedrock, project managers and financial controllers felt they lacked the levers necessary to govern the technology properly.

"The goal is to remove the friction between innovation and administration," noted one AWS technical lead. "Developers want to build with the best models like Claude Mythos, but the organization needs to know that those models are being used efficiently and securely. These new features bridge that gap."

As the preview for Claude Mythos and the Agent Registry continues, AWS is expected to gather data from allowlisted organizations to refine model performance and registry governance features. For now, the message to the market is clear: the era of unmonitored AI experimentation is ending, replaced by a new standard of enterprise-grade management and specialized performance.

For organizations looking to implement these features, AWS has updated its IAM principal cost allocation documentation, providing step-by-step guides for tagging and activation. As AI continues to evolve from a novelty to a core utility, these administrative foundations will likely prove as important as the models themselves in determining the success of corporate AI strategies.