Stop Endless Debates: How Design Principles Drive Purposeful Creation in a Hype-Driven World

Design principles are more than rigid guidelines: they are tools for aligning teams around a shared vision, documenting an organization’s core values, and informing critical decisions. When rapid iteration and the allure of emerging technologies like AI can lead to superficial or inconsistent product development, well-defined design principles provide an anchor, ensuring that what is built is not only functional but also meaningful and aligned with a company’s ethos. This article looks at how to establish design principles, the role they play in design systems, and how they prevent endless debates rooted in subjective preferences.

The Imperative of Purposeful Design in the Modern Landscape

In today’s accelerated digital environment, passable designs and code can be generated within minutes. That ease of production raises the stakes on deciding what is actually worth the time and resources to design and build. Without a guiding framework, product development can become a series of disconnected, ad-hoc initiatives that lack coherence and fail to resonate with end users. The same is true of a company’s voice and tone: if they are not defined intentionally, user perception will define them instead, often in ways that are inconsistent or undesirable.

Design principles act as these intentional definitions. They are the agreed-upon guidelines and considerations that designers, developers, and product teams integrate into their workflow by default, minimizing the need for constant re-litigation of fundamental design choices. These principles serve as a compass, steering projects away from the pitfalls of fleeting trends, unsubstantiated assumptions, and the pressure for expedited delivery, particularly in the burgeoning field of AI.

A Key Resource for Navigating Design Principles

For those seeking to deepen their understanding and application of design principles, the online platform Principles.design by Ben Brignell stands out as an invaluable repository. This comprehensive resource curates an extensive collection of over 230 pointers, encompassing design principles and methodologies across a wide spectrum of domains, from language and infrastructure to hardware and organizational strategies. Its searchable and tagged format allows for efficient exploration and application of relevant concepts.

The Enduring Wisdom of Dieter Rams’ 10 Principles of Good Design

While numerous sets of design principles exist, the most impactful ones transcend mere aspiration. They possess a distinct point of view, clearly articulating not only what should be done but also what should be avoided. Crucially, they define what an organization or product stands for, extending beyond commercial imperatives and the pervasive noise of industry hype.

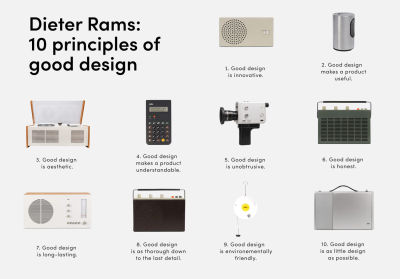

A seminal example of such impactful principles can be found in Dieter Rams’ "10 Principles of Good Design." Developed decades ago to inform and shape the design work at Braun, these principles offer a humble, practical, and tangible framework. Unlike grand, visionary pronouncements, Rams’ principles provide a clear overview of design intentions, highlighting the ambition and care embedded in the creation process. They are characterized by their honesty, sincerity, and a deeply humane approach to design.

These principles include:

- Good design is innovative: Technological development keeps opening new opportunities for innovative design.

- Good design makes a product useful: It satisfies practical needs.

- Good design is aesthetic: It is visually pleasing and contributes to the emotional appeal of the product.

- Good design makes a product understandable: It clarifies the product’s structure and function.

- Good design is unobtrusive: It is neutral and restrained, leaving room for the user’s self-expression.

- Good design is honest: It does not make a product more innovative, powerful, or valuable than it really is.

- Good design is long-lasting: It avoids being fashionable and therefore never appears antiquated.

- Good design is thorough down to the last detail: Nothing must be arbitrary.

- Good design is environmentally friendly: It conserves resources and minimizes pollution.

- Good design is as little design as possible: Less, but better.

The enduring relevance of Rams’ principles underscores the timeless nature of thoughtful design. They emphasize a commitment to user needs, ethical considerations, and a profound respect for materials and longevity, offering a stark contrast to the disposable nature of much contemporary design.

Establishing Effective Design Principles: A Collaborative Endeavor

While design principles can originate from individual insights, their true power is realized when they are collaboratively established and embraced by an entire product team. It is crucial to recognize that design principles are not solely the domain of designers; they influence every facet of the user experience, from performance and customer support to overall service delivery. Therefore, ideally, representatives from all relevant areas should participate in their development.

The process of establishing design principles can, in practice, appear daunting. The abstract and often ambiguous nature of these concepts, coupled with the diverse perspectives of team members, can lead to considerable challenges in reaching consensus. However, structured approaches can demystify this process.

A widely adopted method is a focused workshop, often drawing on formats proposed by Marcin Treder and Maria Meireles and on resources from Better. A typical 8-step workshop might proceed as follows:

- Define the "Why": Clearly articulate the purpose of establishing design principles and the desired outcomes. This sets the context and importance for the session.

- Brainstorming Core Values: Participants individually or in small groups brainstorm keywords and phrases that represent the team’s or organization’s core values, beliefs, and aspirations for their products.

- Affinity Mapping and Grouping: The brainstormed ideas are then organized into thematic clusters, identifying commonalities and overarching concepts.

- Drafting Principle Statements: For each significant cluster, the team collaboratively drafts concise, actionable statements that encapsulate the core idea. This often involves refining sentences to be clear, memorable, and distinctive.

- Prioritization and Selection: The drafted principles are then subjected to a prioritization exercise. This might involve dot voting, where participants allocate votes to the principles they deem most critical and representative.

- Refining and Articulating: The selected principles are further refined for clarity, conciseness, and impact. Each principle should ideally be accompanied by a brief explanation or examples to ensure consistent understanding.

- Defining "What Not To Do": For each principle, it is often beneficial to explicitly state what actions or approaches are contrary to that principle. This provides further clarity and helps avoid misinterpretations.

- Actioning and Integration Plan: The final step involves developing a plan for how these principles will be integrated into daily workflows, design systems, and decision-making processes. This ensures that the principles are not merely an academic exercise but a living guide.
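
The prioritization step is the one part of the workshop that is easy to make mechanical. As a minimal sketch, assuming each participant gets a fixed number of dots and may stack several on one draft, the tally could look like the following. The function name, the participant roles, and the draft principles are all hypothetical and only illustrate the counting.

```python
from collections import Counter


def tally_dot_votes(ballots, dots_per_person=3, keep=5):
    """Count dot votes and return the `keep` highest-ranked drafts.

    `ballots` maps a participant to the list of draft principles they
    placed dots on. Stacking several dots on one draft is allowed.
    """
    counts = Counter()
    for participant, dots in ballots.items():
        # Enforce the per-person dot budget (an assumed workshop rule).
        if len(dots) > dots_per_person:
            raise ValueError(
                f"{participant} used {len(dots)} dots, limit is {dots_per_person}"
            )
        counts.update(dots)
    # Most votes first; ties are broken alphabetically so the order is stable.
    ranked = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    return ranked[:keep]


# Hypothetical ballots from a three-person workshop.
ballots = {
    "designer": ["Clarity over cleverness", "Honest by default", "Clarity over cleverness"],
    "engineer": ["Fast is a feature", "Clarity over cleverness", "Honest by default"],
    "support": ["Honest by default", "Fast is a feature", "Explain every error"],
}

shortlist = tally_dot_votes(ballots, dots_per_person=3, keep=3)
for principle, votes in shortlist:
    print(f"{votes} dots: {principle}")
```

A tied result, as in this example, is a cue for discussion in the refinement step rather than something the tally should settle on its own.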

Resources such as Figma community files offering design principles workshop kits and blog posts detailing practical implementation strategies provide valuable starting points for teams embarking on this journey. The "Better" blog, for instance, offers visual guides on voting for keyword groups, demonstrating how to visually represent consensus during the refinement stages.

The Role of Design Principles in Design Systems

Design principles are foundational to the successful development and evolution of design systems. A design system is a comprehensive set of reusable components, guidelines, and standards that ensures consistency and efficiency across digital products. Design principles serve as the philosophical bedrock upon which these tangible elements are built.

When design principles are clearly defined and understood, they inform every aspect of a design system:

- Component Design: Principles like "clarity" or "efficiency" will directly influence how individual UI components are designed, ensuring they are intuitive and performant.

- Content Guidelines: Principles related to "honesty" or "empathy" will shape the tone and messaging within the system, ensuring communication is transparent and user-centric.

- Accessibility Standards: Principles focused on "inclusivity" or "usability" will mandate adherence to strict accessibility guidelines.

- Decision-Making Framework: When faced with design dilemmas, the established principles provide a framework for making choices that align with the product’s overarching goals and values, rather than succumbing to personal opinions or fleeting trends.
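
One way to keep principles close to the work is to store them as data alongside the design system, so that review templates can pull the relevant ones in automatically. The sketch below is an assumption about how a team might do this, not an established format: the `Principle` record, the area names, and the example principles are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Principle:
    """A hypothetical record for one design principle."""
    name: str
    statement: str               # what the principle asks for
    avoid: str                   # the explicit "what not to do"
    applies_to: frozenset[str]   # areas of the design system it informs


# Example principles, invented for illustration only.
PRINCIPLES = [
    Principle(
        "Clarity",
        "Every component explains itself without a manual.",
        "Icon-only controls with no label or tooltip.",
        frozenset({"components", "content"}),
    ),
    Principle(
        "Honesty",
        "Copy never promises more than the product delivers.",
        "Countdown timers or scarcity claims that are not real.",
        frozenset({"content"}),
    ),
    Principle(
        "Inclusivity",
        "Everything works with keyboard and screen reader.",
        "Shipping a component before its accessibility check.",
        frozenset({"components", "accessibility"}),
    ),
]


def principles_for(area):
    """Return the principles that apply to one area, in listed order."""
    return [p for p in PRINCIPLES if area in p.applies_to]


def review_checklist(area):
    """Render a plain-text checklist for a design review of `area`."""
    lines = []
    for p in principles_for(area):
        lines.append(f"[ ] {p.name}: {p.statement}")
        lines.append(f"    Avoid: {p.avoid}")
    return "\n".join(lines)


print(review_checklist("content"))
```

Pairing each statement with an `avoid` entry mirrors the "What Not To Do" step of the workshop and gives reviewers something concrete to check against.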

Effectively sharing and embedding design principles within an organization is paramount. This involves not only articulating them clearly but also consistently referencing them in design reviews, project kick-offs, and even in documentation and templates. The ultimate goal is to make design principles a default consideration, ingrained in the organizational culture and workflow.

The Broader Impact: Avoiding Endless Debates and Fostering Purposeful Creation

The establishment and consistent application of design principles offer a powerful antidote to the unproductive "endless debates" that often plague creative processes. These discussions frequently arise from subjective preferences, personal taste, or differing interpretations of user needs. By grounding design decisions in pre-agreed principles, teams can shift the focus from subjective arguments to objective alignment with established values and goals.

This shift is critical because design should not be a matter of taste; it must be guided by strategic objectives and deeply held values. Design principles provide the essential framework for this guidance, ensuring that products are not only aesthetically pleasing or technically proficient but also meaningful, user-centric, and aligned with the organization’s long-term vision.

In the rapidly evolving landscape of technology, particularly with the integration of AI, the need for robust design principles is more pronounced than ever. As AI capabilities expand, the ethical considerations, user trust, and the fundamental purpose of these technologies become paramount. Well-articulated design principles will be crucial in navigating these complexities, ensuring that AI is developed and deployed in a way that benefits humanity and upholds ethical standards.

Introducing Design Patterns for AI Interfaces

Vitaly Friedman’s video course, "Design Patterns For AI Interfaces," is a guide for designers working on AI-driven products. It collects hundreds of real-life examples and UX guidelines for designing AI features that are useful and actually adopted by users. The course also includes live UX training sessions, and a free preview is available.

Useful Resources for Further Exploration:

- Principles.design: A comprehensive database of design principles and methodologies.

- Dieter Rams’ 10 Principles of Good Design: A timeless framework for thoughtful product creation.

- Figma Community: Offers various templates and kits for design principles workshops.

- Smashing Magazine Articles: Provides in-depth guides and case studies on design systems and principles.

By embracing and actively implementing design principles, organizations can foster environments of purposeful creation, ensuring that their products and services are not only innovative and efficient but also aligned with deeply held values and a clear vision for the future. This commitment to principled design is essential for navigating the complexities of the modern technological landscape and building solutions that truly resonate with users and society.