The Strategic Shift Toward Small Language Models: How the Public Sector Is Navigating the AI Frontier

The global surge in artificial intelligence has placed public sector organizations at a critical crossroads. While the private sector has rapidly integrated generative AI to streamline operations and enhance customer experiences, government agencies operate under a vastly different set of mandates. For these institutions, the pressure to innovate is frequently at odds with the non-negotiable requirements of data sovereignty, national security, and strict regulatory compliance. As the initial hype surrounding massive, cloud-based Large Language Models (LLMs) begins to meet the reality of bureaucratic and operational constraints, a new architectural preference is emerging. Purpose-built Small Language Models (SLMs) are increasingly viewed not merely as a secondary option, but as the primary vehicle for operationalizing AI within high-stakes government environments.

The Divergence of Public and Private Sector AI Adoption

In the private sector, the standard blueprint for AI adoption often assumes a "cloud-first" mentality. Corporations typically rely on centralized infrastructure, continuous high-speed internet connectivity, and a willingness to transmit data to third-party providers in exchange for cutting-edge processing power. For state and federal institutions, however, these assumptions are often untenable. The fundamental need for control over sensitive information—ranging from classified intelligence to the private health records of citizens—creates a "security wall" that many commercial AI tools cannot scale.

A recent study by Capgemini highlights the depth of this concern, revealing that 79 percent of public sector executives worldwide remain wary of AI’s implications for data security. This skepticism is rooted in the legal obligations surrounding data residency and the catastrophic potential of data leaks. Han Xiao, vice president of AI at Elastic, emphasizes that government agencies must operate within highly restricted boundaries. Unlike a retail corporation that might use a public API to summarize customer reviews, a government agency must ensure that its data never leaves a controlled environment. This necessity has given rise to a movement toward "sovereign AI," where the tools of innovation are kept entirely within the jurisdiction and physical control of the state.

A Chronology of the Government AI Evolution

To understand the current pivot toward SLMs, one must look at the timeline of AI integration in the public sector since 2010.

- The Era of Rule-Based Automation (2010–2018): Early government efforts focused on basic "if-then" automation for tasks like tax processing and benefit distribution. These systems were rigid but predictable.

- The Pilot Phase and Big Data (2019–2022): Agencies began experimenting with machine learning to predict infrastructure needs and detect fraud. However, these models were often "black boxes," lacking the transparency required for public accountability.

- The Generative AI Shock (Late 2022–2023): The release of ChatGPT and other LLMs sparked a frantic interest in generative AI. Agencies launched hundreds of pilot programs, most of which remained in experimental "sandboxes" due to security concerns.

- The Shift to Specialized Architectures (2024–Present): Recognizing that massive LLMs are too resource-heavy and risky for production, agencies have begun shifting toward SLMs. This phase is characterized by a move away from general-purpose chatbots and toward task-specific, locally hosted tools.

Addressing the Infrastructure and GPU Bottleneck

One of the most significant, yet frequently overlooked, hurdles for public sector AI is the physical infrastructure required to run modern models. Large Language Models require immense computational power, typically delivered via high-end Graphics Processing Units (GPUs). In the private sector, tech giants have spent billions securing vast clusters of these chips.

The public sector, conversely, faces a distinct "GPU bottleneck." Government procurement processes are traditionally slow and designed for hardware like servers and laptops, not the specialized, high-demand chips required for LLMs. Furthermore, many agencies do not have the specialized staff required to manage and optimize GPU infrastructure. As Xiao points out, government institutions are not accustomed to the high energy and maintenance costs associated with running massive AI clusters.

This is where Small Language Models offer a pragmatic solution. While an LLM might have hundreds of billions of parameters, an SLM typically has between one billion and ten billion. Because of this smaller size, SLMs can often run on existing hardware or much smaller GPU clusters, significantly lowering the barrier to entry for local municipalities or smaller federal departments.
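To make the hardware gap concrete, here is a rough back-of-the-envelope sketch in Python. It estimates only the memory needed to hold a model's weights (parameter count multiplied by bytes per parameter) and ignores activations, caches, and runtime overhead. The specific model sizes and the 16-bit and 4-bit precisions are illustrative assumptions, not figures from the studies cited in this article.

```python
def weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Approximate memory needed to store model weights, in gigabytes (10^9 bytes).

    This covers weights only; real deployments need extra memory on top.
    """
    return num_params * bytes_per_param / 1e9


# Illustrative model sizes (assumptions chosen to match the ranges in the text).
MODELS = [
    ("1B-parameter SLM", 1e9),
    ("10B-parameter SLM", 10e9),
    ("200B-parameter LLM", 200e9),
]

for name, params in MODELS:
    fp16 = weight_memory_gb(params, 2.0)   # 16-bit weights: 2 bytes each
    int4 = weight_memory_gb(params, 0.5)   # 4-bit quantized weights: half a byte each
    print(f"{name}: ~{fp16:.1f} GB at 16-bit, ~{int4:.1f} GB at 4-bit")
```

Under these assumptions, the weights of a ten-billion-parameter model fit in roughly 20 GB at 16-bit precision, which is within reach of a single modern GPU, while a model in the hundreds of billions of parameters needs hundreds of gigabytes spread across many devices.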

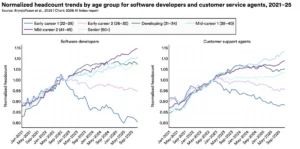

Supporting Data: The Case for Efficiency and Reliability

The move toward SLMs is supported by both performance data and executive sentiment. An Elastic survey of public sector leaders found that 65 percent struggle to use data continuously, in real time, and at scale. Large models, while intelligent, are often slow to process specific internal data and prone to "hallucinations," in which they generate confident but incorrect information.

Empirical studies have shown that when a model is trained on a specific, high-quality dataset, a smaller model can match or even exceed the accuracy of a general-purpose LLM. For instance, an SLM trained exclusively on a country’s legal statutes and procurement regulations will likely outperform a massive, general model that has been trained on the entire internet, much of which is irrelevant to the task at hand.

Furthermore, Gartner predicts that by 2027, the use of small, task-specific AI models will outpace the use of general-purpose LLMs by a factor of three. This shift is driven by the realization that most government work does not require a model that can write poetry or code in Python; it requires a model that can accurately summarize a 500-page environmental impact report or cross-reference a building permit against local zoning laws.

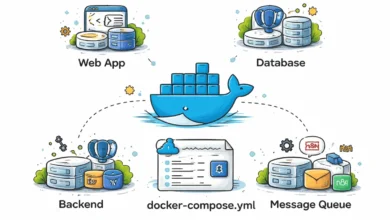

Beyond the Chatbot: Revolutionizing Government Search

A common misconception in the early days of the AI boom was that the primary value of AI lay in the "chatbot" interface. However, experts like Han Xiao argue that the true revolution for the public sector lies in search and data management. Government agencies sit atop mountains of unstructured data—scanned PDFs, handwritten notes, audio recordings of public hearings, and decades-old spreadsheets.

Modern AI, powered by SLMs, can index this mixed media to provide what is known as "verifiable source grounding." Using techniques like vector search and Retrieval-Augmented Generation (RAG), an AI system can provide an answer to a query and, crucially, cite the exact paragraph in the exact document from which the information was pulled. This transparency is vital for legal compliance and public trust.
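The retrieval-and-citation step described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production design: the "embedding" here is a simple word-count vector standing in for a learned embedding model, the corpus and file names are hypothetical, and the generation step (passing the retrieved passage to an SLM) is omitted so that only the grounding logic is shown.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Passage:
    doc: str        # hypothetical document name
    paragraph: int  # paragraph number within that document
    text: str


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts. A real system would use a learned model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, corpus: list[Passage], k: int = 1) -> list[tuple[float, Passage]]:
    """Return the k passages most similar to the query, best first."""
    q = embed(query)
    scored = [(cosine(q, embed(p.text)), p) for p in corpus]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]


# Hypothetical corpus; in practice these would be indexed agency documents.
CORPUS = [
    Passage("zoning_code.txt", 12,
            "Residential buildings in zone R1 may not exceed a height of ten metres."),
    Passage("zoning_code.txt", 13,
            "Commercial signage requires a separate permit from the planning office."),
    Passage("procurement_rules.txt", 4,
            "Contracts above the tender threshold must be published for open bidding."),
]


def answer_with_citation(query: str) -> str:
    """Return the best-matching passage together with its exact source location."""
    _, best = retrieve(query, CORPUS, k=1)[0]
    return f"{best.text} [source: {best.doc}, paragraph {best.paragraph}]"


print(answer_with_citation("What is the maximum height for residential buildings in zone R1?"))
```

The design point is that every passage carries its document name and paragraph number through the pipeline, so whatever text is eventually shown to the user can be traced back to a specific location that a human can check.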

"The public sector has a lot of data, and they don’t always know how to use this data," says Xiao. "AI can revolutionize how the government searches and manages the large amounts of data they have." By shifting the focus from "chatting" to "searching," agencies can create tools that draft complex texts, such as procurement documents or public notices, while ensuring every word is grounded in existing legal norms.

Broader Impact: Sustainability, Sovereignty, and Ethics

The implications of adopting SLMs extend beyond mere operational efficiency. There are three broader areas where this shift will define the future of governance:

1. Environmental Sustainability:

The environmental footprint of AI is a growing concern for governments committed to "Net Zero" targets. Training and running LLMs consume vast amounts of electricity and water for cooling. SLMs, being less resource-intensive, allow agencies to meet their technological goals without compromising their environmental mandates.

2. Strategic Autonomy:

In an era of geopolitical volatility, relying on AI models owned and operated by foreign corporations presents a risk to national sovereignty. By utilizing open-source SLMs that can be refined and hosted on local servers, nations can ensure that their critical cognitive infrastructure remains under their own control, immune to external service disruptions or policy changes by private companies.

3. Bias and Ethical Governance:

Massive models trained on the open internet often inherit the biases, prejudices, and misinformation present online. Because SLMs are trained on smaller, curated datasets, government data scientists have greater control over the "diet" of the AI. This makes it easier to audit the model for bias, document its decision-making process, and certify it as transparent—a requirement that is becoming law in regions like the European Union under the AI Act.

Conclusion: A Foundation of Trust

The transition from the experimental use of Large Language Models to the strategic deployment of Small Language Models marks a maturing of the AI landscape in the public sector. The focus is no longer on what AI can do in a vacuum, but on what AI must do to serve the public interest reliably and securely.

By prioritizing task-specific models that process data locally and provide verifiable results, public sector organizations are building a foundation of trust. As Han Xiao suggests, the journey does not begin with a flashy chatbot, but with the fundamental task of finding and interpreting the right information. In the high-stakes world of government operations, the "small" model is proving to be the most significant leap forward.