Maximizing Value in the Digital Frontier: The Evolution of Cloud Cost Optimization in the Era of Artificial Intelligence

Cloud computing has undergone a radical transformation over the last decade, transitioning from a novel alternative to traditional on-premises data centers to the foundational infrastructure of the global economy. As organizations increasingly migrate their most critical operations to the cloud, the focus has shifted from mere adoption to the rigorous management of expenditure. Today, cloud cost optimization is no longer viewed as a secondary operational task; it is a strategic imperative tied directly to a corporation’s fiscal health, competitive edge, and capacity for innovation. This shift has been accelerated by the rapid integration of Artificial Intelligence (AI), which presents both unprecedented opportunities for efficiency and significant challenges for budget management.

The Historical Context of Cloud Expenditure

To understand the current state of cloud cost optimization, one must examine the chronology of cloud adoption. In the early 2010s, the primary driver for cloud migration was "agility." Organizations sought to bypass the lengthy procurement cycles of physical hardware. During this "Lift and Shift" era, cost was often a secondary consideration to speed. However, as cloud footprints expanded, many enterprises encountered "bill shock"—unexpectedly high monthly invoices resulting from unmonitored resource consumption.

By the late 2010s, the concept of "FinOps" (a portmanteau of "Finance" and "DevOps") emerged. This discipline introduced a cultural shift, encouraging collaboration between finance, engineering, and business teams to take ownership of cloud spend. The focus was on eliminating waste, such as "zombie" instances (idle servers) and over-provisioned storage.

As we moved into the 2020s, the landscape shifted again. The arrival of large-scale AI and machine learning (ML) models introduced a new variable into the equation. AI workloads require massive computational power, specialized hardware like Graphics Processing Units (GPUs), and vast amounts of data storage. According to industry data from Gartner, global end-user spending on public cloud services is projected to grow 20.4% in 2024, totaling nearly $679 billion. A significant portion of this growth is attributed to the infrastructure required to support generative AI.

The AI Paradigm: A New Layer of Complexity

The integration of AI into business processes has fundamentally altered the traditional cloud optimization playbook. Unlike standard web applications, which often have predictable traffic patterns, AI workloads—particularly during the training and fine-tuning phases—are characterized by extreme bursts of high-intensity compute usage.

Specialized Hardware and Scarcity

AI relies heavily on specialized silicon, such as NVIDIA’s H100 or A100 GPUs. These resources are not only expensive but often scarce. Traditional cost optimization involved choosing the cheapest instance that could perform a task. In the AI era, optimization also involves securing access to limited high-performance compute and keeping it as close to full utilization as possible, because an idle GPU is billed at the same high hourly rate as a busy one.
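The link between utilization and cost can be made concrete with a small calculation. The sketch below is illustrative only: the function name and the hourly rate are assumptions, not figures from any provider's price list. It divides the billed hourly rate by average utilization to get the cost of one hour of useful GPU work.

```python
def effective_hourly_cost(hourly_rate: float, utilization: float) -> float:
    """Cost of one hour of useful GPU work at a given average utilization.

    utilization is a fraction in (0, 1]; 0.5 means the GPU is busy half the time.
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return hourly_rate / utilization


if __name__ == "__main__":
    # Hypothetical on-demand rate, chosen only to keep the arithmetic simple.
    rate_per_gpu_hour = 4.00
    for utilization in (0.25, 0.50, 1.00):
        cost = effective_hourly_cost(rate_per_gpu_hour, utilization)
        print(f"utilization {utilization:.0%}: ${cost:.2f} per useful GPU-hour")
```

Under these assumed numbers, a GPU that sits idle three quarters of the time costs four times as much per unit of useful work as one that is fully busy.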

Data Gravity and Egress Costs

AI models are only as good as the data they are trained on. Moving massive datasets out of a cloud provider or between cloud regions to access specific AI services can incur significant data egress fees, while inbound transfer is typically free. Strategic cost management now requires "data gravity" considerations: keeping compute resources as close to the data source as possible to minimize movement costs.
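A rough way to reason about data gravity is to compare the total cost of each option. The following is a minimal sketch with hypothetical names and prices: it treats the transfer fee as a flat per-gigabyte charge and ignores tiered pricing, storage duplication, and transfer time.

```python
def transfer_cost(dataset_gb: float, price_per_gb: float) -> float:
    """One-off fee for moving a dataset, assuming a flat per-GB price."""
    return dataset_gb * price_per_gb


def cheaper_to_move_data(
    dataset_gb: float,
    egress_price_per_gb: float,
    local_compute_cost: float,
    remote_compute_cost: float,
) -> bool:
    """True if moving the data and computing remotely beats computing in place."""
    remote_total = transfer_cost(dataset_gb, egress_price_per_gb) + remote_compute_cost
    return remote_total < local_compute_cost


if __name__ == "__main__":
    # All figures are made up for illustration.
    move = cheaper_to_move_data(
        dataset_gb=50_000,
        egress_price_per_gb=0.02,
        local_compute_cost=12_000,
        remote_compute_cost=11_500,
    )
    print("move the data" if move else "keep compute next to the data")
```

In this example the remote region is cheaper for compute alone, but the transfer fee more than cancels the saving, so the sketch recommends keeping compute next to the data.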

Token-Based Pricing Models

The rise of Large Language Models (LLMs) has introduced consumption-based pricing at the API level, often billed per "token." This creates a new challenge for cost predictability. A single inefficient prompt or a recursive loop in an AI agent can lead to a sudden spike in costs that traditional infrastructure monitoring tools might not catch immediately.
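One common mitigation is a per-run spending guard inside the application itself. The sketch below is an assumption-laden illustration, not any vendor's API: `TokenBudget`, `BudgetExceeded`, and the per-1,000-token prices are all hypothetical, and the token counts are estimates supplied by the caller.

```python
from dataclasses import dataclass


class BudgetExceeded(RuntimeError):
    """Raised when a call would push spend past the configured limit."""


@dataclass
class TokenBudget:
    """Tracks estimated spend for one agent run and enforces a hard limit."""

    limit_usd: float
    input_price_per_1k: float   # hypothetical price per 1,000 input tokens
    output_price_per_1k: float  # hypothetical price per 1,000 output tokens
    spent_usd: float = 0.0

    def cost_of(self, input_tokens: int, output_tokens: int) -> float:
        return (input_tokens / 1000 * self.input_price_per_1k
                + output_tokens / 1000 * self.output_price_per_1k)

    def charge(self, input_tokens: int, output_tokens: int) -> float:
        """Record one call, or raise before recording if it would exceed the limit."""
        cost = self.cost_of(input_tokens, output_tokens)
        if self.spent_usd + cost > self.limit_usd:
            raise BudgetExceeded(
                f"call costing ${cost:.3f} would exceed limit ${self.limit_usd:.2f}"
            )
        self.spent_usd += cost
        return cost


if __name__ == "__main__":
    budget = TokenBudget(limit_usd=0.10, input_price_per_1k=0.01, output_price_per_1k=0.03)
    steps = 0
    try:
        while True:  # stands in for an agent stuck in a recursive loop
            budget.charge(input_tokens=2000, output_tokens=500)
            steps += 1
    except BudgetExceeded as exc:
        print(f"stopped after {steps} steps: {exc}")
```

The design choice here is to fail closed: the runaway loop is interrupted by the application at a known dollar amount instead of being discovered later on an invoice.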

Strategic Best Practices for the Modern Cloud Environment

To navigate this complex landscape, organizations must adopt a multifaceted approach to cost optimization. This involves a blend of technical guardrails, financial oversight, and continuous iteration.

Enhanced Visibility and Usage Awareness

The foundation of any optimization strategy is granular visibility. Organizations must be able to attribute costs not just to a department, but to specific projects, models, or even individual developers. Modern cloud management platforms now offer AI-driven insights that can predict future spending patterns based on historical data, allowing for more accurate budgeting.
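Attribution at this level usually depends on consistent resource tagging. As a minimal sketch, assume billing data has been exported as a list of records with a `cost_usd` field and a `tags` mapping; that record shape, the tag names, and the sample values are hypothetical and do not match any specific provider's export format.

```python
from collections import defaultdict


def attribute_costs(line_items: list[dict], tag_key: str) -> dict[str, float]:
    """Sum cost per value of one tag; items missing the tag go to 'untagged'."""
    totals: dict[str, float] = defaultdict(float)
    for item in line_items:
        owner = item.get("tags", {}).get(tag_key, "untagged")
        totals[owner] += item["cost_usd"]
    return dict(totals)


if __name__ == "__main__":
    # Made-up billing records for illustration.
    billing = [
        {"cost_usd": 120.0, "tags": {"project": "search", "model": "ranker-v2"}},
        {"cost_usd": 300.0, "tags": {"project": "chatbot", "model": "assistant"}},
        {"cost_usd": 80.0, "tags": {"project": "search"}},
        {"cost_usd": 45.0, "tags": {}},
    ]
    for project, cost in sorted(attribute_costs(billing, "project").items()):
        print(f"{project}: ${cost:.2f}")
```

The size of the "untagged" bucket is itself a useful metric: the larger it is, the less of the bill can be attributed to anyone.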

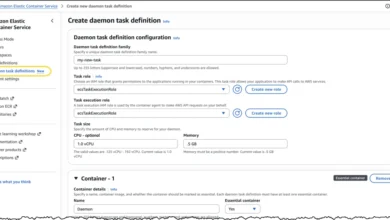

Implementing Governance Guardrails

Governance should not be viewed as a hindrance to innovation but as a framework for sustainable growth. This includes setting automated alerts for budget thresholds and implementing policies that automatically shut down non-production AI environments during off-hours. For AI specifically, governance involves setting "quota" limits on expensive GPU instances to prevent accidental overspends during experimentation.
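Both guardrails described above reduce to simple rules that a scheduler or policy engine can evaluate. The sketch below shows one possible form of those rules; the function names, the working-hours window, and the alert thresholds are assumptions chosen for illustration, and the actual stop or notify action would be provider-specific.

```python
from datetime import datetime


def should_be_running(
    environment: str, now: datetime, start_hour: int = 8, end_hour: int = 19
) -> bool:
    """Production always runs; other environments run only on weekdays in working hours."""
    if environment == "production":
        return True
    is_weekday = now.weekday() < 5  # Monday is 0, Sunday is 6
    return is_weekday and start_hour <= now.hour < end_hour


def crossed_thresholds(
    spend: float, budget: float, thresholds: tuple[float, ...] = (0.5, 0.8, 1.0)
) -> list[float]:
    """Return every budget fraction that current spend has reached or passed."""
    return [t for t in thresholds if spend >= t * budget]


if __name__ == "__main__":
    late_evening = datetime(2024, 1, 3, 22, 0)  # a Wednesday, 22:00
    print(should_be_running("staging", late_evening))
    print(crossed_thresholds(spend=850.0, budget=1000.0))
```

A scheduled job could call `should_be_running` for each tagged environment and stop those that return false, while `crossed_thresholds` decides which alerts to send.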

Rightsizing and Lifecycle Thinking

Rightsizing is the process of matching resource types and sizes to a workload's performance and capacity requirements at the lowest possible cost. In the context of AI, this might mean using high-cost GPUs for model training but switching to lower-cost, inference-optimized chips (like AWS Inferentia or Azure’s custom Maia AI accelerators) once the model is deployed in production. Lifecycle thinking also involves moving aging data to "cold storage" tiers where it costs significantly less to maintain.
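At its simplest, rightsizing is a constrained minimization: among the instance types that satisfy the workload's requirements, pick the cheapest. The sketch below assumes a hypothetical catalog with made-up names, sizes, and prices, and considers only vCPUs and memory; a real decision would also weigh accelerators, network, and measured utilization.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class InstanceType:
    name: str
    vcpus: int
    memory_gb: int
    hourly_usd: float


def rightsize(
    catalog: list[InstanceType], needed_vcpus: int, needed_memory_gb: int
) -> Optional[InstanceType]:
    """Return the cheapest instance meeting both requirements, or None if none fits."""
    candidates = [
        inst for inst in catalog
        if inst.vcpus >= needed_vcpus and inst.memory_gb >= needed_memory_gb
    ]
    return min(candidates, key=lambda inst: inst.hourly_usd, default=None)


if __name__ == "__main__":
    # Hypothetical catalog; names and prices are illustrative only.
    catalog = [
        InstanceType("small", vcpus=2, memory_gb=8, hourly_usd=0.10),
        InstanceType("medium", vcpus=4, memory_gb=16, hourly_usd=0.20),
        InstanceType("large", vcpus=8, memory_gb=32, hourly_usd=0.40),
    ]
    choice = rightsize(catalog, needed_vcpus=3, needed_memory_gb=12)
    print(choice.name if choice else "no instance type fits")
```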

Cloud Cost Management vs. Cost Optimization: A Necessary Distinction

While the terms are often used interchangeably, industry experts distinguish between "management" and "optimization."

Cloud Cost Management is the administrative side of the coin. It involves tracking, reporting, and accounting. It answers the "What happened?" and "Who spent what?" questions. It is essential for compliance and financial reporting but, on its own, it does not reduce the bill.

Cloud Cost Optimization is the proactive, action-oriented side. It uses the data provided by management tools to make architectural changes. It answers the "How can we do this better?" question. Optimization might involve re-architecting a monolithic application into microservices or switching from a pay-as-you-go model to "Reserved Instances" or "Savings Plans," which offer deep discounts in exchange for a commitment to use a certain amount of compute power over a one- or three-year period.
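Whether a commitment pays off depends on how many hours the resource is actually used. The following sketch works through the break-even point under simplifying assumptions: the rates are hypothetical, the commitment is billed for every hour of the year whether used or not, and upfront-payment options are ignored.

```python
HOURS_PER_YEAR = 8760


def break_even_utilization(on_demand_hourly: float, committed_hourly: float) -> float:
    """Fraction of hours that must be used for the commitment to match on-demand cost."""
    return committed_hourly / on_demand_hourly


def annual_costs(
    on_demand_hourly: float, committed_hourly: float, expected_hours: float
) -> tuple[float, float]:
    """Return (on-demand cost, committed cost) for one year of expected usage."""
    on_demand = on_demand_hourly * expected_hours
    committed = committed_hourly * HOURS_PER_YEAR  # paid regardless of usage
    return on_demand, committed


if __name__ == "__main__":
    # Hypothetical rates: a 40% discount in exchange for a one-year commitment.
    on_demand_rate, committed_rate = 1.00, 0.60
    threshold = break_even_utilization(on_demand_rate, committed_rate)
    print(f"commitment pays off above {threshold:.0%} utilization")
    for hours in (4000, 7000):
        od, cm = annual_costs(on_demand_rate, committed_rate, hours)
        better = "commit" if cm < od else "stay on-demand"
        print(f"{hours} h/year: on-demand ${od:,.0f} vs committed ${cm:,.0f} -> {better}")
```

With these assumed rates, a workload that runs less than about 60% of the year is cheaper on pay-as-you-go, which is why commitments suit steady baseline load better than bursty experimentation.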

The Human Element: Reactions from the C-Suite

The shift toward AI-centric cloud spending has sparked varied reactions across the corporate hierarchy. Chief Financial Officers (CFOs) are increasingly concerned about the "black box" nature of AI costs. In recent surveys of Fortune 500 executives, many expressed a "cautious optimism"—they recognize the need to invest in AI to remain competitive, but they are demanding stricter ROI (Return on Investment) metrics.

Chief Technology Officers (CTOs), meanwhile, are caught between the pressure to innovate rapidly and the need to remain within budget. The consensus among technical leadership is that "efficiency is the new performance." An AI model that is 5% more accurate but 500% more expensive to run is increasingly viewed as a technical failure rather than a success.

Fact-Based Analysis of Implications

If organizations fail to master cloud cost optimization in the AI era, the implications are twofold. First, there is the risk of "innovation stagnation." When budgets are consumed by inefficient legacy spend or unmanaged AI experiments, there is less capital available for new projects. Second, there is the risk of "margin erosion." For SaaS (Software as a Service) companies, the cost of the cloud is often their largest Cost of Goods Sold (COGS). Inefficient cloud usage directly reduces profit margins, potentially impacting stock prices and investor confidence.

Furthermore, there is an environmental dimension. High cloud costs are often a proxy for high energy consumption. Data centers are massive consumers of electricity and water for cooling. By optimizing cloud spend and reducing computational waste, organizations are also contributing to their ESG (Environmental, Social, and Governance) goals by lowering their digital carbon footprint.

Looking Ahead: The Future of Optimization

As we look toward the future, the tools for cloud cost optimization are becoming as sophisticated as the workloads they manage. We are entering the era of "Autonomous FinOps," where AI agents will not only monitor spend but also make real-time adjustments to infrastructure—scaling down resources, purchasing spot instances, and migrating data—without human intervention.

The journey of cloud cost optimization is continuous. As AI adoption accelerates, the organizations that succeed will be those that treat cloud spend as a dynamic variable to be mastered, rather than a fixed cost to be endured. By aligning technical architecture with business value, enterprises can ensure that their digital transformation remains both innovative and economically sustainable.

In conclusion, the integration of AI into the cloud ecosystem has raised the stakes for cost management. The principles of visibility, governance, and rightsizing remain the bedrock of a solid strategy, but they must now be applied with a deeper understanding of the unique demands of AI workloads. The ultimate goal is not just a lower bill, but a more resilient, efficient, and value-driven organization.