The Evolution of Generative AI and the New Golden Age of Humanoid Robotics

For decades, the field of robotics was characterized by a persistent gap between the soaring ambitions of its researchers and the modest capabilities of their creations. While the ultimate goal was to replicate the versatile complexity of the human form (a machine capable of navigating the world, adapting to new environments, and interacting safely with people), the reality was often confined to rigid robotic arms in automotive plants or autonomous vacuum cleaners in living rooms. However, a fundamental shift in artificial intelligence has begun to close this gap, leading to a massive surge in capital and development. In 2025 alone, companies and investors funneled $6.1 billion into the humanoid robotics sector, roughly four times the $1.5 billion invested in 2024. This influx of capital signals a newfound confidence in a generation of machines that are no longer programmed with rigid rules but instead learn to move and interact through the same data-driven models that power modern language processing.

The Paradigm Shift: From Hard-Coded Rules to Generative Intelligence

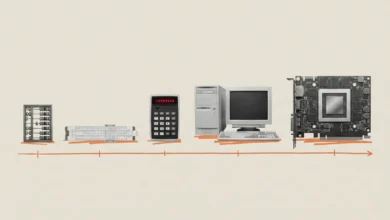

The historical struggle of robotics was rooted in the sheer unpredictability of the physical world. Traditionally, roboticists attempted to solve tasks through "explicit programming." If a developer wanted a robot to fold a shirt, they had to write thousands of lines of code accounting for every variable: the fabric’s elasticity, the identification of the collar, the exact coordinates for the gripper, and the compensatory movements needed if the shirt was slightly rotated. This approach worked well in controlled factory environments but failed miserably in the messy, chaotic reality of a human home or a dynamic warehouse.

Around 2015, the industry began a transition toward "Reinforcement Learning" (RL). Instead of writing rules, researchers built digital simulations where a virtual robot could attempt a task millions of times through trial and error. Successes earned a numerical reward, and failures were penalized. While this allowed robots to master complex maneuvers, much as AI mastered the game of Go, it ran into the "sim-to-real" gap: a policy that worked perfectly in simulation would fail when faced with the slight friction or lighting changes of the real world.
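As a rough illustration of the reward-driven trial and error described above, the following toy sketch uses tabular Q-learning on a five-cell corridor. It is not any lab's actual training setup: the corridor, the reward values, and the names `train_q_table` and `greedy_policy` are assumptions made for this example.

```python
import random


def train_q_table(n_states=5, episodes=500, alpha=0.5, gamma=0.9,
                  epsilon=0.2, seed=0):
    """Tabular Q-learning on a toy corridor: start in cell 0, goal in the last cell."""
    rng = random.Random(seed)
    moves = (-1, +1)                      # action 0 = step left, action 1 = step right
    q = [[0.0, 0.0] for _ in range(n_states)]
    goal = n_states - 1
    for _ in range(episodes):
        state = 0
        for _ in range(100):              # cap the length of each attempt
            if rng.random() < epsilon:    # occasionally explore at random
                action = rng.randrange(2)
            else:                         # otherwise exploit current estimates
                action = 0 if q[state][0] > q[state][1] else 1
            nxt = min(max(state + moves[action], 0), goal)
            # Success earns +1; every other step costs a small penalty.
            reward = 1.0 if nxt == goal else -0.01
            future = 0.0 if nxt == goal else gamma * max(q[nxt])
            q[state][action] += alpha * (reward + future - q[state][action])
            state = nxt
            if state == goal:
                break
    return q


def greedy_policy(q):
    """Best-known action for every non-goal cell."""
    return ["left" if row[0] > row[1] else "right" for row in q[:-1]]


if __name__ == "__main__":
    print(greedy_policy(train_q_table()))
```

No rule ever tells the agent to walk right; the preference emerges from the reward signal alone. A real robot faces the same loop with a vastly larger state space, which is why the attempts were run in simulation.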

The true catalyst for the current boom arrived in 2022 with the emergence of chatbots such as ChatGPT, built on Large Language Models (LLMs). These models popularized the idea of "foundation models": AI trained on vast datasets to predict the next element in a sequence. Roboticists realized that just as an LLM predicts the next word in a sentence, a "Robotic Transformer" could predict the next motor command based on visual and sensor data. This conceptual shift has allowed robots to take in camera images, joint positions, and tactile feedback and issue dozens of motor commands per second, enabling a level of adaptability previously thought impossible.
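The analogy between predicting the next word and predicting the next motor command can be made concrete with a small sketch. It assumes that continuous commands are discretized into a fixed vocabulary of bins so they can be treated like tokens; the bin count, the value range, and the `toy_scores` stand-in for a trained model are all hypothetical and chosen only for illustration.

```python
N_BINS = 256  # assumed size of the action "vocabulary"


def to_token(value, low=-1.0, high=1.0):
    """Map a continuous command in [low, high] to an integer token."""
    clipped = min(max(value, low), high)
    return min(int((clipped - low) / (high - low) * N_BINS), N_BINS - 1)


def from_token(token, low=-1.0, high=1.0):
    """Map a token back to the centre of its bin."""
    return low + (token + 0.5) * (high - low) / N_BINS


def rollout(next_token_scores, observations):
    """Greedy decoding: at each step choose the highest-scoring action token
    given the current observation and the tokens emitted so far."""
    history, commands = [], []
    for obs in observations:
        scores = next_token_scores(obs, history)
        token = max(range(N_BINS), key=scores.__getitem__)
        history.append(token)
        commands.append(from_token(token))
    return commands


def toy_scores(obs, history):
    """Hypothetical stand-in for a trained model: favours the command that
    cancels the observed error. A real system would use a neural network."""
    target = to_token(-obs)
    return [-abs(t - target) for t in range(N_BINS)]


if __name__ == "__main__":
    print(rollout(toy_scores, [0.5, 0.0, -0.25]))
```

The loop in `rollout` has the same shape as text generation: score every candidate token, pick one, append it to the history, and repeat at the next time step.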

The Social Robot Precursor: The Rise and Fall of Jibo

The journey toward modern humanoid AI is paved with the lessons of early social robotics. In 2014, MIT researcher Cynthia Breazeal introduced Jibo, a stationary but expressive robot designed to be an "embodied assistant" for families. Despite raising $3.7 million in crowdfunding and attracting thousands of preorders, Jibo’s company shuttered in 2019.

In retrospect, Jibo’s failure was a failure of language and flexibility. Competing against early versions of Siri and Alexa, Jibo relied on heavily scripted interactions. When a user spoke, the software translated speech to text and pulled responses from a library of pre-approved snippets. This made the interaction feel repetitive and "robotic," lacking the fluid, context-aware conversational abilities provided by today’s generative AI.

The modern era has solved the language problem, but it has introduced new safety challenges. Today’s AI-driven social robots can carry on deep, unscripted conversations, yet they lack the "guardrails" of the old scripted systems. Reports of AI-powered toys suggesting dangerous activities to children have highlighted the risks of giving a generative model a physical presence in the home. Despite these hurdles, the integration of LLMs into robotic hardware remains a primary goal for startups aiming to solve the problem of elder care and loneliness.

Overcoming the "Sim-to-Real" Gap: OpenAI and Dactyl

By 2018, OpenAI was at the forefront of trying to move beyond scripted robotics. Their project, Dactyl, involved a human-like robotic hand tasked with manipulating a cube. To train Dactyl, OpenAI utilized "domain randomization." Instead of training the hand in one perfect simulation, they created millions of virtual worlds where every physical property—friction, gravity, lighting, and object texture—varied randomly.

This approach was designed to make the AI robust enough to handle the unpredictability of the real world. In 2019, OpenAI demonstrated Dactyl solving a Rubik’s Cube one-handed. While the success rate was inconsistent—only 60% for simple scrambles and 20% for difficult ones—it proved that simulation could be used to teach complex motor skills.
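The domain randomization idea described above can be sketched in a few lines: draw a fresh set of physical parameters for every training episode so that no single simulated world can be memorized. The parameter ranges and the names `SimParams`, `sample_params`, and `randomized_worlds` below are assumptions for illustration, not values or identifiers from OpenAI's project.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SimParams:
    friction: float         # surface friction coefficient
    gravity: float          # m/s^2
    light_intensity: float  # relative brightness, 0..1
    texture_id: int         # index into a set of object textures


def sample_params(rng):
    """Draw one randomized world; all ranges are illustrative guesses."""
    return SimParams(
        friction=rng.uniform(0.5, 1.5),
        gravity=rng.uniform(9.0, 10.6),
        light_intensity=rng.uniform(0.2, 1.0),
        texture_id=rng.randrange(100),
    )


def randomized_worlds(n, seed=0):
    """Generate n independently randomized simulation configurations."""
    rng = random.Random(seed)
    return [sample_params(rng) for _ in range(n)]


if __name__ == "__main__":
    for world in randomized_worlds(3):
        print(world)
```

A policy trained across many such worlds has to succeed under all of them, so the real world ideally looks like just one more variation.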

OpenAI disbanded its internal robotics team in 2021 to focus on pure software, but the company has recently re-entered the fray. Reports indicate that OpenAI is now partnering with hardware manufacturers to apply its latest GPT-level reasoning to humanoid forms, moving beyond the limitations of the Dactyl hand toward full-body coordination.

The Foundation Model Revolution: Google DeepMind’s RT Series

While OpenAI focused on simulation, Google DeepMind explored the power of massive data collection in the real world. Over 17 months, the team recorded human operators steering robots through 700 different tasks using robot controllers. This data formed the basis for RT-1 (Robotic Transformer 1), a foundation model introduced in 2022 that could translate visual inputs and text instructions into motor actions.

The successor, RT-2, represented a massive leap forward by incorporating vision-language data from the internet. By training on general images and text, the robot developed a "common sense" understanding of the world. For the first time, a robot could understand abstract commands such as "put the Coke can near the picture of Taylor Swift," even if it had never been specifically trained on that task. This ability to generalize—to apply knowledge from the internet to physical movements—is the cornerstone of the 2025 robotics revolution.

In early 2025, Google DeepMind further integrated these capabilities with its Gemini models, allowing robots to process natural language commands with a nuance that mimics human reasoning. This has transformed robots from tools that require specific commands into "coworkers" that can interpret intent.

From Theory to the Warehouse: Covariant and Industrial AI

While humanoid robots capture the public imagination, the most immediate economic impact is being felt in the logistics sector. Covariant, a startup founded by former OpenAI engineers, focused on the pragmatic task of warehouse "picking." In 2024, the company released RFM-1 (Robotics Foundation Model 1), a system that allows robotic arms to function with a high degree of autonomy.

Unlike traditional warehouse robots that require items to be placed in exact positions, Covariant’s model uses cameras and sensors to identify and pick up objects of any shape or size. Crucially, the model allows for two-way communication. A robot might predict that it cannot get a secure grip on a specific item and "ask" a human supervisor for advice on which suction cup to use.
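The "ask when unsure" behavior described above amounts to a confidence threshold on the robot's own grip prediction. The sketch below is a hypothetical illustration only: the function `plan_pick`, the gripper names, and the 0.8 threshold are assumptions, not part of Covariant's actual system.

```python
from typing import Dict, Tuple


def plan_pick(grasp_confidences: Dict[str, float],
              threshold: float = 0.8) -> Tuple[str, str]:
    """Pick with the most promising gripper if its predicted success is high
    enough; otherwise defer to a human, reporting the best guess so far."""
    if not grasp_confidences:
        raise ValueError("need at least one candidate grasp")
    best = max(grasp_confidences, key=grasp_confidences.get)
    if grasp_confidences[best] >= threshold:
        return ("pick", best)
    return ("ask_human", best)


if __name__ == "__main__":
    print(plan_pick({"small_cup": 0.55, "large_cup": 0.92}))
    print(plan_pick({"small_cup": 0.55, "large_cup": 0.60}))
```

Raising the threshold trades throughput for fewer dropped items, since more picks get routed to a supervisor.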

Amazon’s hiring of Covariant’s leadership in 2024 underscores the massive industrial stakes. Amazon, which operates over 1,300 warehouses in the U.S., is aggressively integrating these foundation models to automate the "induction" process (placing items onto sorters and conveyors), a task that has traditionally been labor-intensive and fatiguing for human workers.

The Humanoid Frontier: Agility Robotics and the Digit Model

The culmination of these technological threads is the humanoid robot, a machine designed to operate within human-centric infrastructure. Leading this charge is Agility Robotics with its "Digit" robot. Unlike the sleek humanoid designs of science fiction, Digit is a functional machine with bird-like legs and an unadorned head, optimized for the task of moving shipping totes.

Digit is currently being piloted by industry giants including Amazon, Toyota, and GXO Logistics. These companies view humanoids not as a novelty, but as a solution to chronic labor shortages in the logistics industry. Because Digit can walk into an existing warehouse, navigate stairs, and work alongside humans, it eliminates the need for expensive facility retooling.

However, significant challenges remain. Digit can currently lift only 35 pounds, and increasing its strength requires heavier batteries, which in turn reduces its operational window. Furthermore, as these robots move out of "test zones" and into active workspaces, they face a complex regulatory landscape. Standards organizations are currently drafting new safety protocols for mobile humanoids to ensure they do not pose a risk to their human counterparts.

Economic Implications and the Path Forward

The $6.1 billion invested in 2025 is a testament to the belief that the "robotics winter" is over. Analysts suggest that the convergence of generative AI, improved battery density, and cheaper sensor technology has created a "perfect storm" for the industry. Goldman Sachs recently projected that the market for humanoid robots could reach $38 billion by 2035, and humanoids could potentially become as ubiquitous as the automobile.

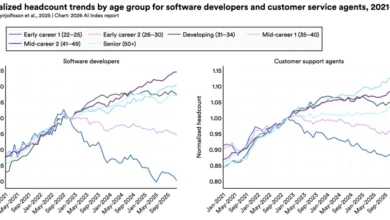

The implications for the global workforce are profound. While proponents argue that robots will take over "dull, dirty, and dangerous" jobs, easing labor shortages in aging societies, critics warn of the potential for significant economic displacement. The transition will likely require a reimagining of labor laws and social safety nets as "wage-free labor" becomes a viable option for large-scale enterprises.

As Silicon Valley roboticists dream big once again, the focus has shifted from "can we build it?" to "how fast can we scale it?" The journey from the rule-based arms of the 1980s to the generative humanoids of 2025 represents one of the most significant technological pivots in history. The machines are finally learning to see, speak, and move, marking the beginning of an era where the boundary between digital intelligence and physical action permanently dissolves.