Meta Learning Phase: A Deep Dive

The meta-learning phase is the part of model development that focuses on learning how to learn. Instead of learning from a single dataset, it involves designing algorithms that adapt and improve their own learning process through experience across many tasks. This can make learning more efficient and effective, especially when data for a new task is limited or complex.

This guide covers the meta-learning phase from its definition through methods and techniques, data considerations, model design, evaluation metrics, real-world applications, and the challenges associated with the approach.

Defining the Meta-Learning Phase

The meta-learning phase represents a crucial step in the broader field of machine learning. It stands apart from traditional learning methods by focusing on learning *how* to learn, rather than directly learning a specific task. This approach is gaining traction because it allows for more efficient and adaptable learning models, particularly in complex and dynamic environments. This phase optimizes the learning algorithms themselves, enabling them to perform better across various tasks.

Ultimately, this refined approach in the meta learning phase leads to more effective and adaptable AI systems.

Understanding the meta-learning phase requires a clear grasp of its relationship with meta-learning and how it distinguishes itself from conventional learning.

Definition of the Meta-Learning Phase

The meta-learning phase is the stage within a meta-learning process where a model learns to optimize its own learning process. This involves acquiring knowledge about different learning algorithms and their performance characteristics. It’s not about mastering a specific task, but rather mastering the skill of learning itself. This process, often referred to as learning-to-learn, aims to improve the efficiency and effectiveness of subsequent learning tasks.

In essence, meta-learning is the overall goal, while the meta-learning phase is the specific process of achieving that goal.

Relationship Between Meta-Learning and the Meta-Learning Phase

Meta-learning is the overarching concept of enabling machines to learn how to learn. The meta-learning phase is a critical component of this process. It encompasses the actual execution of learning algorithms, allowing the model to adapt and refine its learning strategies. The relationship is akin to a chef (meta-learning) meticulously crafting recipes (learning algorithms) for preparing various dishes (tasks).

The meta-learning phase is the stage where the chef practices and refines these recipes.

Key Characteristics of the Meta-Learning Phase

The meta-learning phase is distinguished from other learning phases by its focus on learning *strategies* rather than specific data points. It’s a process of building a model that can adapt its learning method to different scenarios. This adaptability is crucial for real-world applications where data distributions may shift or new tasks arise.

- Focus on Learning Strategies: Unlike traditional learning, the meta-learning phase emphasizes the process of learning itself. It’s about acquiring the knowledge and skills needed to learn quickly and effectively, rather than accumulating specific knowledge about a particular task.

- Adaptability and Generalization: Meta-learning models aim to generalize well across diverse tasks. This contrasts with traditional learning, where the model generalizes to new examples of the task it was trained on but usually has to be retrained for a different task.

- Optimization of Learning Algorithms: The meta-learning phase often involves tuning hyperparameters and architectures of learning algorithms. This process is crucial for maximizing the model’s ability to learn efficiently across different tasks.

Comparison of Meta-Learning and Traditional Learning

The table below highlights the key distinctions between meta-learning and traditional learning.

| Feature | Meta-Learning Phase | Traditional Learning Phase |
|---|---|---|
| Goal | Learn how to learn | Learn a specific task |
| Input Data | Multiple datasets representing diverse tasks | Data specific to a single task |
| Output | A model capable of quickly adapting to new tasks | A model that performs well on the trained task |
| Focus | Learning strategies, algorithms, and hyperparameters | Learning patterns and relationships within the data |

Methods and Techniques

Meta-learning, in essence, is about learning to learn. This involves developing algorithms that can quickly adapt to new tasks, rather than needing to be retrained from scratch each time. Crucial to this process are the methods and techniques employed during the meta-learning phase, which are designed to optimize the meta-learning model’s ability to generalize across diverse tasks. Understanding these methods is essential for effectively harnessing the power of meta-learning, enabling the development of more robust and adaptable machine learning systems.

Different approaches to meta-learning employ various strategies for learning from a set of tasks, allowing the model to extrapolate its knowledge and quickly master new tasks.

Common Meta-Learning Methods

Meta-learning methods can be broadly categorized into model-based and model-agnostic approaches. Model-based methods focus on learning a model of the learning process itself, while model-agnostic methods adapt existing models for improved performance on new tasks. Metric-based methods such as Prototypical Networks, which classify new examples by comparing them with learned class representations, are often treated as a further family. Understanding these approaches is crucial to selecting the right technique for a given problem.

- Model-Based Methods: These methods involve learning a model of the learning process. This model can then be used to predict how well a learner will perform on a new task, allowing for optimization and adaptation. This approach often leads to more efficient learning on unseen tasks.

- Model-Agnostic Methods: These techniques focus on adapting existing machine learning models so that they perform well on new tasks after a small amount of task-specific training. They don’t require learning a separate model of the learning process, and they can be applied to any learner trained with gradient descent, which often makes them simpler to put into practice.

Optimization Techniques

Optimizing meta-learning models during the meta-learning phase is crucial for achieving good performance on unseen tasks. Various optimization techniques are used to improve the meta-learner’s ability to generalize.

- Gradient Descent Methods: Gradient descent is the standard way to find the minimum of a loss function. Here it is used to update the meta-learner’s parameters so that the loss across the set of tasks decreases. Variations such as stochastic gradient descent (SGD) trade some accuracy in each update for speed.

- Meta-Gradient Descent: This approach involves computing gradients with respect to the learning algorithm’s own parameters, such as its initialization or learning rate, by differentiating through the learning steps. This allows the model to adapt its learning process to different tasks. It is the core of optimization-based methods such as MAML.

- Reinforcement Learning: Reinforcement learning methods can be incorporated into meta-learning. The meta-learner can be viewed as an agent interacting with an environment, which represents the task space. The agent learns policies for optimizing its performance across a variety of tasks.
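As a rough illustration of the meta-gradient idea, consider the simplest case of a single inner gradient step. The notation below is introduced here for illustration only: θ is the shared parameter vector, α the inner step size, and the train and test losses of task i are written with superscripts.

$$
\theta_i' = \theta - \alpha \nabla_\theta \mathcal{L}^{\text{train}}_i(\theta),
\qquad
\min_\theta \sum_i \mathcal{L}^{\text{test}}_i(\theta_i'),
\qquad
\nabla_\theta \mathcal{L}^{\text{test}}_i(\theta_i') = \left(I - \alpha \nabla^2_\theta \mathcal{L}^{\text{train}}_i(\theta)\right) \nabla_{\theta_i'} \mathcal{L}^{\text{test}}_i(\theta_i')
$$

The last expression is the chain rule applied through the inner update. The second-derivative term is what makes meta-gradients more expensive than ordinary gradients.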

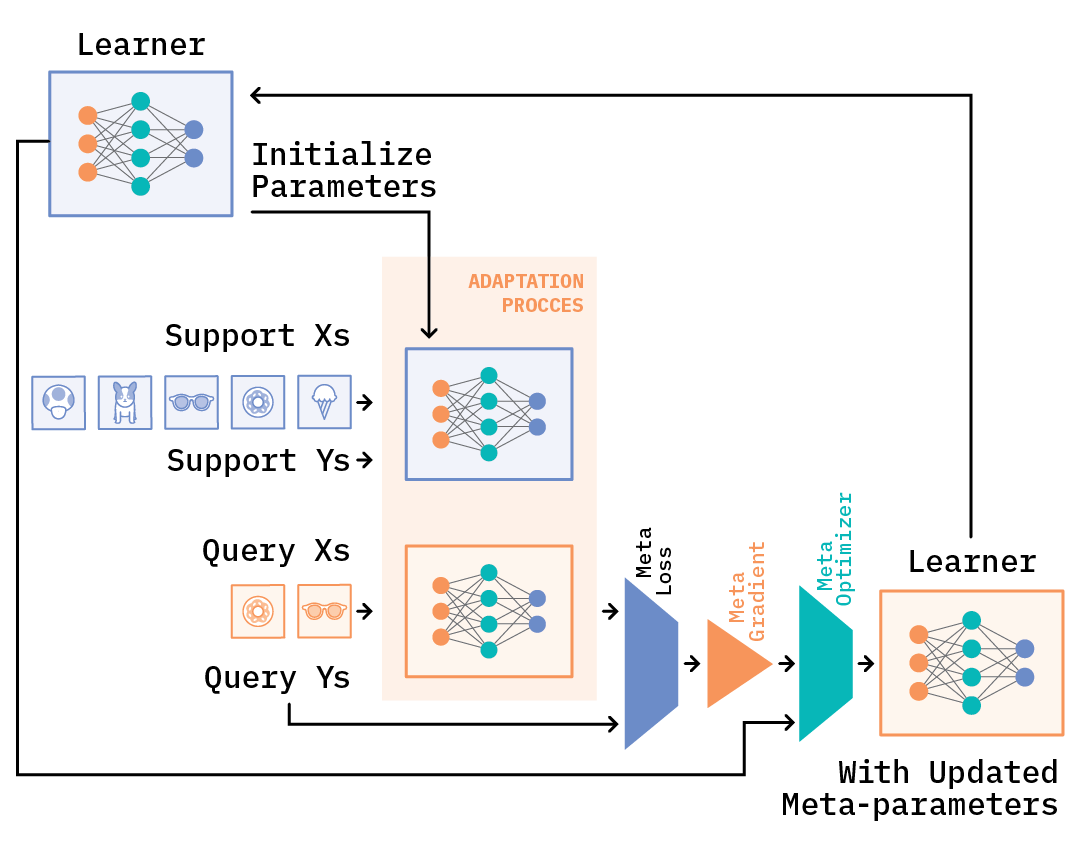

Implementing a Meta-Learning Method (Example: MAML)

Model-Agnostic Meta-Learning (MAML) is a popular meta-learning method. Here are the steps involved in implementing it:

- Define the task set: Create a collection of tasks. Each task consists of a training set and a test set.

- Initialize the base learner: Choose a base machine learning model (e.g., a neural network).

- Perform a few gradient updates for each task: This involves updating the base learner’s parameters for a small number of steps on each task’s training set. This step is crucial for adaptation to new tasks.

- Compute the loss: Calculate the loss on the corresponding test set. This measures how well the adapted base learner performs on unseen data.

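- Optimize the meta-learner: Use an optimization algorithm (like gradient descent) to adjust the meta-learner’s parameters, which in MAML are the shared initial parameters of the base learner. The gradient is taken through the adaptation steps, and the goal is to minimize the post-adaptation test loss across all tasks.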
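The steps above can be sketched on a toy problem. This is a minimal sketch, not a reference implementation. It assumes a one-parameter model `y_hat = w * x`, tasks of the form `y = a * x` with a slope `a` drawn per task, a single inner gradient step, and hand-written gradients so no autodiff library is needed. All function names, step sizes, and the task distribution are assumptions made for illustration.

```python
import random

def make_task(rng, n_train=5, n_test=10):
    """A toy task: y = a * x with a task-specific slope a in [2, 4]."""
    a = rng.uniform(2.0, 4.0)
    def draw(n):
        xs = [rng.uniform(-1.0, 1.0) for _ in range(n)]
        return xs, [a * x for x in xs]
    return draw(n_train), draw(n_test)

def loss(w, xs, ys):
    """Mean squared error of the one-parameter model y_hat = w * x."""
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

def grad(w, xs, ys):
    """Derivative of the mean squared error with respect to w."""
    return sum(2.0 * x * (w * x - y) for x, y in zip(xs, ys)) / len(xs)

def adapt(w, xs, ys, alpha):
    """Inner loop: one gradient step on a task's training set."""
    return w - alpha * grad(w, xs, ys)

def maml_train(steps=500, tasks_per_step=4, alpha=0.1, beta=0.1, seed=0):
    rng = random.Random(seed)
    w = 0.0  # shared initialization that meta-training adjusts
    for _ in range(steps):
        meta_grad = 0.0
        for _ in range(tasks_per_step):
            (x_tr, y_tr), (x_te, y_te) = make_task(rng)
            w_adapted = adapt(w, x_tr, y_tr, alpha)
            # d(w_adapted)/dw for this model: 1 - alpha * (second derivative
            # of the training loss), which is 2 * mean(x^2) here
            d_adapt = 1.0 - alpha * 2.0 * sum(x * x for x in x_tr) / len(x_tr)
            # Chain rule: gradient of the test loss w.r.t. the initialization
            meta_grad += grad(w_adapted, x_te, y_te) * d_adapt
        w -= beta * meta_grad / tasks_per_step  # outer (meta) update
    return w

w_meta = maml_train()
```

Because the slopes in this toy setup are centered on 3, `w_meta` should settle near that value, from which one inner step moves the model toward any individual task. A practical implementation would normally rely on an autodiff framework to differentiate through the inner loop rather than deriving `d_adapt` by hand.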

Meta-Learning Algorithms and Applications

| Algorithm | Description | Applications |
|---|---|---|
| MAML | Model-Agnostic Meta-Learning; learns a model that quickly adapts to new tasks by performing a few gradient updates on each task. | Few-shot learning, image classification, natural language processing. |
| Model-Based Meta-Learning | Learns a model of the learning process itself. | Robotics, reinforcement learning, personalized learning systems. |
| Prototypical Networks | Learns prototypes for different classes to facilitate fast classification on new tasks. | Few-shot image classification, object recognition. |
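To make the prototype idea in the table concrete, here is a small sketch of the classification step only. It assumes the examples have already been mapped to embedding vectors. In an actual Prototypical Network that embedding is learned, which this sketch leaves out. The class names and vectors are hypothetical.

```python
import math

def prototypes(support):
    """Average the support vectors of each class into one prototype."""
    protos = {}
    for label, vectors in support.items():
        dim = len(vectors[0])
        protos[label] = [sum(v[i] for v in vectors) / len(vectors)
                         for i in range(dim)]
    return protos

def classify(query, protos):
    """Return the label whose prototype is nearest in Euclidean distance."""
    return min(protos, key=lambda label: math.dist(query, protos[label]))

# Hypothetical 2-D embeddings for a 2-way, 2-shot task
support = {
    "cat": [[0.9, 0.1], [1.1, -0.1]],
    "dog": [[-1.0, 0.2], [-0.8, 0.0]],
}
protos = prototypes(support)
```

A new class can be handled by adding a few labeled vectors for it to `support`, without retraining anything. This is why the approach suits few-shot classification.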

Data Considerations

Data is the lifeblood of any machine learning model, and meta-learning is no exception. Choosing the right data, preparing it effectively, and understanding its nuances are critical to success. The meta-learning process relies heavily on datasets that can generalize well across various tasks, providing insights into learning patterns rather than just specific task solutions. The quality and quantity of data directly impact the performance and robustness of meta-learning models.

Proper preprocessing and careful selection of datasets are paramount to extracting the most value from the data and achieving desired outcomes. Handling diverse data formats is also a key aspect, ensuring that all information is usable and consistent for the meta-learning algorithms.

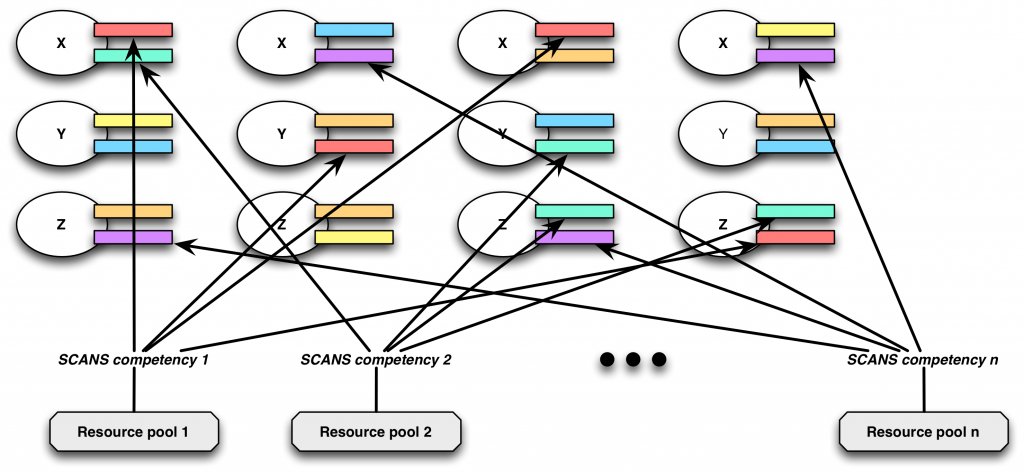

Types of Data Used in Meta-Learning

Meta-learning algorithms often use datasets composed of multiple tasks, each with its own training and testing data. These datasets represent a collection of learning experiences, allowing the model to learn from diverse scenarios and generalize better to new tasks. The data typically comprises a set of source tasks, and a separate set of target tasks. The source tasks are used for training the meta-learner, while the target tasks evaluate its performance.

Different tasks within the dataset should represent a wide range of challenges to promote robust generalization. Examples include image classification, natural language processing, and reinforcement learning tasks. The specific types of data depend on the meta-learning task.
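One common way to build such multi-task data from a single labeled dataset is to sample small "N-way K-shot" episodes from it. The sketch below assumes the data is held as a dictionary from class name to a list of examples. The function name, the toy dataset, and the terms support set and query set (for each task's training and testing data) are conventions assumed for illustration.

```python
import random

def sample_episode(data_by_class, n_way, k_shot, n_query, rng):
    """Draw one N-way K-shot task: a support (train) and query (test) set."""
    classes = rng.sample(sorted(data_by_class), n_way)
    support, query = [], []
    for episode_label, cls in enumerate(classes):
        # Sample without replacement so support and query never overlap
        examples = rng.sample(data_by_class[cls], k_shot + n_query)
        support += [(x, episode_label) for x in examples[:k_shot]]
        query += [(x, episode_label) for x in examples[k_shot:]]
    return support, query

# Hypothetical toy dataset: 6 classes with 10 integer "examples" each
toy_data = {f"class_{c}": [c * 100 + i for i in range(10)] for c in range(6)}
support_set, query_set = sample_episode(
    toy_data, n_way=3, k_shot=2, n_query=4, rng=random.Random(0)
)
```

Labels are re-indexed from 0 within each episode, so the model cannot memorize a fixed mapping from class to label and has to rely on the support set.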

Importance of Data Preprocessing

Data preprocessing is crucial in meta-learning to ensure data quality and consistency. It involves transforming raw data into a suitable format for meta-learning algorithms. This includes cleaning the data by handling missing values, outliers, and inconsistencies. Data normalization or standardization is also necessary to prevent features with larger values from dominating the learning process. Preprocessing ensures that all tasks in the dataset are on a similar scale, which is vital for accurate and unbiased meta-learning.

Without proper preprocessing, the meta-learner might focus on the characteristics of a specific task or dataset instead of learning generalizable patterns, leading to poor performance on unseen tasks.
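As a small illustration of putting tasks on a similar scale, the sketch below standardizes the features of one task using statistics computed from that task's training rows only, then applies the same transform to its test rows. The helper names and the numbers are hypothetical, and per-task standardization is only one of several reasonable choices.

```python
import statistics

def fit_scaler(rows):
    """Per-feature mean and standard deviation from a task's training rows."""
    columns = list(zip(*rows))
    means = [statistics.fmean(col) for col in columns]
    # Fall back to 1.0 so constant features do not cause division by zero
    stds = [statistics.pstdev(col) or 1.0 for col in columns]
    return means, stds

def transform(rows, scaler):
    """Standardize rows with previously fitted means and deviations."""
    means, stds = scaler
    return [[(v - m) / s for v, m, s in zip(row, means, stds)] for row in rows]

# Hypothetical task whose two features live on very different scales
train_rows = [[1.0, 1000.0], [2.0, 2000.0], [3.0, 3000.0]]
test_rows = [[2.0, 4000.0]]

scaler = fit_scaler(train_rows)  # statistics come from training rows only
train_scaled = transform(train_rows, scaler)
test_scaled = transform(test_rows, scaler)
```

Fitting the scaler on the training rows alone keeps information from the test rows out of the adaptation step.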

Handling Different Data Formats

Meta-learning datasets can include various data formats, such as tabular data, images, text, and time-series data. Handling these diverse formats requires specific preprocessing techniques. For instance, tabular data might need feature scaling, while image data might require resizing or augmentation. Text data could benefit from vectorization techniques like TF-IDF or word embeddings. Proper format conversion and transformation are essential for ensuring compatibility across different tasks.

Each data type has unique preprocessing steps, and these need to be carefully considered.

Selecting Datasets for Meta-Learning

Selecting suitable datasets is critical for meta-learning. Datasets should be representative of the types of tasks the meta-learner will encounter. Datasets with a large number of tasks and diverse characteristics generally lead to better generalization. Consideration should be given to the distribution of data points within each task, ensuring a balance in difficulty and representation. The dataset should cover a wide range of complexities and characteristics.

For instance, datasets for image classification should include various objects, backgrounds, and lighting conditions.

Preparing Data for Meta-Learning Algorithms

The specific preparation steps for meta-learning algorithms depend on the chosen algorithm. For example, some algorithms might require specific data structures, while others may need data to be presented in a particular format. Common preprocessing steps include data splitting into training and validation sets for the meta-learner, and into training and testing sets for each task. The data should be formatted in a way that allows the algorithm to effectively learn from the various tasks.

Properly structured data ensures the algorithm can identify generalizable patterns and learn efficiently. This often involves defining appropriate metrics for measuring performance on each task and creating a representation that encapsulates the task’s key characteristics.
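The outer split described above is usually made at the level of tasks or classes rather than individual examples, so that evaluation tasks are unseen during meta-training. A minimal sketch, assuming tasks are built from classes and using hypothetical split names and sizes:

```python
import random

def split_classes(class_names, n_val, n_test, seed=0):
    """Partition classes into disjoint meta-train / meta-val / meta-test pools."""
    names = sorted(class_names)
    random.Random(seed).shuffle(names)  # seeded for a reproducible split
    n_train = len(names) - n_val - n_test
    return {
        "meta_train": names[:n_train],
        "meta_val": names[n_train:n_train + n_val],
        "meta_test": names[n_train + n_val:],
    }

splits = split_classes([f"class_{i}" for i in range(20)], n_val=4, n_test=4)
```

Each pool is then turned into tasks separately, and each task gets its own training and testing portion.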

Model Design and Implementation

Designing and implementing meta-learning models requires careful consideration of various factors, including the choice of architecture, the dataset characteristics, and the desired performance metrics. The process involves selecting a suitable model structure, adapting it for meta-learning tasks, and ensuring efficient training and evaluation. Successful meta-learning model design hinges on understanding the intricacies of the target task and the characteristics of the training data.

Above all, the chosen architecture must be capable of learning effective strategies for quickly adapting to new tasks, since this adaptability is what the model’s success in meta-learning depends on.

Choosing Appropriate Architectures

Selecting the right architecture for a meta-learning model is a crucial step. The architecture needs to balance the need for generalization across tasks with the ability to efficiently learn task-specific knowledge. Factors like the complexity of the tasks, the size of the datasets, and the computational resources available influence the choice. A model capable of quickly adapting to new tasks and generalizing effectively across various tasks is highly desirable.

Steps Involved in Implementing Meta-Learning Models

Implementing meta-learning models involves several key steps:

- Dataset Preparation: Carefully prepare the dataset, ensuring appropriate representation of the meta-learning tasks and appropriate task-specific features. This includes splitting the data into meta-training and meta-validation sets for model evaluation and hyperparameter tuning. Data augmentation techniques might be employed to enhance the dataset’s representativeness and robustness. This process is critical for model generalizability.

- Model Selection: Choose an appropriate model architecture based on the characteristics of the meta-learning task and the available resources. Consider architectures like neural networks, support vector machines, or other suitable models for the given task.

- Hyperparameter Tuning: Carefully tune hyperparameters to optimize model performance on the meta-validation set. This stage often involves using techniques like grid search or random search to find optimal hyperparameter values. The hyperparameters significantly impact the model’s ability to learn effective strategies across tasks.

- Meta-Training: Train the meta-learning model on the meta-training set. This phase aims to learn a strategy for quickly adapting to new tasks. The training process should be monitored carefully, ensuring convergence and stability.

- Evaluation: Evaluate the trained meta-learning model on the meta-validation and meta-test sets to assess its performance on unseen tasks. The evaluation metrics should reflect the specific requirements of the meta-learning task.
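The hyperparameter tuning step can be illustrated with a small grid search over one meta-learning hyperparameter, the inner-loop learning rate. The sketch below assumes a toy setting: a one-parameter model `y_hat = w * x`, validation tasks `y = a * x` with a slope drawn per task, a fixed initialization `w0`, and one inner gradient step. The candidate values and all names are assumptions made for illustration.

```python
import random

def post_adaptation_loss(w0, alpha, rng, n_tasks=200, k_shot=10):
    """Mean test loss after one inner gradient step, averaged over tasks."""
    total = 0.0
    for _ in range(n_tasks):
        a = rng.uniform(2.0, 4.0)  # task-specific slope
        x_tr = [rng.uniform(-1.0, 1.0) for _ in range(k_shot)]
        x_te = [rng.uniform(-1.0, 1.0) for _ in range(k_shot)]
        # Gradient of the mean squared training error at w0
        g = sum(2.0 * x * (w0 * x - a * x) for x in x_tr) / k_shot
        w = w0 - alpha * g  # adapted parameter
        total += sum((w * x - a * x) ** 2 for x in x_te) / k_shot
    return total / n_tasks

def grid_search(candidates, w0=3.0, seed=0):
    """Score every candidate on the same validation tasks; return the best."""
    scores = {
        alpha: post_adaptation_loss(w0, alpha, random.Random(seed))
        for alpha in candidates
    }
    return min(scores, key=scores.get), scores

best_alpha, scores = grid_search([0.01, 0.1, 0.5, 1.0, 1.5])
```

Re-seeding the generator for every candidate means all candidates are scored on identical validation tasks, so differences in score come from the hyperparameter rather than from task sampling.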

Comparing and Contrasting Model Architectures

Different architectures have varying strengths and weaknesses. The selection depends heavily on the characteristics of the meta-learning task. Some popular architectures include:

- Model-Agnostic Meta-Learning (MAML): MAML learns a model that quickly adapts to new tasks by updating the parameters of the base model on a small number of examples from the new task. This approach is relatively simple to implement.

- Meta-Network: A meta-network is a model that learns a function to produce parameters for models on new tasks. This approach is more complex but can potentially achieve better performance on diverse tasks.

- Learning to Learn: The learning-to-learn framework explicitly focuses on learning how to learn. This often involves hierarchical models, which can be beneficial for complex tasks.

Summary of Model Architectures

| Architecture | Pros | Cons |
|---|---|---|
| Model-Agnostic Meta-Learning (MAML) | Relatively simple to implement, effective on many tasks | Might not perform optimally on very complex tasks |
| Meta-Network | Potentially better performance on complex tasks, more flexible | More complex to implement, computationally more expensive |
| Learning to Learn | Potentially better performance for hierarchical or complex tasks | More complex to implement, computationally demanding |

Evaluation Metrics

Evaluating meta-learning models requires careful consideration of appropriate metrics. Simply achieving high accuracy on a few tasks isn’t enough; we need to assess how well the model generalizes across diverse tasks and datasets. The chosen metrics must reflect the meta-learning paradigm, focusing on the model’s ability to learn quickly and efficiently from few examples. This section delves into crucial evaluation metrics and their significance in meta-learning.

Key Metrics for Meta-Learning Performance

Meta-learning, unlike traditional machine learning, focuses on learning *how* to learn. Evaluating its performance therefore requires metrics that capture the efficiency and generalization ability of the learned learning algorithm. Accuracy still matters, but so do measures of learning time and data efficiency.

Accuracy and its Limitations

Accuracy, a common metric, measures the percentage of correctly classified instances. However, in meta-learning, a single accuracy number on a set of tasks can be misleading. A model might perform well on a specific set of tasks but fail to adapt to novel, unseen tasks. Therefore, a more nuanced approach is needed to truly assess the effectiveness of a meta-learning model.

Few-Shot Learning Performance

A crucial aspect of meta-learning is its ability to perform well with limited training data (few-shot learning). Metrics that specifically measure performance under these conditions are vital. For example, considering the number of examples required for the model to reach a certain level of accuracy provides valuable insights.
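One simple way to express "examples required to reach a certain accuracy" is to record accuracy at several shot counts and report the smallest count that meets a target. The helper below is a sketch. The numbers in `curve` are made-up placeholders, not measured results.

```python
def shots_to_reach(accuracy_by_shots, target):
    """Smallest number of shots whose accuracy meets the target, else None."""
    for shots in sorted(accuracy_by_shots):
        if accuracy_by_shots[shots] >= target:
            return shots
    return None

# Placeholder values: mean accuracy at 1, 5, 10, and 20 shots
curve = {1: 0.48, 5: 0.71, 10: 0.80, 20: 0.84}
needed = shots_to_reach(curve, target=0.75)
```

Returning `None` when the target is never reached keeps that case distinct from a genuine shot count.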

Meta-Accuracy and Task-Specific Metrics

Meta-accuracy is a key metric for evaluating the overall performance of the meta-learning model. It is calculated by averaging the model’s accuracy after adaptation across many tasks, and it is reported as meta-validation or meta-test accuracy depending on which set of tasks it is computed on. Alongside meta-accuracy, task-specific metrics, like accuracy, precision, and recall, can be evaluated on each individual task to understand the model’s strengths and weaknesses across a variety of tasks.

Learning Efficiency and Generalization

Beyond accuracy, assessing the efficiency of the learned algorithm is critical. Metrics like the time required for the model to adapt to new tasks, or the number of training examples needed for satisfactory performance are key indicators of a model’s effectiveness. Furthermore, generalization ability—how well the learned algorithm adapts to new, unseen tasks—is a critical aspect of meta-learning performance, requiring separate evaluation.

Table of Evaluation Metrics

| Metric | Description | Applications |
|---|---|---|
| Meta-Accuracy | Average accuracy across multiple tasks. | Overall performance assessment of the meta-learning model. |
| Few-Shot Accuracy | Accuracy achieved with a limited number of training examples per task. | Evaluating the model’s ability to learn from limited data. |
| Task-Specific Accuracy | Accuracy on individual tasks. | Identifying tasks where the model excels or struggles. |
| Learning Time | Time taken for the model to adapt to a new task. | Evaluating the efficiency of the learned learning algorithm. |
| Generalization Ability | Performance on novel, unseen tasks. | Assessing the model’s ability to adapt to new data distributions. |
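Meta-accuracy and task-specific accuracy from the table can be computed together. The sketch below averages per-task accuracies, attaches a normal-approximation 95% interval to show variability across tasks, and picks out the weakest task. The accuracy values are hypothetical placeholders. With only a handful of tasks the normal approximation is rough, so the interval is indicative only.

```python
import math
import statistics

def meta_accuracy(task_accuracies, z=1.96):
    """Mean accuracy across tasks with a normal-approximation 95% interval."""
    mean = statistics.fmean(task_accuracies)
    std_err = statistics.stdev(task_accuracies) / math.sqrt(len(task_accuracies))
    return mean, z * std_err

# Placeholder per-task accuracies from five evaluation tasks
per_task = [0.80, 0.60, 0.90, 0.70, 0.75]
mean_acc, half_width = meta_accuracy(per_task)

# Index of the task where the model struggles most
worst_task = min(range(len(per_task)), key=per_task.__getitem__)
```

Reporting the interval alongside the mean makes it easier to tell a real improvement from variation in which tasks happened to be sampled.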

Real-World Applications

Meta-learning, the art of learning to learn, is finding its way into diverse real-world applications. Its ability to accelerate learning in complex domains and to work with limited labeled data makes it well suited to problems where collecting large task-specific datasets is impractical. This section explores practical scenarios where meta-learning is applied and the industries that benefit from it.

Applications in Robotics

Meta-learning is proving valuable in robotics, particularly in scenarios requiring rapid adaptation to new environments or tasks. For example, a robot trained with meta-learning can quickly learn to manipulate novel objects without extensive prior experience with each specific object. This capability is crucial for tasks like warehouse automation or assistive robotics where the robot needs to handle various items with differing shapes and sizes.

The robot learns the general principles of manipulation through a diverse set of training experiences, allowing it to generalize its knowledge to new situations.

Applications in Natural Language Processing

Meta-learning is also being applied to natural language processing (NLP). In tasks like question answering or text summarization, meta-learning models can adapt to specific datasets and domains more efficiently than models trained from scratch for each one. For instance, a meta-learning model trained on a vast collection of question-answer pairs could quickly learn to answer questions in a new, previously unseen domain by leveraging its knowledge from prior experiences.

This adaptation is particularly important for handling specialized domains, such as medical texts or legal documents, where domain-specific vocabulary and phrasing require tailored learning.

Applications in Computer Vision

Meta-learning shines in computer vision applications requiring quick adaptation to variations in data or tasks. Imagine a self-driving car encountering a new type of traffic sign. A meta-learning model can quickly recognize and classify the sign by leveraging prior experience with similar shapes and patterns. Similarly, meta-learning can be used to improve object recognition in images with varied lighting conditions, angles, or occlusions.

This rapid adaptation is essential for maintaining performance in dynamic and unpredictable environments, such as urban driving conditions.

Industries Benefiting from Meta-Learning

Meta-learning is proving beneficial across numerous industries:

- Robotics: Meta-learning enables robots to adapt to new tasks and environments quickly, crucial for automation in manufacturing, warehousing, and healthcare.

- Healthcare: Meta-learning can aid in medical diagnosis and treatment by learning from diverse patient data, accelerating the learning process and potentially improving diagnostic accuracy.

- Finance: Meta-learning can be used to detect fraudulent transactions and predict market trends by quickly adapting to changing patterns in financial data.

- E-commerce: Meta-learning models can personalize recommendations for products and services, enhancing customer experience and increasing sales conversion rates.

- Customer Service: Meta-learning can improve the efficiency and effectiveness of chatbots and virtual assistants by enabling them to quickly learn new customer interactions and adapt to different communication styles.

Challenges and Limitations

Meta-learning, while promising, faces several hurdles that limit its widespread adoption and effectiveness. These challenges stem from the inherent complexities of learning to learn, requiring careful consideration and addressing potential pitfalls. Understanding these limitations is crucial for developing robust and reliable meta-learning systems. The core difficulty lies in the inherent need for a large quantity of diverse data for both meta-training and meta-testing.

Effective meta-learning models require substantial resources, often exceeding those available for traditional machine learning tasks. Furthermore, evaluating the performance of meta-learning models can be tricky, as the metrics used often need to be carefully tailored to reflect the specific learning task being addressed.

Data Scarcity and Quality

The effectiveness of meta-learning heavily relies on the quality and quantity of data used for meta-training. Insufficient or poorly represented data can lead to suboptimal performance in the target learning task. This issue is exacerbated by the fact that creating datasets tailored for meta-learning can be significantly more challenging than creating datasets for traditional machine learning.

- Limited availability of labeled data for diverse learning tasks often hinders the development of generalizable meta-learning models. For example, if a meta-learning model is trained on a dataset of image classification tasks with a limited set of classes, its performance on a new task involving a completely different set of classes could be significantly impacted.

- The quality of the meta-training data can directly affect the generalization capabilities of the meta-learner. Noisy or inconsistent data can lead to inaccurate or biased results.

- Creating synthetic data to address data scarcity can be problematic. While synthetic data can be useful, it needs to be carefully generated to reflect the nuances of the target learning tasks, which can be a challenging task in itself.

Model Complexity and Interpretability

Meta-learning models can be intricate, making them difficult to interpret and debug. The complexity of these models can obscure the reasons behind their decisions, which can hinder their application in domains where transparency is crucial.

- The black-box nature of many meta-learning models makes it challenging to understand how they learn to learn. This lack of transparency can be problematic when deploying meta-learning models in real-world scenarios.

- Overfitting to the meta-training data is a significant concern. A meta-learning model that performs exceptionally well on the meta-training data may fail to generalize to unseen data, thus underperforming on meta-test data.

Evaluation Challenges

Evaluating the performance of meta-learning models presents unique challenges. Traditional machine learning metrics may not be sufficient for assessing meta-learning performance, and new metrics need to be developed and validated.

- Defining appropriate evaluation metrics for meta-learning models is essential. A common metric, for example, might be the average performance across multiple learning tasks. This needs to be tailored to the specific tasks and datasets used.

- The evaluation process should consider the variability in the performance of meta-learning models across different learning tasks, and account for the inherent difficulty of each task.

- A meta-learning model may perform well on some tasks but poorly on others. The evaluation should provide a holistic view of the model’s capabilities and limitations across a spectrum of tasks.

Computational Cost

Training and deploying meta-learning models can be computationally expensive, requiring significant resources in terms of time and computational power.

- The computational cost of meta-learning can be substantial, especially when dealing with large datasets and complex models. This can hinder the practicality of applying meta-learning in resource-constrained environments.

- The need for specialized hardware and software can further increase the computational demands of meta-learning.

Conclusion

In conclusion, the meta learning phase represents a significant advancement in machine learning, offering a powerful framework for creating highly adaptable and effective models. By understanding its various components and applications, we can unlock the full potential of this transformative approach to machine learning. Further research and development will undoubtedly pave the way for even more sophisticated and innovative solutions.